Scaling AI voice agents for contact centers is not just about choosing the right model. It is about designing systems that can handle real-time audio, sustained concurrency, and strict reliability requirements. While early prototypes often work in controlled environments, production contact center traffic exposes hidden bottlenecks in latency, cost, and infrastructure.

This guide walks through how AI voice agent APIs behave at scale, why contact center workloads are different, and what architectural decisions actually matter. By the end, you will understand how to design and scale enterprise voice AI systems that remain stable, responsive, and cost-efficient under real-world call volumes.

What Is An AI Voice Agent API In A Contact Center Context?

An AI voice agent API is the interface that connects real phone calls to an automated decision system that can listen, think, and respond in real time. However, in a contact center, this setup is far more complex than a simple voice bot.

At a technical level, contact center AI voice agents are built from multiple systems working together:

- Telephony and real-time audio transport

- Speech-to-Text (STT) for understanding callers

- A Large Language Model (LLM) for reasoning and intent handling

- Retrieval systems (RAG) for business data

- Tool calling to trigger backend actions

- Text-to-Speech (TTS) to respond naturally

Because of this, voice agents are distributed systems, not single applications.

As a result, scaling an AI voice agent API is not about scaling one service. Instead, it is about scaling all components together without breaking the call experience.

Why Are Contact Center AI Voice Agents Harder To Scale Than Chat Systems?

At first glance, voice and chat may seem similar. Both use LLMs. Both process user input. However, voice introduces strict limits that chat systems do not face.

Key Differences Between Voice And Chat AI

| Area | Chat AI | Voice AI |

| Latency Tolerance | Seconds are acceptable | 200–300 ms feels slow |

| Session Length | Short-lived | Long-lived (minutes) |

| Data Type | Text | Continuous audio streams |

| Failure Impact | Retry silently | Call drops or silence |

| Cost Model | Per request | Per minute |

Because of these limits, scaling AI calls requires more than adding servers.

Additionally, voice sessions are stateful. Each call maintains context, audio streams, and active model connections. Therefore, concurrency grows quickly and unpredictably during peak hours.

As a result, contact center AI voice agents demand a different approach to scaling.

What Does A Typical AI Voice Agent System Look Like?

Before discussing scale, it is important to understand the baseline architecture.

A standard enterprise voice AI system follows this flow:

- A caller speaks into the phone

- Audio is streamed to an STT engine

- Text is passed to an LLM

- The LLM may query internal systems (RAG or tools)

- A response is generated

- TTS converts text to audio

- Audio is streamed back to the caller

Although this flow looks simple, each step introduces latency, cost, and failure risk.

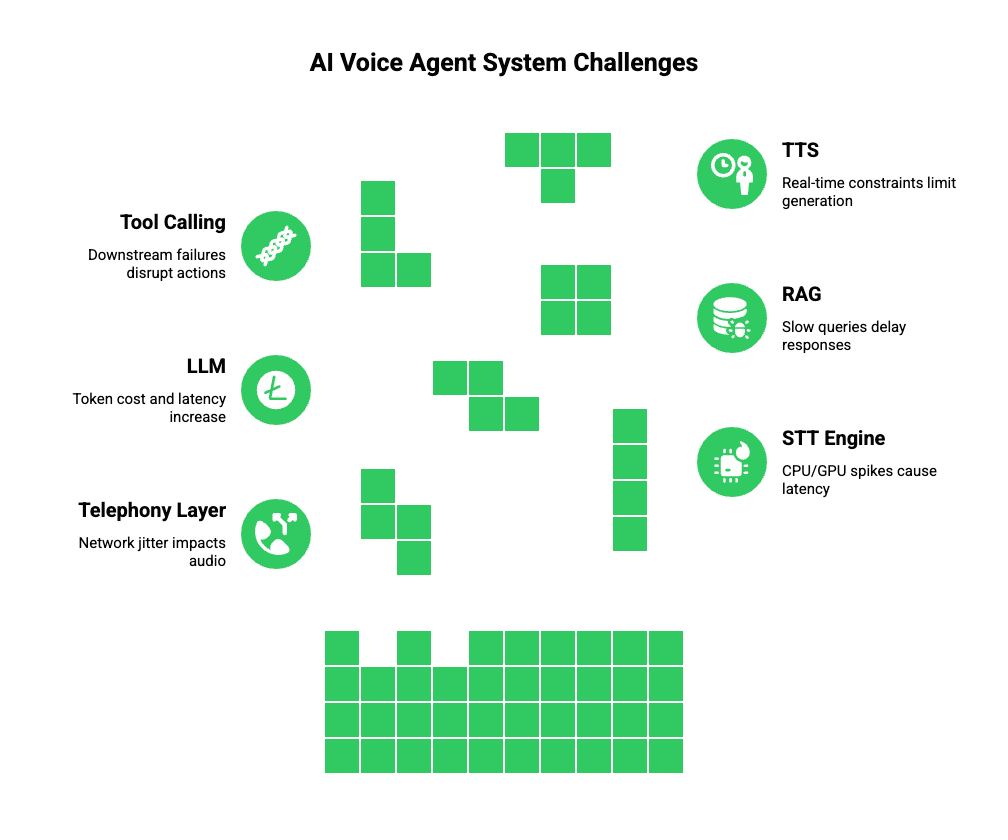

Core Components And Their Responsibilities

| Component | Responsibility | Scaling Risk |

| Telephony Layer | Call setup and audio transport | Network jitter |

| STT | Convert speech to text | CPU/GPU spikes |

| LLM | Reasoning and intent | Token cost, latency |

| RAG | Fetch business data | Slow queries |

| Tool Calling | Execute actions | Downstream failures |

| TTS | Generate speech | Real-time constraints |

Because each component scales differently, treating the system as one unit often leads to failure.

Why Latency Becomes The First Scaling Bottleneck

Latency is the most visible problem in enterprise voice AI.

Humans expect near-instant responses during conversation. Even a half-second delay feels unnatural. Therefore, every extra hop in the system matters.

In practice, streaming ASR vendors recommend small frame sizes (100 ms) to limit buffer latency while preserving recognition efficiency – an important tuning knob when designing low-latency pipelines.

Latency accumulates from multiple sources:

- Audio buffering

- Network round trips

- STT inference time

- LLM reasoning time

- Tool execution

- TTS synthesis

Individually, these delays seem small. However, together they compound.

For example:

| Stage | Avg Time (ms) |

| Audio Capture | 40 |

| STT | 120 |

| LLM | 250 |

| Tool Call | 150 |

| TTS | 180 |

| Total | 740 ms |

At this point, the conversation already feels slow.

Therefore, when scaling AI calls, latency optimization must happen before traffic scaling.

Why Concurrency Is The Real Scaling Challenge

Most teams underestimate concurrency.

A chat system can handle thousands of users because requests are short and stateless. In contrast, voice calls remain active for several minutes.

This creates three problems:

- Long-lived connections consume memory and compute

- Streaming audio requires continuous bandwidth

- Session state must be preserved without interruption

For example, 1,000 concurrent calls may require:

- 1,000 active STT streams

- 1,000 LLM contexts

- 1,000 TTS pipelines

If one component slows down, the entire call degrades.

Therefore, scaling contact center AI voice agents means handling sustained concurrency, not burst traffic.

Why Cost Grows Faster When Scaling AI Voice Agents

Cost is another major constraint.

Unlike chat, voice AI pricing often depends on time, not usage volume. Every second of delay increases cost.

Key cost drivers include:

- STT billed per audio minute

- LLM billed per token

- TTS billed per character or second

- Telephony billed per call minute

If latency increases, costs rise even when traffic stays flat.

Cost Comparison: Optimized vs Unoptimized Voice Pipeline

| Metric | Optimized | Unoptimized |

| Avg Call Duration | 3 min | 4.5 min |

| LLM Tokens | Controlled | Excessive |

| STT Usage | Streamed | Reprocessed |

| Cost Per Call | Lower | 40–60% higher |

Because of this, scaling AI calls without architectural control leads to unpredictable spending.

Why Reliability Matters More Than Intelligence In Contact Centers

In contact centers, failure is visible.

A dropped chat can be retried. A dropped call leads to frustration, churn, and lost revenue.

Common failure scenarios include:

- STT service slowdown

- LLM timeout

- Tool API failure

- Network packet loss

Therefore, enterprise voice AI systems must handle partial failure gracefully.

This means:

- Fallback responses

- Timeout handling

- Degraded but functional conversations

Reliability, not model accuracy, defines success at scale.

What Makes Contact Center Traffic Unique?

Contact centers behave differently from consumer apps.

Key traffic patterns include:

- Sudden spikes during business hours

- Seasonal surges

- Regional call distribution

- Regulatory constraints

As a result, enterprise voice AI must scale predictably and globally.

Unlike consumer bots, these systems cannot fail silently. They must meet uptime, security, and compliance requirements.

Transitioning From Prototype To Production Scale

Most teams begin with a working demo. However, scaling exposes hidden issues.

Common mistakes include:

- Hard-coding model choices

- Mixing telephony and logic layers

- Treating voice as a request-response flow

- Ignoring observability

Therefore, teams must rethink architecture early.

In the next part, we will explore:

- How scalable voice AI architectures are designed

- Where telephony infrastructure fits

- And how FreJun Teler enables enterprise-grade scaling without locking teams into a single AI stack

What Architecture Enables Scalable Enterprise Voice AI?

Once the scaling challenges are clear, the next step is choosing the right architecture. At this stage, many teams realize that their prototype design cannot survive contact center traffic.

Therefore, the goal is to build an architecture where each system scales independently.

Core Principles For Scaling AI Voice Agents

- Separate voice transport from intelligence

- Design for streaming, not request-response

- Keep conversation state outside the compute layers

- Expect partial failures and recover fast

As a result, modern enterprise voice AI systems follow a modular design.

How Should A Scalable AI Voice Agent API Be Structured?

A scalable AI voice agent API is not a single endpoint. Instead, it is a collection of coordinated services.

Recommended High-Level Structure

| Layer | Purpose |

| Voice Transport | Handles calls and audio streams |

| Speech Layer | STT and TTS services |

| Intelligence Layer | LLMs and intent handling |

| Knowledge Layer | RAG and data access |

| Action Layer | Tool calling and workflows |

| Observability | Logs, metrics, tracing |

This separation allows teams to scale one layer without breaking others. For example, STT can scale up during noisy call spikes, while LLM usage stays controlled.

Why Streaming-First Design Matters For Scaling AI Calls

Many early voice systems still rely on turn-based flows. However, turn-based systems wait for silence before processing. This increases latency and feels unnatural.

In contrast, streaming-first systems process audio continuously.

Streaming Vs Turn-Based Voice Processing

| Feature | Streaming | Turn-Based |

| Response Speed | Near real-time | Delayed |

| Conversation Flow | Natural | Robotic |

| Latency Control | Fine-grained | Limited |

| Scale Handling | Better | Poor |

Because of this, streaming is essential for enterprise voice AI.

More importantly, streaming allows partial responses. This means the system can start speaking while thinking, which reduces perceived delay.

How Do STT, LLM, And TTS Scale Differently?

Even with the right architecture, scaling fails if all components are treated the same.

Each system has unique constraints.

Speech-To-Text (STT)

- Scales with CPU/GPU usage

- Sensitive to background noise

- Requires low buffering for real-time accuracy

Large Language Models (LLMs)

- Scale based on token usage

- Context size affects cost

- Tool calls increase latency

Text-To-Speech (TTS)

- Must operate in real time

- Voice quality affects synthesis time

- Streaming output reduces delays

Because of these differences, routing logic becomes critical.

For example, short confirmations can use lightweight models. Meanwhile, complex issues can route to larger LLMs.

This approach reduces cost while improving stability.

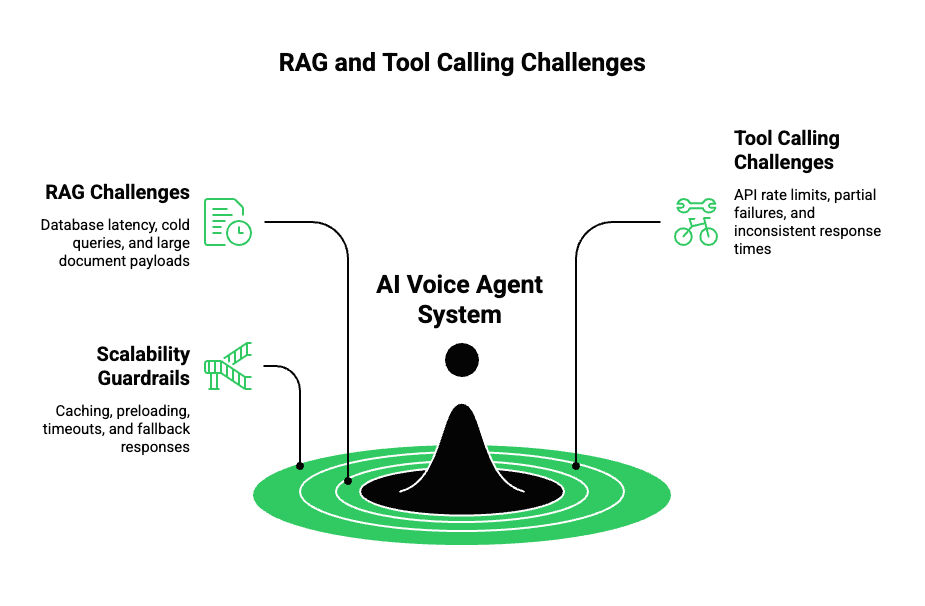

How Do RAG And Tool Calling Affect Contact Center Scale?

Enterprise voice AI must connect to real systems. However, external dependencies are often the weakest link.

Challenges Introduced By RAG

- Database latency

- Cold queries during peak traffic

- Large document payloads

Challenges Introduced By Tool Calling

- API rate limits

- Partial failures

- Inconsistent response times

Therefore, scalable systems apply guardrails:

- Cache frequently accessed data

- Preload session context

- Set strict timeouts

- Provide fallback responses

This ensures the call continues even if a backend system slows down.

Sign Up for FreJun Teler Today

How Should Teams Monitor AI Voice Agents At Scale?

Scaling without visibility is risky.

Voice systems require deeper observability than chat because failures are harder to detect.

Metrics That Matter For Contact Center AI Voice Agents

| Metric | Why It Matters |

| End-to-End Latency | Impacts user experience |

| Word Error Rate (WER) | Measures understanding |

| Call Completion Rate | Indicates reliability |

| Fallback Frequency | Signals system stress |

| Avg Call Duration | Affects cost |

In addition, tracing a single call across STT, LLM, and TTS helps teams debug issues quickly.

Where Does Voice Infrastructure Fit Into Scalable AI Systems?

At this point, one question becomes unavoidable:

How does audio move reliably between callers and AI systems at scale?

Voice infrastructure is not just call setup. It controls:

- Audio quality

- Latency

- Reliability

- Geographic routing

If the voice layer fails, no amount of AI optimization helps.

Therefore, enterprise voice AI systems require infrastructure built for real-time media streaming, not just dialing.

How Does FreJun Teler Enable Scalable AI Voice Agent APIs?

FreJun Teler acts as the voice infrastructure layer for AI systems. It does not replace LLMs, STT, or TTS. Instead, it connects them reliably to real phone calls.

What FreJun Teler Handles

- Real-time audio streaming for inbound and outbound calls

- Low-latency media transport across regions

- Scalable call concurrency

- Developer-first APIs and SDKs

What Teams Control

- Choice of LLM

- Choice of STT and TTS

- Conversation logic

- RAG and tool integrations

This separation is important. It allows teams to improve AI quality without reworking telephony.

Why This Matters For Scaling

| Problem | Traditional Telephony | With Teler |

| Latency Control | Limited | Fine-grained |

| AI Integration | Complex | Native |

| Model Flexibility | Low | High |

| Concurrency Handling | Rigid | Elastic |

As a result, teams can scale AI calls without locking into a single AI stack.

How Can Teams Combine Teler With Any AI Stack?

A typical production setup looks like this:

- Teler handles call setup and audio streaming

- Audio streams to chosen STT service

- Transcripts flow into LLM logic

- LLM triggers tools or RAG when needed

- Responses stream into TTS

- Audio streams back through Teler

Because Teler is model-agnostic, teams can:

- Swap LLMs as costs change

- Upgrade TTS voices without downtime

- Add new workflows without touching telephony

This flexibility is essential for long-term scaling.

What Are Best Practices For Scaling AI Voice Agents In Production?

After working with high-volume systems, several patterns emerge.

Proven Practices

- Start with streaming from day one

- Decouple voice from intelligence

- Route simple calls to lightweight models

- Cache aggressively

- Design for failure, not perfection

Most importantly, test at peak load early. Scaling issues rarely appear during demos.

What Should Founders And Engineering Leaders Consider Before Scaling?

Before committing to large-scale deployment, decision-makers should ask:

- Can this system handle 10x traffic without redesign?

- Are costs predictable at scale?

- Can models be replaced easily?

- Does the voice layer support global traffic?

The answers often reveal architectural gaps.

Final Thoughts

Scaling AI voice agents in contact centers is ultimately a systems challenge, not a model problem. Real success comes from combining low-latency voice transport, modular AI architecture, and reliable infrastructure that can sustain thousands of concurrent calls. Teams that separate telephony from intelligence gain flexibility, control costs, and avoid long-term lock-in. This is where a dedicated voice infrastructure layer becomes critical. FreJun Teler enables teams to connect any LLM, any STT, and any TTS to real phone calls using real-time streaming built for enterprise traffic. If you are planning to scale AI voice agents beyond pilots, Teler provides the foundation to do it reliably.

FAQs

- What is an AI voice agent API?

An AI voice agent API connects real-time phone audio to STT, LLM, tools, and TTS for automated voice conversations. - Why is scaling AI calls harder than chat?

Voice requires continuous streaming, low latency, and long-lived sessions, which increase infrastructure and cost complexity. - How many concurrent calls should systems plan for?

Enterprise systems should be designed for peak concurrency, often 5–10x average traffic, not steady-state usage. - What causes most latency in voice AI systems?

Latency usually comes from cumulative delays across audio buffering, STT processing, LLM inference, and TTS generation. - Is RAG necessary for contact center voice agents?

Yes. RAG enables real-time access to business data, which is essential for accurate and useful customer responses. - How do teams control AI voice agent costs?

By routing simple intents to lightweight models, caching responses, and minimizing unnecessary token usage. - Can voice AI systems handle partial failures?

Well-designed systems include timeouts, fallbacks, and degraded responses to keep calls active during failures. - Why is observability important for voice agents?

Without call-level metrics and tracing, teams cannot detect latency spikes, errors, or quality degradation. - Can teams change LLMs after going live?

Only if telephony and AI logic are decoupled; otherwise, model changes risk breaking production systems.

What infrastructure is most critical for scaling voice AI?

Low-latency, reliable voice streaming infrastructure is foundational; AI performance depends on it.