In the relentless pursuit of a perfect, real-time conversational AI, we have always been at the mercy of one unyielding constraint: the speed of light. For years, the standard architecture for voice recognition has been a round-trip to the cloud. A user speaks, their voice is captured, converted into data packets, and sent on a long journey across the internet to a powerful server in a distant data center.

The server processes the audio, and the resulting text is sent back. This journey, however fast, is never instantaneous. But what if the journey were only a few inches long, from the user’s mouth to the device in their hand? This is the promise of an on-device ready voice recognition SDK.

This architectural shift, moving the core Speech-to-Text (STT) processing from the cloud to the device itself, represents one of the most significant advancements in the quest for truly seamless human-to-machine interaction.

An on-device STT solution is not just about a modest speed boost; it is a fundamental rethinking of how we build voice experiences, unlocking new levels of responsiveness, reliability, and privacy. For developers building the next generation of voice-enabled applications, understanding and leveraging an offline recognition AI is becoming a critical competitive advantage.

What is the “Cloud Latency Tax”?

Before we can appreciate the benefits of on-device processing, we must first understand the inherent limitations of the cloud-based model. While the cloud offers immense power and scale, it comes with a built-in and unavoidable “latency tax” for any real-time application.

The Anatomy of the Round-Trip Delay

When a user speaks to an application that relies on a cloud-based voice recognition SDK, the data must go on a long and complex journey:

- Capture and Packetization: The device’s microphone captures the audio, and it is broken down into small data packets.

- The Outbound Journey: These packets travel from the user’s device, through their local Wi-Fi or cellular network, across the public internet, to the provider’s data center.

- Cloud Processing: The powerful servers in the cloud run the complex AI models to transcribe the audio into text.

- The Return Journey: The resulting text data must then travel all the way back across the internet to the user’s device.

This entire round-trip can easily take several hundred milliseconds, even under ideal network conditions. In the real world of congested networks and cross-continent communication, it can often take a full second or more. This is the latency that makes a cloud-based voice interaction feel “laggy.”
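
The four stages above can be added up as a simple latency budget. The sketch below is only illustrative: the class name `RoundTripBudget` and every millisecond figure are assumptions chosen to match the rough ranges described here, not measurements of any real service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoundTripBudget:
    """Per-stage delays in milliseconds. All values are assumed placeholders."""
    capture_ms: float      # capture and packetization on the device
    outbound_ms: float     # device -> provider's data center
    processing_ms: float   # cloud model inference
    return_ms: float       # data center -> device

    def total_ms(self) -> float:
        # The user-perceived delay is the sum of every stage in the round trip.
        return self.capture_ms + self.outbound_ms + self.processing_ms + self.return_ms


# Hypothetical scenarios, not benchmarks.
good_network = RoundTripBudget(capture_ms=20, outbound_ms=60, processing_ms=150, return_ms=60)
congested_network = RoundTripBudget(capture_ms=20, outbound_ms=450, processing_ms=150, return_ms=450)

for name, budget in [("good", good_network), ("congested", congested_network)]:
    print(f"{name}: {budget.total_ms():.0f} ms")
```

Under these assumed numbers the two network legs dominate the total, and they are exactly the legs that local processing removes.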

How Does On-Device Voice Recognition Change the Game?

An on-device ready voice recognition SDK eliminates this round-trip tax. It uses highly optimized, lightweight AI models that can run directly on the user’s own device: a smartphone, a tablet, an in-car system, or even a smart appliance.

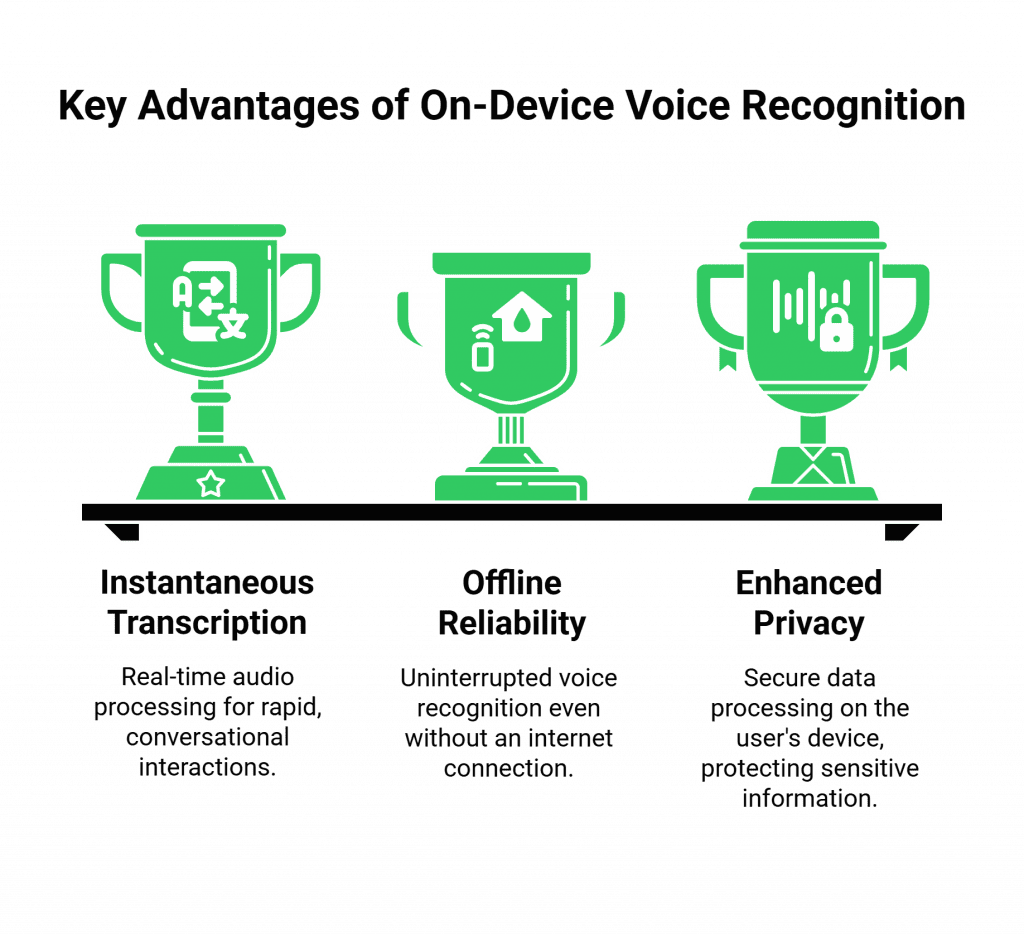

The Power of Instantaneous Transcription

With on-device STT, the entire recognition process happens locally.

- The microphone captures the audio.

- The SDK pipes this audio directly to the recognition engine running on the device’s own processor.

- The text is generated locally in a matter of milliseconds.

The journey is measured in inches, not in thousands of miles. This results in a transcription that is, for all practical purposes, instantaneous. This is a game-changer for any application that requires a rapid-fire, back-and-forth conversational flow.
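
The local flow described above can be sketched as a loop that hands each microphone frame straight to an engine running in the same process. This is a minimal sketch under stated assumptions: `LocalRecognizer` is a hypothetical interface, not the API of any particular SDK, and `EchoRecognizer` is a stand-in that fabricates words so the example runs without a real model.

```python
from typing import Iterable, Iterator, Optional, Protocol


class LocalRecognizer(Protocol):
    """Hypothetical interface for an on-device STT engine (not a real SDK API)."""

    def accept_frame(self, pcm: bytes) -> Optional[str]: ...


class EchoRecognizer:
    """Stand-in engine for this sketch: emits a fake word after every N frames."""

    def __init__(self, frames_per_word: int = 3) -> None:
        self._frames_per_word = frames_per_word
        self._seen = 0

    def accept_frame(self, pcm: bytes) -> Optional[str]:
        self._seen += 1
        if self._seen % self._frames_per_word == 0:
            return f"word{self._seen // self._frames_per_word}"
        return None


def transcribe_locally(frames: Iterable[bytes], engine: LocalRecognizer) -> Iterator[str]:
    """Feed audio frames directly to the local engine; no network call is involved."""
    for frame in frames:
        partial = engine.accept_frame(frame)
        if partial is not None:
            yield partial


# Six fake 20 ms frames of silence (16 kHz, 16-bit mono = 640 bytes per frame).
fake_mic = [bytes(640) for _ in range(6)]
words = list(transcribe_locally(fake_mic, EchoRecognizer()))
print(words)
```

Because the generator yields text as soon as the engine produces it, the application can react to partial results without waiting for the whole utterance.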

Unbreakable Reliability with Offline Recognition AI

A cloud-based system has a critical vulnerability: it is entirely dependent on a stable internet connection. If the user is in an area with spotty Wi-Fi, on a subway, or in a remote rural location, a cloud-based voice feature will simply fail. An offline recognition AI is immune to this.

Because the processing happens locally, it can provide fast and accurate transcriptions even when the device is completely offline. This is an essential feature for a huge range of mission-critical applications, from in-cab driver assistants to voice-controlled medical devices.
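
One way an application might use this property is to keep a local engine as a fallback when a network-dependent engine cannot be reached. The sketch below assumes two hypothetical stand-in functions, `cloud_transcribe` and `local_transcribe`; neither corresponds to a real SDK call, and the cloud stand-in always fails to simulate a dead connection.

```python
from typing import Callable, Tuple

Transcriber = Callable[[bytes], str]


def cloud_transcribe(audio: bytes) -> str:
    """Stand-in for a cloud STT call; always fails here, as if the network were down."""
    raise ConnectionError("no network route to the STT service")


def local_transcribe(audio: bytes) -> str:
    """Stand-in for an on-device engine; returns a fixed string for the sketch."""
    return "navigate to the nearest depot"


def transcribe_with_fallback(
    audio: bytes, primary: Transcriber, fallback: Transcriber
) -> Tuple[str, str]:
    """Try the primary engine; on a connection failure, use the local fallback."""
    try:
        return primary(audio), "primary"
    except (ConnectionError, TimeoutError):
        return fallback(audio), "fallback"


text, source = transcribe_with_fallback(bytes(640), cloud_transcribe, local_transcribe)
print(f"{source}: {text}")
```

An application that is on-device first would simply skip the primary call; the fallback pattern is shown only because it makes the connectivity dependency explicit.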

A New Paradigm for Privacy

For many applications, especially in healthcare, finance, and the enterprise, sending raw, sensitive voice data to a third-party cloud is a major security and privacy concern. An on-device STT solution offers a fundamentally more secure architecture.

- Data Stays Local: The user’s spoken words are processed on their device and never leave it. The raw audio is never transmitted over the internet or stored on a third-party server.

- Building a Privacy-Focused Speech SDK: Keeping audio on the device makes a privacy-focused speech SDK a strong choice for any application that handles Protected Health Information (PHI), Personally Identifiable Information (PII), or confidential business data. Because raw voice data is never transmitted, this architecture offers a strong privacy guarantee and can simplify the process of achieving compliance with regulations like HIPAA and GDPR.

This table clearly summarizes the architectural advantages of an on-device approach.

| Feature | Cloud-Based Voice Recognition | On-Device Ready Voice Recognition SDK |
| --- | --- | --- |
| Latency | High, due to the network round-trip. | Extremely low, as processing is local. |
| Connectivity | Requires a stable, high-speed internet connection. | Works perfectly offline (offline recognition AI). |
| Privacy & Security | User’s voice data is sent to and processed in the cloud. | Voice data never leaves the user’s device. |
| Cost | Typically billed on a per-second or per-minute basis for cloud processing. | Often a one-time licensing fee per device, with no ongoing usage costs. |
| Model Power | Can use massive, powerful AI models. | Limited to smaller, highly-optimized models that can run on device hardware. |
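
The cost row lends itself to a quick break-even estimate: how many minutes of audio a device must process before a one-time license costs less than per-minute cloud billing. The function name and both prices below are hypothetical, picked only to show the arithmetic.

```python
def break_even_minutes(license_fee_per_device: float, cloud_price_per_minute: float) -> float:
    """Minutes of audio at which a one-time device license equals cumulative cloud billing."""
    if cloud_price_per_minute <= 0:
        raise ValueError("cloud_price_per_minute must be positive")
    return license_fee_per_device / cloud_price_per_minute


# Assumed prices for illustration only; real pricing varies by vendor and contract.
minutes = break_even_minutes(license_fee_per_device=5.00, cloud_price_per_minute=0.02)
print(f"Break-even after about {minutes:.0f} minutes of audio per device")
```

Devices expected to process more audio than the break-even figure over their lifetime favor the license model; lightly used devices may still be cheaper on per-minute billing.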

The Hybrid Approach: The Best of Both Worlds

The choice between cloud and on-device is not always binary. In fact, the most sophisticated AI voice architectures in 2025 are likely to be hybrid.

- The Scenario: A user might be interacting with a voice agent. For the simple, fast, back-and-forth parts of the conversation (“What’s your account number?”, “Is that correct?”), the application can use the lightning-fast on-device STT.

- The Handoff: But if the user then asks a complex, open-ended question that requires the power of a massive Large Language Model (LLM) to answer, the application can intelligently decide to “escalate” to the cloud for that specific part of the conversation.

A truly advanced voice recognition SDK will provide the tools to manage this hybrid workflow, giving the developer the power to choose the right tool for the right job, in real time.
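
The handoff decision can be sketched as a small routing function that inspects the locally produced transcript. The rule used here (short confirmations and digit strings stay local, everything else escalates) is an assumed heuristic for illustration; `Route`, `SIMPLE_REPLIES`, and `choose_route` are hypothetical names, and a production system would likely use a more robust intent classifier.

```python
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on-device"
    CLOUD = "cloud"


# Assumed set of replies the local agent can handle without an LLM.
SIMPLE_REPLIES = {"yes", "no", "correct", "that is correct", "repeat that"}


def choose_route(transcript: str) -> Route:
    """Decide whether a locally transcribed utterance needs cloud escalation."""
    normalized = transcript.lower().strip(" ?.!,")
    if normalized in SIMPLE_REPLIES:
        return Route.ON_DEVICE
    # A spoken account number arrives as digits, possibly separated by spaces.
    if normalized.replace(" ", "").isdigit():
        return Route.ON_DEVICE
    # Anything open-ended is escalated to the cloud LLM.
    return Route.CLOUD


for utterance in ["Yes.", "4 4 1 9 2", "Why was I charged twice last month?"]:
    print(f"{utterance!r} -> {choose_route(utterance).value}")
```

Defaulting to the cloud for anything unrecognized keeps the local path fast and predictable while avoiding wrong answers on queries the small model cannot handle.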

Ready to build voice experiences that are as fast and reliable as your users demand? Sign up for FreJun AI

What is FreJun AI’s Role in an On-Device World?

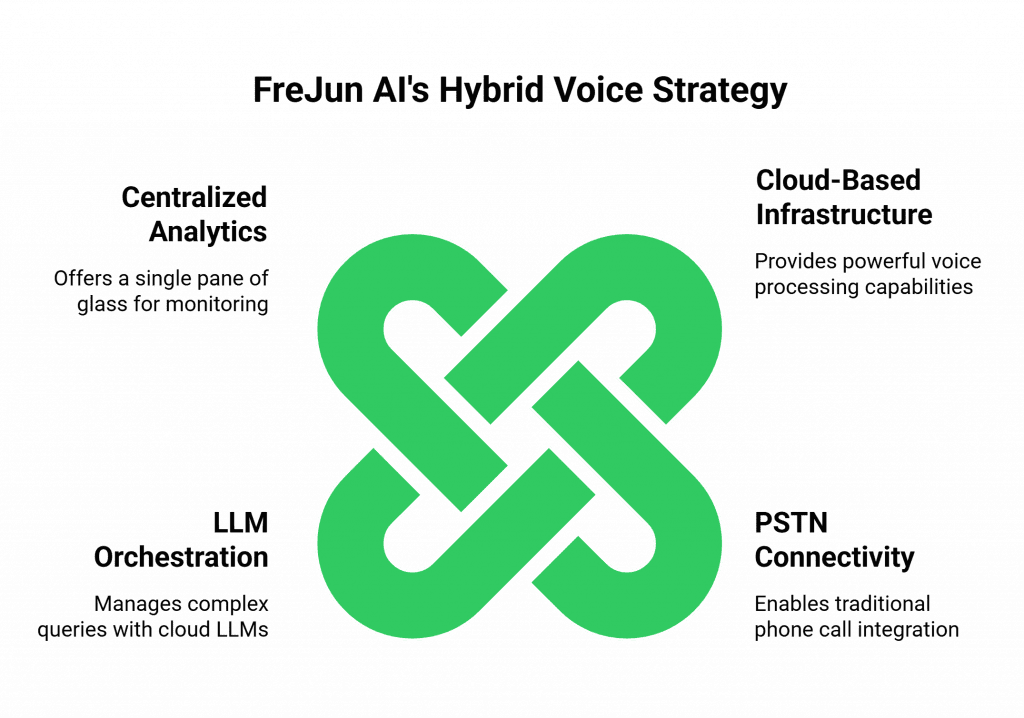

While FreJun AI’s core strength is its powerful, cloud-based voice infrastructure, we see the hybrid future as a core part of our mission. Our platform is designed to be the flexible “hub” that can orchestrate these complex, multi-layered voice experiences.

Even when the initial STT is happening on the device, a cloud connection is still essential for many parts of the enterprise voice stack:

- Connecting to the PSTN: If your voice agent needs to make or receive a traditional phone call, it still needs a bridge to the telephone network, a role that our Teler engine provides.

- Orchestrating the “Heavy Thinking”: When your on-device agent needs to hand off a complex query to a cloud-based LLM, our platform can provide the low-latency infrastructure to manage that high-speed data exchange.

- Centralized Analytics and Management: We provide the centralized platform for logging, analytics, and management, giving you a single pane of glass to monitor your entire fleet of voice-enabled devices.

Conclusion

The evolution of voice recognition is a story of a relentless march toward the user. We are moving the intelligence from the distant cloud to the powerful computer that each of us carries in our pocket. The on-device ready voice recognition SDK is the technology that is making this final step possible.

By providing an experience that is faster, more reliable, and fundamentally more private, on-device STT is not just an incremental improvement; it is a paradigm shift. It is the key to unlocking the next generation of truly seamless, instantaneous, and trustworthy voice-enabled applications.

Want to do a deep dive into the infrastructure required to power a modern, AI-powered voicebot? Schedule a demo with our team at FreJun Teler.

Frequently Asked Questions (FAQs)

An on-device ready voice recognition SDK is a specialized STT toolkit that runs AI models on the user’s device rather than in the cloud. This design enables voice recognition with extremely low latency, and it works without any internet connection.

The main advantages of on-device STT over cloud-based STT are speed and privacy. Because it removes the network round-trip to the cloud, it delivers a nearly instant response. Because voice data stays on the device, it also offers superior privacy.

Offline recognition AI is another term for on-device processing. It refers to the ability of the AI model to function and perform its task (like voice recognition) even when the device has no active internet connection.

A privacy-focused speech SDK, which often utilizes on-device processing, is critical for applications that handle sensitive information (like in healthcare or finance).

The main trade-off of on-device recognition is model size and complexity. The AI models must be small and highly optimized to run on the limited processing power of a mobile device.

A hybrid architecture combines the best of both worlds. It uses a fast, on-device STT for the simple, real-time parts of a conversation and then intelligently “escalates” to a more powerful, cloud-based Large Language Model (LLM) for the more complex, “heavy thinking” parts of the dialogue.

On-device recognition can support multiple languages, but the device must store the proper language packs. A good SDK offers lightweight language models for this. These models can ship with the application or download on demand when needed.
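
The two delivery options for language packs (bundled with the app, or downloaded on demand) can be sketched as a lookup that prefers a bundled model, then a cached download, and only then fetches. Everything here is hypothetical: the `.model` naming scheme, the directory layout, and `fake_download`, which writes a placeholder file instead of contacting a server.

```python
import tempfile
from pathlib import Path
from typing import Callable


def resolve_language_pack(
    lang: str,
    bundled_dir: Path,
    cache_dir: Path,
    download: Callable[[str, Path], None],
) -> Path:
    """Return the model path for `lang`: bundled first, then cached, then downloaded."""
    filename = f"{lang}.model"  # hypothetical naming scheme
    bundled = bundled_dir / filename
    if bundled.exists():
        return bundled
    cached = cache_dir / filename
    if not cached.exists():
        cache_dir.mkdir(parents=True, exist_ok=True)
        download(lang, cached)  # needs connectivity once; works offline afterwards
    return cached


def fake_download(lang: str, dest: Path) -> None:
    """Stand-in for a real download; writes a placeholder file."""
    dest.write_bytes(b"placeholder model for " + lang.encode())


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    bundled_dir, cache_dir = root / "bundled", root / "cache"
    bundled_dir.mkdir()
    (bundled_dir / "en.model").write_bytes(b"bundled")
    en_path = resolve_language_pack("en", bundled_dir, cache_dir, fake_download)
    hi_path = resolve_language_pack("hi", bundled_dir, cache_dir, fake_download)
    print(en_path.parent.name, hi_path.parent.name)
```

Bundling keeps the first run fully offline at the cost of a larger install, while on-demand packs keep the app small but require a one-time connection per language.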

Even with on-device recognition, many functions still require a cloud platform. The cloud connects to the PSTN. It also routes audio to cloud LLMs for complex queries. The cloud provides centralized analytics and management.