AI voice agents have moved from demos to real production systems. Over the last two years, large language models have improved reasoning, response quality, and tool usage. At the same time, real-time speech systems have become faster and more reliable. Because of this convergence, teams can now build voice agents that actually work in live phone calls.

A survey of senior leaders shows nearly 80% of enterprises are already adopting AI agents, signaling a concrete shift toward intelligent automation that includes voice agent workflows.

However, building an AI voice agent is not just about connecting a chatbot to a phone number. Instead, it requires careful system design, strict latency control, and clear ownership of conversation logic. Many early attempts fail because teams treat voice as a simple input and output problem.

Therefore, this guide focuses on how to integrate LLMs with an AI voice agent API in a production-ready way. It is written for founders, product managers, and engineering leads who want control over their stack. More importantly, it explains how to combine LLM voice integration, streaming speech systems, and backend services without locking into a single vendor.

Before discussing implementation details, we must first align on what an AI voice agent really is and how the conversational AI stack is structured.

What Is An AI Voice Agent, Really?

An AI voice agent is a real-time system that listens, understands, reasons, and responds using spoken language. Unlike IVR systems, it does not follow fixed trees. Instead, it adapts based on context, intent, and external data.

At a system level, an AI voice agent is composed of five core layers:

- Speech-to-Text (STT) for converting live audio into text

- A Large Language Model (LLM) for reasoning and response generation

- Retrieval or business logic for grounding responses

- Tool or function calling for taking actions

- Text-to-Speech (TTS) for converting responses back into audio

Because each layer has different performance needs, they must be loosely coupled. Otherwise, the system becomes slow, fragile, and hard to scale.

Voice Agent vs IVR vs Voice Bot

To clarify the difference, the table below shows how AI voice agents differ from traditional systems:

| System Type | Conversation Style | Context Awareness | Flexibility |
| --- | --- | --- | --- |
| IVR | Menu-based | None | Very low |
| Voice Bot | Intent-based | Limited | Medium |
| AI Voice Agent | Free-flowing | High | Very high |

As shown above, AI voice agents require far more coordination between components. Therefore, a clean architecture is essential before attempting an AI voice agent LLM setup.

What Does A Modern Conversational AI Stack Look Like?

A conversational AI stack defines how audio, text, reasoning, and actions move through the system. While tools may vary, the structure remains mostly the same.

Core Layers In The Conversational AI Stack

A modern conversational AI stack usually includes:

- Voice Transport Layer
  - Handles phone calls or VoIP
  - Streams raw audio in real time

- Audio Processing Layer
  - Performs streaming STT
  - Detects pauses and turn boundaries

- LLM Reasoning Layer
  - Interprets intent
  - Maintains conversational state
  - Generates responses

- Knowledge And Tool Layer
  - Retrieves domain data (RAG)
  - Calls APIs or internal services

- Speech Output Layer
  - Converts text to audio
  - Streams audio back to the caller

Because each layer operates at different speeds, coordination is critical. For example, audio must stream continuously, while LLM responses may arrive token by token.

Why Modularity Matters

If one provider controls all layers, you lose flexibility. On the other hand, a modular stack allows you to:

- Swap LLMs without changing telephony

- Improve STT accuracy without touching logic

- Optimize latency layer by layer

As a result, most production teams prefer a modular conversational AI stack.
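One way to picture the swap-without-rewrite benefit is an interface that the rest of the agent depends on. The sketch below is illustrative only: `LLM`, `EchoLLM`, `ShoutLLM`, and `VoiceAgent` are hypothetical names, and the two "providers" are stand-ins rather than real model clients.

```python
from typing import Protocol


class LLM(Protocol):
    """Minimal interface the agent relies on (hypothetical)."""

    def reply(self, prompt: str) -> str: ...


class EchoLLM:
    """Stand-in for one provider."""

    def reply(self, prompt: str) -> str:
        return f"You said: {prompt}"


class ShoutLLM:
    """Stand-in for a second provider exposing the same interface."""

    def reply(self, prompt: str) -> str:
        return prompt.upper()


class VoiceAgent:
    """Depends only on the LLM interface, not on a concrete provider."""

    def __init__(self, llm: LLM) -> None:
        self.llm = llm

    def handle_transcript(self, transcript: str) -> str:
        return self.llm.reply(transcript)


agent = VoiceAgent(EchoLLM())
first = agent.handle_transcript("hello")
agent.llm = ShoutLLM()  # swap the model; telephony and STT code are untouched
second = agent.handle_transcript("hello")
```

The same idea applies to STT and TTS: each layer sits behind a narrow interface, so replacing a provider is a one-line change in the wiring code.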

How Does An AI Voice Agent API Fit Into This Stack?

An AI voice agent API acts as the real-time bridge between phone calls and your backend logic. It does not provide intelligence by itself. Instead, it ensures that audio moves reliably and quickly between users and your systems.

Responsibilities Of An AI Voice Agent API

An AI voice agent API typically handles:

- Inbound and outbound call setup

- Real-time audio streaming

- Media session lifecycle

- Network reliability and retries

At the same time, it should not control:

- LLM selection

- Prompt design

- Conversation state

- Business rules

This separation is important. Otherwise, you cannot evolve your AI logic independently.

How Do You Integrate An LLM With A Voice Agent?

LLM voice integration is primarily about orchestration. You are not embedding an LLM into telephony. Instead, you are routing data between systems with strict timing guarantees.

High-Level Integration Flow

The most common flow looks like this:

- Caller speaks

- Audio is streamed to STT

- STT emits partial and final transcripts

- Transcripts are sent to the LLM

- LLM generates text (and tool calls if needed)

- Text is streamed to TTS

- Audio is streamed back to the caller

Although this flow seems simple, timing is critical at every step.
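The flow above can be sketched with async generators, so each stage hands data to the next as soon as it is available. Everything here is a placeholder: `fake_stt`, `fake_llm`, and `fake_tts` are hypothetical stand-ins for real providers, and the "audio" is just encoded text.

```python
import asyncio
from typing import AsyncIterator


async def fake_stt(audio_frames: list[bytes]) -> AsyncIterator[tuple[str, bool]]:
    """Yield (text, is_final) pairs; a real STT would decode the frames."""
    words = ["book", "a", "meeting"]
    for i, _frame in enumerate(audio_frames):
        partial = " ".join(words[: i + 1])
        yield partial, i == len(audio_frames) - 1
        await asyncio.sleep(0)


async def fake_llm(transcript: str) -> AsyncIterator[str]:
    """Yield response tokens one at a time, like a streaming LLM API."""
    for token in ["Sure,", " booking", " now."]:
        yield token
        await asyncio.sleep(0)


async def fake_tts(tokens: AsyncIterator[str]) -> AsyncIterator[bytes]:
    """Turn each token into an 'audio' chunk as soon as it arrives."""
    async for token in tokens:
        yield token.encode("utf-8")


async def handle_turn(audio_frames: list[bytes]) -> list[bytes]:
    final_text = ""
    async for text, is_final in fake_stt(audio_frames):
        if is_final:
            final_text = text  # only the final transcript goes to the LLM here
    audio_out = []
    async for chunk in fake_tts(fake_llm(final_text)):
        audio_out.append(chunk)  # a real agent would stream this to the caller
    return audio_out


audio_out = asyncio.run(handle_turn([b"\x00", b"\x00", b"\x00"]))
```

Note that the TTS stage consumes the LLM generator directly, so the first audio chunk exists before the LLM has finished its response.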

Key Latency Budget

| Component | Target Latency |
| --- | --- |
| STT partial results | < 300 ms |
| LLM response start | < 700 ms |
| TTS audio start | < 300 ms |

If any stage exceeds its budget, users perceive the system as slow or broken. Therefore, streaming is not optional.
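As a rough illustration, the budget can be encoded as data and checked against measured timings. The stage keys and the `over_budget` helper are hypothetical names; the millisecond targets come from the table above. If the three stages ran strictly one after another, the worst case before the caller hears audio would be the sum of the targets.

```python
# Targets from the latency budget table, in milliseconds.
BUDGET_MS = {"stt_partial": 300, "llm_first_token": 700, "tts_first_audio": 300}

# Upper bound on time-to-first-audio if the stages run back to back.
total_budget_ms = sum(BUDGET_MS.values())


def over_budget(measured_ms: dict[str, float]) -> list[str]:
    """Return the stages whose measured latency misses the '<' target."""
    return [
        stage
        for stage, limit in BUDGET_MS.items()
        if measured_ms.get(stage, 0.0) >= limit
    ]


slow_stages = over_budget(
    {"stt_partial": 250, "llm_first_token": 900, "tts_first_audio": 120}
)
```

Here only the LLM stage is flagged, which tells you where to look first instead of treating the whole call as "slow".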

Why Is Real-Time Streaming Critical For LLM Voice Integration?

Batch processing introduces silence. Silence breaks conversations. Because of this, real-time streaming is the foundation of any AI voice agent API.

Streaming Benefits

With streaming:

- STT emits partial text before speech ends

- LLM can start reasoning early

- TTS can speak while text is still being generated

As a result, the agent feels responsive even when full responses take time.
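One common way to let TTS speak while text is still being generated is to flush the LLM token stream at sentence boundaries. The sketch below assumes tokens arrive as plain strings; `sentences_from_tokens` is a hypothetical helper, and the punctuation rule is deliberately naive (it would split on a decimal point such as "3.5").

```python
from typing import Iterable, Iterator

SENTENCE_END = (".", "?", "!")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each sentence as soon as its closing punctuation arrives."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        yield buffer.strip()  # flush trailing text with no end punctuation


llm_tokens = ["Your", " order", " shipped", ".", " Anything", " else", "?"]
chunks = list(sentences_from_tokens(llm_tokens))
```

Each yielded sentence can be sent to TTS immediately, so the caller hears the first sentence while the model is still producing the second.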

Common Streaming Mistakes

Teams often fail by:

- Waiting for full transcripts

- Waiting for full LLM responses

- Playing audio only after completion

These mistakes increase perceived latency, even if actual processing is fast.

How Do You Manage Context In An AI Voice Agent LLM Setup?

Context management is one of the hardest problems in voice systems. Compared with chat, voice conversations tend to be longer and less structured.

Types Of Context

An AI voice agent usually manages:

- Short-term context
  - Current turn
  - Recent messages

- Session context
  - Call-level state
  - User intent

- External context
  - CRM data
  - Knowledge base results

Practical Context Strategy

A reliable strategy includes:

- Keeping only recent turns in the LLM prompt

- Using RAG for factual recall

- Storing full transcripts outside the prompt

This approach reduces cost while maintaining accuracy.
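A minimal sketch of that strategy, assuming a chat-style message list: a bounded window feeds the prompt, the full transcript is kept separately, and retrieved facts are injected per turn. `CallContext` and its method names are hypothetical, and the retrieved snippets are passed in rather than fetched from a real RAG pipeline.

```python
from collections import deque


class CallContext:
    """Per-call context: a short prompt window plus the full transcript."""

    def __init__(self, max_turns: int = 4) -> None:
        self.recent = deque(maxlen=max_turns)  # goes into the LLM prompt
        self.full_transcript = []              # stored outside the prompt

    def add_turn(self, role: str, text: str) -> None:
        turn = {"role": role, "content": text}
        self.recent.append(turn)               # oldest turn drops off when full
        self.full_transcript.append(turn)

    def build_messages(self, system_prompt: str, retrieved: list[str]) -> list[dict]:
        facts = "\n".join(retrieved)
        system = {"role": "system", "content": f"{system_prompt}\n\nFacts:\n{facts}"}
        return [system, *self.recent]


ctx = CallContext(max_turns=4)
for i in range(6):
    ctx.add_turn("user", f"turn {i}")
messages = ctx.build_messages("Be brief.", ["Plan: Pro"])
```

The prompt size stays bounded no matter how long the call runs, while the complete transcript remains available for logging or later summarization.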

Where Does FreJun Teler Fit In An AI Voice Agent Architecture?

Up to this point, we have discussed AI voice agents as a system of loosely coupled components. However, one question remains central for implementation teams: where does the voice infrastructure actually live?

This is where FreJun Teler fits into the conversational AI stack.

FreJun Teler’s Technical Role

FreJun Teler functions as the real-time voice and telephony infrastructure layer. Instead of bundling AI logic or enforcing a fixed agent model, it focuses on doing one thing well: reliable, low-latency voice transport.

Specifically, Teler handles:

- Inbound and outbound phone calls

- SIP and cloud telephony connectivity

- Real-time bidirectional audio streaming

- Call lifecycle events (connect, disconnect, errors)

At the same time, your application retains full control over:

- LLM selection and prompts

- STT and TTS providers

- Conversation state and logic

- RAG pipelines and tool execution

Because of this separation, Teler fits naturally into any LLM voice integration strategy.

Why This Separation Matters

If voice infrastructure and AI logic are tightly coupled, teams face limitations later. For example, switching LLMs or upgrading STT accuracy becomes risky. By contrast, using Teler as a transport layer allows teams to evolve their AI voice agent LLM setup without touching telephony.

As a result, architecture remains flexible even as product requirements change.

How Do You Implement Teler With Any LLM, STT, And TTS?

Now that the role of Teler is clear, we can walk through a practical implementation flow. Although individual APIs differ, the core pattern remains consistent.

Step-By-Step Integration Flow

The typical flow looks like this:

- Call Initialization
  - An inbound or outbound call is established via Teler
  - A unique session ID is created

- Audio Streaming Begins
  - Caller audio is streamed in real time to your backend
  - Audio frames are forwarded to your STT provider

- Speech-To-Text Processing
  - Partial transcripts are emitted continuously
  - Final transcripts are marked on turn completion

- LLM Orchestration
  - Transcripts are sent to the LLM
  - RAG queries or tool calls are triggered if required

- Text-To-Speech Generation
  - LLM output is streamed to the TTS engine
  - Audio chunks are generated incrementally

- Audio Playback
  - TTS audio is streamed back through Teler to the caller

Because every step streams, no stage has to wait for the previous one to finish completely, which keeps the system responsive.
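On the backend side, the session handling in this flow can be sketched as a small registry keyed by session ID. The event dictionaries below are a hypothetical shape chosen for illustration; they are not Teler's actual event format, so consult the Teler documentation for the real payloads.

```python
from dataclasses import dataclass, field


@dataclass
class CallSession:
    """Per-call state owned by your application, not by the voice API."""

    session_id: str
    frames_received: int = 0
    transcript: list[str] = field(default_factory=list)


class SessionRegistry:
    def __init__(self) -> None:
        self.active: dict[str, CallSession] = {}

    def handle_event(self, event: dict) -> None:
        """Route one transport event; the event shape is hypothetical."""
        sid = event["session_id"]
        kind = event["type"]
        if kind == "connect":
            self.active[sid] = CallSession(sid)
        elif kind == "audio":
            self.active[sid].frames_received += 1  # would forward to STT here
        elif kind in ("disconnect", "error"):
            self.active.pop(sid, None)  # always release per-call state


registry = SessionRegistry()
registry.handle_event({"type": "connect", "session_id": "call-1"})
registry.handle_event({"type": "audio", "session_id": "call-1"})
registry.handle_event({"type": "audio", "session_id": "call-1"})
frames_so_far = registry.active["call-1"].frames_received
registry.handle_event({"type": "disconnect", "session_id": "call-1"})
```

Treating errors like disconnects means a failed call cannot leave stale state behind.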

How Should Engineers Handle Turn-Taking And Interruptions?

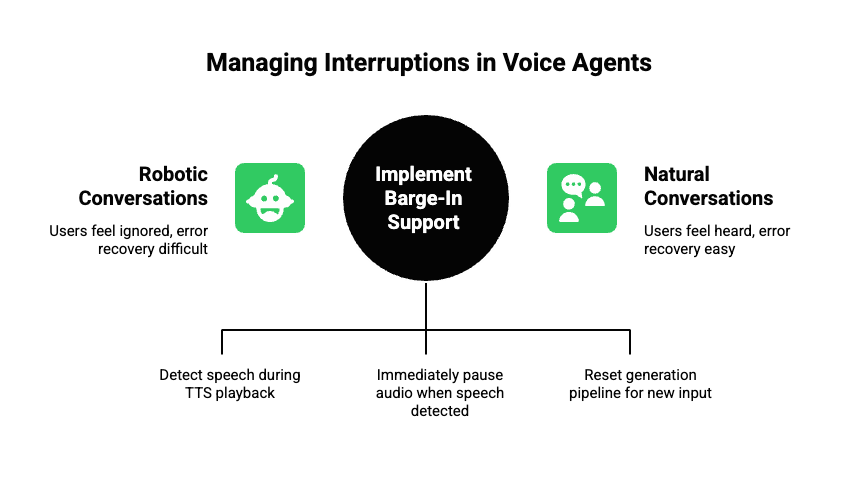

One of the most complex aspects of voice agents is managing interruptions. Unlike chat, users often speak while the agent is talking.

Why Barge-In Support Is Essential

Without interruption handling:

- Users feel ignored

- Conversations feel robotic

- Error recovery becomes difficult

Therefore, production systems must detect speech activity during playback.

Common Technical Approach

Most teams implement:

- Voice activity detection (VAD) on inbound audio

- Immediate pause of TTS playback when speech is detected

- Reset of the LLM generation pipeline

This requires tight coordination between the voice API, STT, and TTS layers. Because Teler streams audio continuously, it enables this control without hacks.
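The three steps can be sketched with `asyncio`: playback runs as a task, inbound frames are checked for speech, and the task is cancelled when the caller talks. This is a simplified illustration under stated assumptions: audio is 16-bit little-endian mono PCM, `is_speech` is a crude energy threshold rather than a production VAD, and `play_tts` only records which chunks were "played".

```python
import asyncio
import struct


def is_speech(frame: bytes, threshold: float = 500.0) -> bool:
    """Crude energy VAD on 16-bit little-endian mono PCM (assumed format)."""
    count = len(frame) // 2
    if count == 0:
        return False
    samples = struct.unpack(f"<{count}h", frame[: count * 2])
    mean_abs = sum(abs(s) for s in samples) / count
    return mean_abs > threshold


async def play_tts(chunks: list[str], played: list[str]) -> None:
    for chunk in chunks:
        played.append(chunk)       # stand-in for sending audio to the caller
        await asyncio.sleep(0.02)  # pretend each chunk is 20 ms of audio


async def run_with_barge_in(inbound: list[bytes]) -> list[str]:
    played: list[str] = []
    chunks = ["one", "two", "three", "four", "five"]
    playback = asyncio.create_task(play_tts(chunks, played))
    for frame in inbound:
        await asyncio.sleep(0.005)  # inbound frames arrive during playback
        if is_speech(frame):
            playback.cancel()       # stop speaking as soon as the caller talks
            break
    try:
        await playback
    except asyncio.CancelledError:
        pass                        # expected when barge-in cancelled playback
    return played


silence = struct.pack("<160h", *([0] * 160))
loud = struct.pack("<160h", *([3000] * 160))
played = asyncio.run(run_with_barge_in([silence, loud]))
```

The same cancellation pattern applies to the in-flight LLM generation: run it as a task and cancel it alongside playback so stale text never reaches TTS.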

How Do You Connect AI Voice Agents To Business Systems?

At this stage, the agent can talk. However, talking alone is not useful. Voice agents must act.

Tool Calling In Practice

LLMs can invoke tools to:

- Create support tickets

- Book meetings

- Fetch account data

- Trigger workflows

A typical pattern is:

- LLM emits a structured tool call

- Backend executes the action

- Result is summarized and sent back to the LLM

- LLM responds to the user via voice
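The backend half of this pattern can be sketched as a registry that maps tool names to functions. The JSON shape (`name` plus `arguments`) is an assumption for illustration, since each LLM provider defines its own tool-call format, and `create_ticket` is a hypothetical action that returns canned data.

```python
import json
from typing import Callable


def create_ticket(subject: str) -> dict:
    """Hypothetical backend action; a real one would call a ticketing API."""
    return {"ticket_id": "T-1", "subject": subject}


# Only tools listed here can be executed, whatever the model asks for.
TOOLS: dict[str, Callable[..., dict]] = {"create_ticket": create_ticket}


def execute_tool_call(raw_call: str) -> dict:
    """Parse a structured tool call and run it, returning a result for the LLM."""
    try:
        call = json.loads(raw_call)
        name, args = call["name"], call.get("arguments", {})
    except (json.JSONDecodeError, KeyError, TypeError, AttributeError):
        return {"ok": False, "error": "malformed tool call"}
    handler = TOOLS.get(name)
    if handler is None:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": handler(**args)}
    except TypeError as exc:  # wrong or missing arguments
        return {"ok": False, "error": str(exc)}


result = execute_tool_call(
    '{"name": "create_ticket", "arguments": {"subject": "Refund"}}'
)
```

Returning errors as data, rather than raising, lets the LLM explain the failure to the caller instead of the call going silent.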

RAG And Tooling Comparison

| Capability | RAG | Tool Calling |
| --- | --- | --- |
| Purpose | Knowledge lookup | Action execution |
| Latency | Medium | Variable |
| Output | Text context | Structured result |
| Usage | FAQs, docs | CRM, calendars |

In practice, most AI voice agents use both. RAG handles knowledge, while tools handle actions.

How Do You Scale AI Voice Agents In Production?

Once an agent works for one call, the next challenge is scale. This is where many systems fail.

Key Scaling Challenges

- Handling hundreds of concurrent calls

- Keeping latency consistent

- Managing LLM cost

- Observing failures in real time

Because voice is real time, delays compound quickly.

Recommended Scaling Practices

- Use stateless backend services where possible

- Store session state in fast external stores

- Limit LLM context length aggressively

- Cache RAG results when safe

The table below summarizes scaling focus areas:

| Layer | Scaling Focus |
| --- | --- |
| Voice API | Concurrent call handling |
| STT | Streaming throughput |
| LLM | Token and request limits |
| TTS | Audio generation speed |

Since Teler manages telephony and media transport, your team can focus on scaling AI logic independently.
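As one example of the practices above, caching RAG results can be sketched as a small time-to-live cache. `TTLCache` and `fetch_docs` are hypothetical names, and the clock is injectable only so the expiry behaviour is easy to demonstrate. In a multi-instance deployment the same idea would typically live in a shared external store rather than in process memory.

```python
import time
from typing import Callable


class TTLCache:
    """Cache retrieval results per query for a limited time."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._items: dict[str, tuple[float, list[str]]] = {}

    def get_or_fetch(self, query: str,
                     fetch: Callable[[str], list[str]]) -> list[str]:
        now = self.clock()
        hit = self._items.get(query)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]            # fresh enough: skip the retrieval call
        docs = fetch(query)
        self._items[query] = (now, docs)
        return docs


fake_now = [0.0]                     # controllable clock for the demo
fetch_calls: list[str] = []


def fetch_docs(query: str) -> list[str]:
    fetch_calls.append(query)        # stand-in for a vector-store lookup
    return [f"doc for {query}"]


cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])
cache.get_or_fetch("refund policy", fetch_docs)
cache.get_or_fetch("refund policy", fetch_docs)   # served from cache
fake_now[0] = 61.0
docs = cache.get_or_fetch("refund policy", fetch_docs)  # expired, fetched again
```

The "when safe" caveat matters: this suits shared knowledge such as policies or FAQs, not results that depend on the individual caller.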

How Do You Monitor And Debug AI Voice Agents?

Observability is often overlooked early. However, without it, diagnosing issues becomes nearly impossible.

What To Monitor

Effective monitoring includes:

- Call connection success rate

- End-to-end latency

- STT error rates

- LLM response times

- TTS generation delays

Logging Strategy

Instead of logging raw audio, most teams log:

- Transcripts

- LLM inputs and outputs

- Tool invocation results

- Timing metrics

This approach protects privacy while enabling debugging.
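A minimal sketch of such a log record, assuming JSON lines as the log format: a context manager captures per-stage timings, and the record carries text and metrics only. `timed` and `build_log_record` are hypothetical helpers, and the LLM call is replaced by a constant string.

```python
import json
import time
from contextlib import contextmanager


@contextmanager
def timed(metrics: dict, stage: str):
    """Record how long the wrapped block took, in milliseconds."""
    start = time.perf_counter()
    try:
        yield
    finally:
        metrics[stage] = round((time.perf_counter() - start) * 1000, 1)


def build_log_record(session_id: str, transcript: str,
                     llm_output: str, metrics: dict) -> str:
    """Serialize one turn as JSON text; no raw audio is included."""
    return json.dumps({
        "session_id": session_id,
        "transcript": transcript,
        "llm_output": llm_output,
        "timings_ms": metrics,
    })


metrics: dict = {}
with timed(metrics, "llm"):
    reply = "Your ticket is open."   # stand-in for the real LLM call
record = build_log_record("call-123", "open a ticket", reply, metrics)
```

Keying every record by session ID makes it possible to reconstruct a single call end to end when debugging.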

What Are Common Mistakes Teams Make With AI Voice Agents?

Even strong teams repeat the same mistakes.

Frequent Pitfalls

- Treating voice as batch input/output

- Overloading LLM prompts with history

- Ignoring interruptions

- Hard-coding business logic into prompts

- Locking into monolithic platforms

Avoiding these issues early saves months of rework.

How Should Founders And Product Leaders Think About Voice Agents?

For founders and PMs, technical decisions have long-term impact.

Strategic Guidelines

- Choose infrastructure that preserves flexibility

- Separate voice transport from AI intelligence

- Start simple, then iterate

- Measure real user latency, not just benchmarks

When done correctly, AI voice agents become a platform capability rather than a single feature.

Conclusion

As voice becomes the preferred interface for human-computer interaction, integrating large language models with real-time speech systems is no longer optional – it is a strategic imperative. The rapid expansion of the voice AI agents market, along with pervasive user demand and enterprise adoption, reflects a broader shift toward natural and responsive voice automation. By decoupling voice transport from AI logic and adopting modular architectures, engineering teams can build systems that are both powerful and flexible.

Partnering with robust infrastructure platforms such as Teler reduces operational complexity, enabling you to focus on optimizing the LLM voice integration and delivering exceptional conversational experiences at scale.

Explore Teler with a personalized demo today.

FAQs

- What is an AI voice agent?

A real-time system that converts speech to text, reasons via an LLM, and responds with synthesized speech, using context and actions.

- Why use an AI voice agent API?

To handle call transport, real-time audio, and event management while your AI logic stays flexible and independent.

- What does LLM voice integration require?

Streaming STT input, prompt orchestration, token streaming, RAG and tool support, and low-latency TTS output.

- How do you handle interruptions?

Use voice activity detection and pause or resume audio streams to support barge-in without losing context.

- Why does modular architecture matter?

It keeps components independent, preserves future flexibility, and makes upgrades easier without lock-in.

- What is retrieval-augmented generation (RAG)?

A technique that uses external knowledge stores to make LLM responses more accurate and factual.

- How do you scale voice agents?

Scale backend services horizontally, keep session state in external stores, cache RAG results, and limit LLM context length.

- What are common voice agent challenges?

Latency, cost, interruptions, context drift, and monitoring gaps.

- Do AI voice agents reduce costs?

They can, by automating support and lowering the need for human intervention across high-volume interactions.

- How does Teler help?

It provides resilient, low-latency voice transport so teams can focus on intelligent AI logic.