Building intelligent voice automation once required deep pockets and years of telecom expertise, but that reality has shifted. Open-source AI models like Llama 2 now give every business access to advanced conversational intelligence, while FreJun provides the reliable, low-latency infrastructure to carry it into real-world calls.

Together, they remove the biggest barriers to deploying voice bots at scale. This guide shows how to combine Llama 2 and FreJun to create production-ready AI voice automation, fast.

Table of contents

- The Open-Source AI Revolution in Call Automation

- The Hidden Hurdle: Why Your Voice Bot Project Will Stall

- The Modern Architecture: A Smart Brain on a High-Speed Network

- How Does a Llama 2 Voice Bot Work? An Inside Look

- Building and Deploying a Production-Ready Llama 2 Voice Bot

- The Infrastructure Choice: DIY Telephony vs. FreJun’s Voice Platform

- Transforming Industries with Voice Automation

- Final Thoughts: Move Faster and Build Smarter

- Frequently Asked Questions (FAQ)

The Open-Source AI Revolution in Call Automation

For years, building intelligent voice automation was the exclusive domain of large enterprises with massive budgets. Today, the game has changed. The release of powerful, open-source models like Meta’s Llama 2 has democratized access to cutting-edge AI, allowing businesses of all sizes to develop their own sophisticated conversational agents.

A Llama 2 voice bot is an AI-powered system that pairs Llama 2 with speech recognition and speech synthesis, so it can understand natural human speech, process complex queries, and respond in a human-like voice. It’s a game-changer for automating customer support, scheduling appointments, and streamlining countless other business workflows. This technology gives you the power to build a completely custom, highly intelligent communication tool.

However, the AI model is only one piece of the puzzle. The real challenge, and the key to a successful deployment, lies in the infrastructure that connects your AI to your customers.


The Hidden Hurdle: Why Your Voice Bot Project Will Stall

Many talented development teams embark on building a Llama 2 voice bot with great enthusiasm. They focus on fine-tuning the model, designing the perfect conversational flow, and integrating it with their business logic. They create a brilliant AI “brain.” But when they try to connect this brain to the public telephone network, the project grinds to a halt.

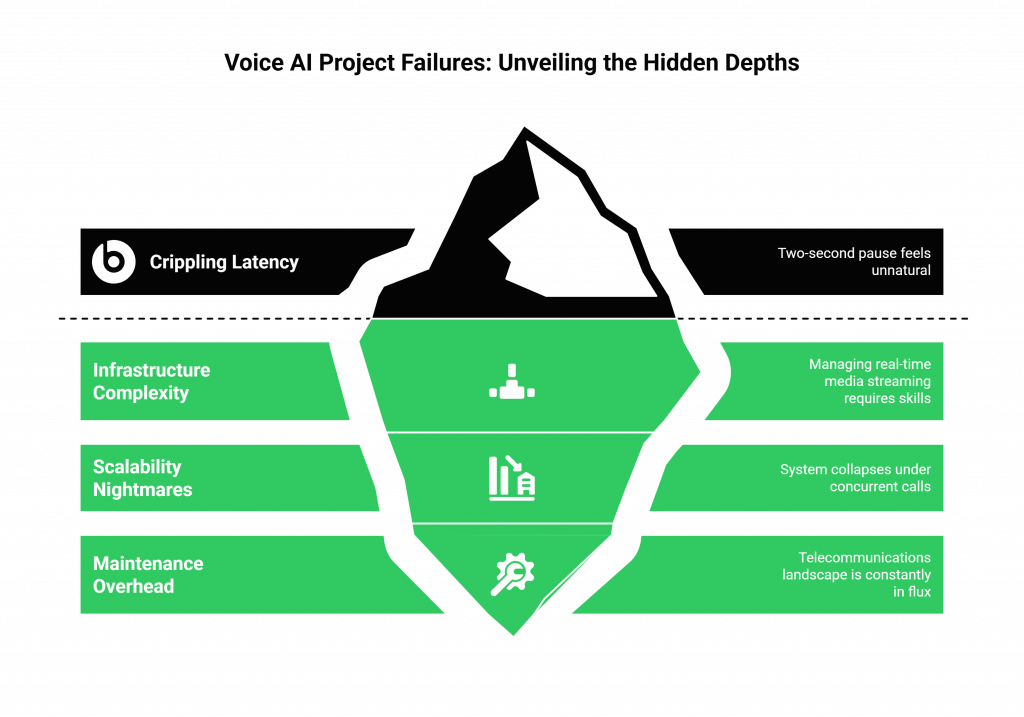

This is the hidden hurdle of voice AI: telephony is incredibly complex. Attempting to build the voice infrastructure from scratch introduces a host of problems that can kill your project:

- Crippling Latency: The delay between a customer speaking and the bot responding is the most common point of failure. Even a two-second pause feels unnatural and frustrating, leading to a poor customer experience and abandoned calls.

- Infrastructure Complexity: Managing real-time media streaming, SIP trunks, carrier negotiations, and call routing requires a deep, specialized skill set that is entirely different from AI development. It’s a massive distraction from your core goal.

- Scalability Nightmares: A system that works for a single test call will collapse under the weight of hundreds or thousands of concurrent calls. Building a resilient, secure, and globally scalable voice network is a monumental engineering effort.

- Maintenance Overhead: The telecommunications landscape is constantly in flux. A DIY system requires constant monitoring, updates, and troubleshooting, consuming valuable engineering resources that could be spent improving your AI.

The Modern Architecture: A Smart Brain on a High-Speed Network

To avoid these pitfalls, the most successful voice AI projects adopt a modern, decoupled architecture. This approach separates the AI’s intelligence from the voice transport layer, allowing you to use best-in-class solutions for both.

- The AI Brain (Your Application): This is the intelligent core that you build and control. It consists of three essential services working in unison:

  - Automatic Speech Recognition (ASR): Converts the caller’s raw audio into text.

  - Natural Language Processing (Llama 2): Your Llama 2 model processes the text to understand intent, manage context, and generate a response.

  - Text-to-Speech (TTS): Converts the AI’s text response back into a natural, audible voice.

- The Voice Nervous System (FreJun’s Platform): This is the robust, low-latency infrastructure that connects your AI Brain to the customer. FreJun handles all the complex real-time communication, providing:

  - Real-Time Media Streaming API: A purpose-built pipeline to carry audio between the phone call and your application with minimal delay.

  - Global Telephony Network: Manages all the underlying complexity of making and receiving calls worldwide.

  - Developer-First SDKs: Simple, powerful tools that let your developers focus on AI logic, not telecom protocols.
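To make the decoupling concrete, here is a minimal Python sketch of the AI Brain as three swappable interfaces. The names `SpeechToText`, `LanguageModel`, `TextToSpeech`, and `VoiceBrain` are hypothetical, and the stub classes stand in for real ASR, Llama 2, and TTS services. Nothing here is FreJun’s SDK; the telephony layer would sit outside this class and carry audio in and out of it.

```python
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def reply(self, user_text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


class VoiceBrain:
    """Hypothetical AI Brain: owns the ASR -> LLM -> TTS chain and knows nothing about telephony."""

    def __init__(self, asr: SpeechToText, llm: LanguageModel, tts: TextToSpeech) -> None:
        self.asr = asr
        self.llm = llm
        self.tts = tts

    def handle_utterance(self, audio: bytes) -> bytes:
        text = self.asr.transcribe(audio)   # caller audio -> text
        answer = self.llm.reply(text)       # text -> Llama 2 response
        return self.tts.synthesize(answer)  # response -> audio for playback


# Stub implementations so the sketch runs without any vendor SDK.
class FakeASR:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class EchoLlama:
    def reply(self, user_text: str) -> str:
        return f"You said: {user_text}"


class FakeTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


brain = VoiceBrain(FakeASR(), EchoLlama(), FakeTTS())
print(brain.handle_utterance(b"check my order"))  # b'You said: check my order'
```

Because `VoiceBrain` depends only on the three interfaces, switching ASR or TTS vendors, or moving Llama 2 to different hardware, means passing a different object to its constructor; the telephony side is unaffected.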


How Does a Llama 2 Voice Bot Work? An Inside Look

From the moment a call connects, a rapid, seamless cycle of actions takes place to create a fluid conversation.

- Audio Capture: A customer makes or receives a call. FreJun’s platform establishes the connection and begins capturing the raw audio of the customer’s voice.

- Real-Time Streaming and ASR: FreJun streams this audio in real time to your application. Your chosen ASR engine (like Google Speech-to-Text) transcribes the audio into text.

- NLP with Llama 2: The transcribed text is sent to your Llama 2 model. The AI processes the language to understand the user’s intent, extract key information, and determine the next step in the conversation.

- Response Generation and TTS: Based on the AI’s decision, your application generates a text response. This text is then sent to a TTS service (like Amazon Polly), which converts it into a human-like voice.

- Instant Playback: Your application streams the synthesized audio back to the FreJun API, which plays it to the customer instantly, completing the conversational loop without any awkward pauses.

This entire process happens in a fraction of a second, creating an experience that feels natural and efficient.
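Whether a turn really fits in “a fraction of a second” is worth measuring rather than assuming. The sketch below times each stage of one ASR → Llama 2 → TTS turn and checks the total against a budget. It rests on several assumptions: the stage functions are stubs passed in as callables, the 800 ms budget is an illustrative number rather than a FreJun or industry figure, and the timings exclude network time between the caller, FreJun, and your application.

```python
import time
from typing import Callable


def run_turn(
    audio: bytes,
    asr: Callable[[bytes], str],
    llm: Callable[[str], str],
    tts: Callable[[str], bytes],
) -> tuple[bytes, dict[str, float]]:
    """Run one ASR -> Llama 2 -> TTS turn and record each stage's duration in milliseconds."""
    timings: dict[str, float] = {}

    start = time.perf_counter()
    text = asr(audio)
    timings["asr_ms"] = (time.perf_counter() - start) * 1000

    start = time.perf_counter()
    reply = llm(text)
    timings["llm_ms"] = (time.perf_counter() - start) * 1000

    start = time.perf_counter()
    speech = tts(reply)
    timings["tts_ms"] = (time.perf_counter() - start) * 1000

    timings["total_ms"] = timings["asr_ms"] + timings["llm_ms"] + timings["tts_ms"]
    return speech, timings


def within_budget(timings: dict[str, float], budget_ms: float) -> bool:
    """True if the turn's processing time fits the chosen latency budget."""
    return timings["total_ms"] <= budget_ms


# Stub stages; replace them with real ASR, Llama 2, and TTS calls to get meaningful numbers.
speech, timings = run_turn(
    b"where is my order",
    asr=lambda audio: audio.decode("utf-8"),
    llm=lambda text: "Your order shipped yesterday.",
    tts=lambda text: text.encode("utf-8"),
)
print({stage: round(ms, 2) for stage, ms in timings.items()})
print("within budget:", within_budget(timings, budget_ms=800))  # 800 ms is an illustrative target
```

Logging these per-stage numbers on real calls shows which component to optimize first when conversations start to feel slow.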

Building and Deploying a Production-Ready Llama 2 Voice Bot

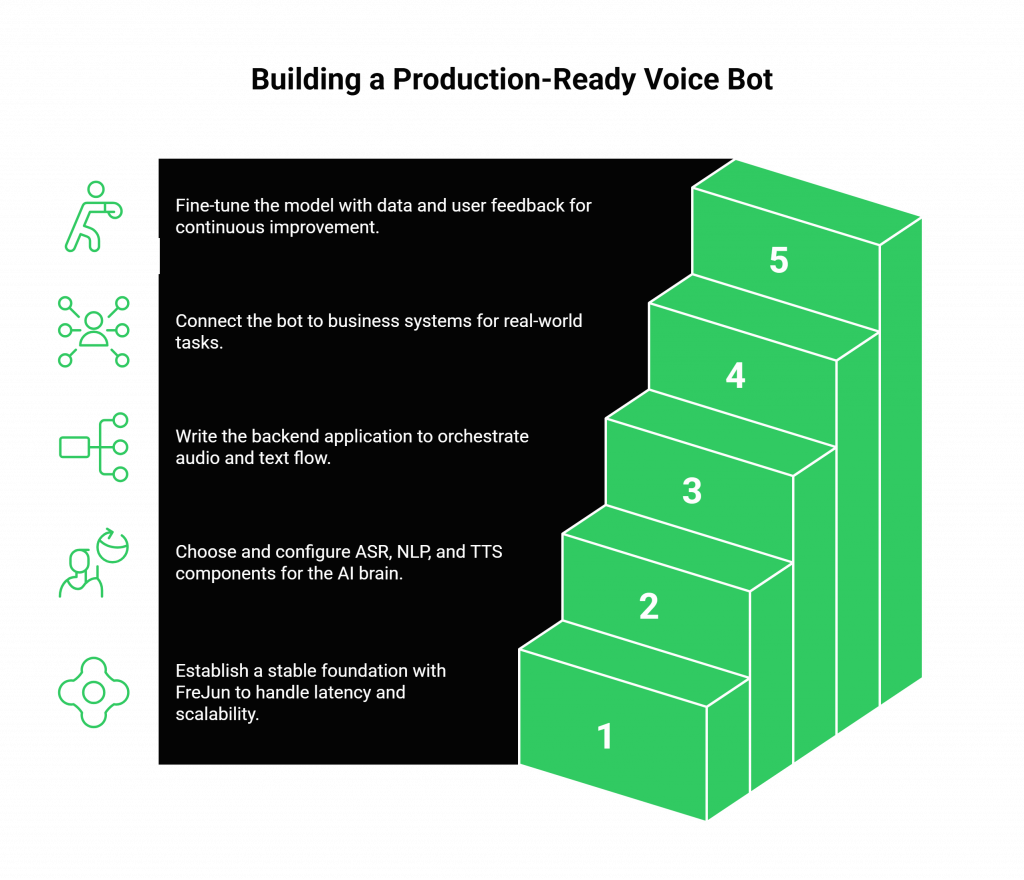

This tutorial provides a strategic roadmap for taking your voice bot from concept to a live, scalable application.

Step 1: Solve the Infrastructure Problem First

This is the most important step. Before you write a single line of AI code, establish your voice foundation. By choosing a telephony API provider like FreJun, you hand off the hardest infrastructure problems (latency, scalability, and reliability) to a platform built to handle them. Integrating with our platform gives you a stable, production-ready environment from day one, allowing you to focus exclusively on building your AI.

Step 2: Assemble Your AI Stack

Choose the other components for your AI Brain.

- ASR Engine: Select a service like Google Speech-to-Text or a fine-tuned open-source model like Whisper.

- NLP Engine: Set up and configure your Llama 2 model, including which size to run (7B, 13B, or 70B parameters) and where to host it.

- TTS Service: Choose a provider like Amazon Polly or Azure TTS for high-quality, natural-sounding voices.
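Llama 2’s chat models expect conversations in a specific prompt template: `[INST]`/`[/INST]` around each user turn, with an optional `<<SYS>>` block folded into the first one. The following is a minimal sketch of that template for a phone conversation, assuming you call a Llama 2 chat model with a raw prompt string; `build_llama2_prompt` is a hypothetical helper name. If your runtime’s tokenizer already inserts the BOS/EOS tokens, or applies a chat template for you, drop the literal `<s>`/`</s>` markers.

```python
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def build_llama2_prompt(system: str, history: list[tuple[str, str]], user_msg: str) -> str:
    """Format a conversation in the Llama 2 chat template.

    history holds completed (user, assistant) turns; user_msg is the caller's latest
    transcribed utterance. The system prompt is folded into the first user turn.
    """
    turns = history + [(user_msg, "")]  # new list, so the caller's history is not mutated
    first_user, first_answer = turns[0]
    turns[0] = (B_SYS + system.strip() + E_SYS + first_user.strip(), first_answer)

    prompt = ""
    for user, answer in turns[:-1]:  # completed turns include the model's answer
        prompt += f"<s>{B_INST} {user.strip()} {E_INST} {answer.strip()} </s>"
    prompt += f"<s>{B_INST} {turns[-1][0].strip()} {E_INST}"  # open turn for the model to complete
    return prompt


prompt = build_llama2_prompt(
    system="You are a concise, friendly phone agent for an online store.",
    history=[("Hi there", "Hello! How can I help you today?")],
    user_msg="Where is my order?",
)
print(prompt)
```

Short system prompts that ask for brief, spoken-style answers also help on the phone, since every extra sentence adds TTS and playback time.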

Step 3: Develop Your Core Application Logic

Write the backend application that will act as the central orchestrator. This code will use FreJun’s SDKs to manage the call and will be responsible for routing the audio and text between your ASR, Llama 2, and TTS services.
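One way to picture the orchestrator is as a loop between an inbound audio queue and an outbound one. In this minimal asyncio sketch, the queues stand in for the media stream your telephony provider delivers (FreJun’s actual streaming interface is not modeled here). An empty chunk is a hypothetical end-of-utterance marker that a real system would get from voice activity detection or ASR endpointing, and `fake_pipeline` stands in for the ASR → Llama 2 → TTS chain.

```python
import asyncio
from typing import Awaitable, Callable, Optional

END_OF_UTTERANCE = b""  # hypothetical marker; real systems use VAD or ASR endpointing


async def orchestrate(
    inbound: "asyncio.Queue[Optional[bytes]]",
    outbound: "asyncio.Queue[bytes]",
    handle_utterance: Callable[[bytes], Awaitable[bytes]],
) -> None:
    """Buffer caller audio until an utterance ends, then queue the bot's reply audio."""
    buffer = bytearray()
    while True:
        chunk = await inbound.get()
        if chunk is None:  # call ended
            break
        if chunk == END_OF_UTTERANCE:
            if buffer:
                reply_audio = await handle_utterance(bytes(buffer))
                await outbound.put(reply_audio)
                buffer.clear()
            continue
        buffer.extend(chunk)


async def demo() -> list[bytes]:
    inbound: asyncio.Queue = asyncio.Queue()
    outbound: asyncio.Queue = asyncio.Queue()

    async def fake_pipeline(audio: bytes) -> bytes:
        # Stand-in for ASR -> Llama 2 -> TTS
        return b"reply to " + audio

    for chunk in [b"where is ", b"my order", END_OF_UTTERANCE, None]:
        inbound.put_nowait(chunk)
    await orchestrate(inbound, outbound, fake_pipeline)
    return [outbound.get_nowait() for _ in range(outbound.qsize())]


print(asyncio.run(demo()))  # [b'reply to where is my order']
```

This version waits for a complete utterance before replying; a production orchestrator would typically also stream partial ASR results and stop playback when the caller interrupts.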

Step 4: Integrate with Business Systems

To be truly useful, your Llama 2 voice bot needs access to your business data. Use APIs to connect your application to your CRM, databases, and other backend systems. This allows the bot to perform real-world tasks like fetching an order status, checking an account balance, or scheduling an appointment.
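A common pattern for this step, though not the only one, is to have Llama 2 reply with a small JSON action that your application validates and routes to a business function. The sketch below assumes that pattern; the `ORDERS` dictionary, the handler names, and the `{"intent": ..., "args": ...}` shape are all hypothetical stand-ins for your CRM and your own prompt design.

```python
import json
import re
from typing import Callable

# Hypothetical stand-in for a CRM or order database.
ORDERS = {"A1001": "shipped", "A1002": "processing"}


def order_status(order_id: str) -> str:
    status = ORDERS.get(order_id)
    return f"Order {order_id} is {status}." if status else f"I couldn't find order {order_id}."


def book_appointment(date: str) -> str:
    return f"You're booked for {date}."


HANDLERS: dict[str, Callable[..., str]] = {
    "order_status": order_status,
    "book_appointment": book_appointment,
}


def dispatch(model_output: str) -> str:
    """Parse a JSON action from the model's reply and call the matching business handler."""
    match = re.search(r"\{.*\}", model_output, re.DOTALL)  # greedy: outermost braces
    if match is None:
        return "Sorry, could you rephrase that?"
    try:
        action = json.loads(match.group(0))
    except json.JSONDecodeError:
        return "Sorry, could you rephrase that?"
    intent = action.get("intent")
    handler = HANDLERS.get(intent) if isinstance(intent, str) else None
    if handler is None:
        return "Let me transfer you to a colleague."
    args = action.get("args", {})
    if not isinstance(args, dict):
        return "Sorry, I need a bit more information."
    try:
        return handler(**args)
    except TypeError:  # the model supplied missing or unexpected arguments
        return "Sorry, I need a bit more information."


print(dispatch('Sure. {"intent": "order_status", "args": {"order_id": "A1001"}}'))  # Order A1001 is shipped.
```

Validating the model’s output in code, rather than trusting it, keeps a malformed or hallucinated action from reaching your backend systems.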

Step 5: Train, Test, and Iterate

Fine-tune your Llama 2 model with domain-specific data to improve its accuracy. Start with a pilot program to test the bot with a small group of real users. Use the call analytics from FreJun and the conversation logs from your application to identify areas for improvement, then continuously refine your AI and conversational flows.
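Conversation logs become more useful once they are scored. As a minimal sketch, assuming you have reviewed a sample of pilot calls and labeled the intent each caller actually had, per-intent accuracy shows which flows to fine-tune or redesign first. The record format and intent names below are hypothetical.

```python
from collections import Counter


def intent_accuracy(records: list[tuple[str, str]]) -> dict[str, float]:
    """records: (expected_intent, predicted_intent) pairs from reviewed pilot calls."""
    totals: Counter = Counter()
    correct: Counter = Counter()
    for expected, predicted in records:
        totals[expected] += 1
        if expected == predicted:
            correct[expected] += 1
    return {intent: correct[intent] / totals[intent] for intent in totals}


records = [
    ("password_reset", "password_reset"),
    ("password_reset", "order_status"),  # misrouted call
    ("order_status", "order_status"),
    ("order_status", "order_status"),
]
scores = intent_accuracy(records)
for intent, acc in sorted(scores.items(), key=lambda kv: kv[1]):  # weakest intents first
    print(f"{intent}: {acc:.0%}")
# password_reset: 50%
# order_status: 100%
```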


The Infrastructure Choice: DIY Telephony vs. FreJun’s Voice Platform

The platform you build upon will determine your project’s speed, cost, and ultimate performance. Here is a direct comparison.

| Aspect | Building Your Own Voice Infrastructure | Building Your Llama 2 Voice Bot on FreJun |
|---|---|---|
| Time to Market | 9-12 months of complex, specialized engineering work. | 2-4 weeks. Integrate a proven API and focus on your core AI. |
| Latency | High risk of conversational delays, leading to poor UX. | Ultra-low latency by design, engineered for real-time AI conversations. |
| Scalability | A massive and continuous engineering challenge. | Effortlessly scales from one to thousands of concurrent calls. |
| Reliability | Your team is responsible for building and maintaining a 24/7 system. | 99.95% uptime on a resilient, geographically distributed network. |
| Developer Focus | 80% on managing telephony, 20% on improving the AI. | 100% focused on building and refining your AI and business logic. |
| Cost | High upfront and ongoing costs for servers, carriers, and staff. | Predictable, scalable pricing with no upfront infrastructure investment. |


Transforming Industries with Voice Automation

A custom-built Llama 2 voice bot can be applied across numerous sectors to drive efficiency and improve customer experience. For example, in customer support:

- User: “Hi, I need to reset my password.”

- ASR: Transcribes the voice to text.

- Llama 2: Identifies the “password reset” intent.

- Bot Logic: The application verifies the user’s identity against the CRM.

- TTS Response: The bot replies, “Sure, I can help with that. For your security, I’ve just sent a password reset link to the email address we have on file. It should arrive in the next minute.”

This entire workflow is automated, instant, and available 24/7, freeing up human agents for more complex issues.
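The “Bot Logic” step of this example can be sketched as follows. The article does not say how identity is verified, so the caller-ID lookup, the ZIP-code check, the `CRM` dictionary, and the `SENT_LINKS` list (standing in for an email service) are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Customer:
    customer_id: str
    email: str
    zip_code: str  # hypothetical verification factor


# Hypothetical CRM lookup keyed by caller ID.
CRM = {"+15550100": Customer("C42", "ana@example.com", "94107")}
SENT_LINKS: list[str] = []  # stands in for an email service


def handle_password_reset(caller_id: str, spoken_zip: Optional[str]) -> str:
    """Verify the caller against the CRM, then trigger a reset link to the email on file."""
    customer = CRM.get(caller_id)
    if customer is None or spoken_zip != customer.zip_code:
        return "I'm sorry, I couldn't verify your identity. Let me connect you with an agent."
    SENT_LINKS.append(customer.email)  # a real system would call its email provider here
    return ("Sure, I can help with that. For your security, I've just sent a password "
            "reset link to the email address we have on file.")


print(handle_password_reset("+15550100", "94107"))
```

Note that the reply never reads the email address aloud; the link goes only to the address already on file, which is the point of the security step in the example.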

Final Thoughts: Move Faster and Build Smarter

The open-source AI movement, led by models like Llama 2, has opened up a world of possibilities for call automation. Your ability to capitalize on this opportunity depends on how quickly and effectively you can bring your ideas to market.

Don’t let the immense complexity of telecommunications become the anchor that weighs down your project. By leveraging a dedicated voice infrastructure platform like FreJun, you abstract away that complexity.

You empower your team to focus on what they do best: building a smarter, more effective, and more valuable AI experience for your customers. This is how you win in the new era of conversational AI.



Frequently Asked Questions (FAQ)

Does FreJun require a specific AI model?

No. FreJun is an AI-agnostic platform. We provide the voice infrastructure and the real-time streaming API that allows you to connect any AI model you choose, including Llama 2. This gives you complete control over your AI stack.

Can I host my Llama 2 model on my own infrastructure?

Yes. One of the major advantages of using an open-source model like Llama 2 is that you can host it in your own private cloud or on-premise environment for maximum security and control. FreJun securely connects to your application wherever it is hosted.

What is the biggest challenge in building a voice bot?

Latency is by far the biggest challenge. Even a small delay in response time can make a conversation feel unnatural and frustrating. This is why using an infrastructure provider that is purpose-built for low-latency, real-time communication is essential for success.

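How well does the bot understand speech in difficult conditions?

The bot’s ability to understand speech in challenging conditions, such as strong accents or background noise, depends on the quality of your chosen Automatic Speech Recognition (ASR) service. A best practice is to test it against diverse voice samples that reflect your real callers and, where your ASR allows it, fine-tune or adapt it on that data to improve accuracy.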
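To compare ASR options on your own callers, word error rate (WER) is the standard metric: the word-level edit distance between a human reference transcript and the ASR output, divided by the number of reference words. A minimal Python implementation follows; running it separately on quiet and noisy recordings, or on different accent groups, is one way to see where a given ASR service struggles.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dist[i][j] = edit distance between the first i reference words and first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[len(ref)][len(hyp)] / len(ref)


print(round(word_error_rate("I need to reset my password", "I need to set my password"), 3))  # 0.167
```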