We are living in a time of magic. You can type a question into a chat box and a computer writes a poem or solves a math problem or plans your vacation in seconds. Artificial Intelligence models like GPT-4 and Claude have changed how we think about software.

But there is one big problem. Most of these AI models are trapped behind a keyboard. You have to type to them. You have to read their answers.

Imagine if you could just pick up your phone and talk to them. Imagine if customer service was not a menu of buttons but a friendly conversation with a super intelligent agent.

This is the next frontier of technology. It is called conversational AI voice. The challenge is not building the brain because the AI is already smart enough. The challenge is building the mouth and ears.

To make an AI speak you need to connect the telephone network to the AI model. You do this through voice API integration.

In this guide we will explain exactly how to build these connections. We will look at how LLM voice pipelines work, why speed is the most important factor, and how infrastructure platforms like FreJun AI provide the essential plumbing to bring these voice agents to life.

Table of contents

- What Is Voice API Integration for AI?

- Why Is Latency the Enemy of AI Voice?

- How Do LLM Voice Pipelines Work?

- How Does FreJun AI Simplify Connectivity?

- How Do You Choose the Right AI Model?

- How to Build the Integration Step by Step?

- What Are the Key Challenges in AI Model Voice Integration?

- What Are the Use Cases for Conversational AI Voice?

- Why Is Infrastructure the Backbone?

- Conclusion

- Frequently Asked Questions (FAQs)

What Is Voice API Integration for AI?

To understand how to connect an AI model we first need to understand the bridge.

On one side you have the telephony network. This is the world of phone numbers and carriers and audio signals. It is old and complex.

On the other side you have the AI model. This is the world of text and tokens and APIs. It lives on modern servers.

A voice API integration is the code that sits in the middle. It acts as a translator. It takes the audio from the phone call and converts it into data the AI can understand. Then it takes the answer from the AI and converts it back into audio for the caller to hear.

The result is AI model voice integration. It turns a static text bot into a dynamic voice agent.

Why Is Latency the Enemy of AI Voice?

When you type a message to a chatbot you do not mind waiting two or three seconds for an answer. You see the little typing bubbles and you wait.

Voice is different. Silence in a conversation is awkward. If you say “Hello” and the other person waits three seconds to answer you think the call dropped. You might say “Hello?” again. Then the AI answers and you talk over each other. It is a mess.

This is why infrastructure is critical. You cannot just use any API. You need a platform built for speed.

FreJun AI is designed specifically for this. We handle the complex voice infrastructure so you can focus on building your AI. Our platform uses real time media streaming to ensure that audio travels from the caller to your AI model in milliseconds. This low latency connection is what makes the conversational AI voice feel human.
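To make the latency problem concrete, here is a minimal sketch of a latency budget. Every stage between the caller speaking and the caller hearing a reply adds delay, and the caller feels the sum. The stage names and the millisecond values below are assumed placeholders for illustration, not measurements of FreJun or of any provider.

```python
# Illustrative per-stage delays in milliseconds. These are assumed
# placeholder values, not measurements of any real provider.
STAGE_LATENCY_MS = {
    "network_in": 50,    # caller audio reaching your server
    "stt": 200,          # speech to text finalizing the transcript
    "llm": 400,          # model producing the first usable text
    "tts": 150,          # text to speech producing the first audio
    "network_out": 50,   # audio travelling back to the caller
}


def total_latency_ms(stages: dict[str, int]) -> int:
    """Sum the delay of every stage the caller has to wait through."""
    return sum(stages.values())


def over_budget(stages: dict[str, int], budget_ms: int) -> bool:
    """Return True when the round trip exceeds the chosen budget."""
    return total_latency_ms(stages) > budget_ms


if __name__ == "__main__":
    print("round trip:", total_latency_ms(STAGE_LATENCY_MS), "ms")
    print("over a 1000 ms budget:", over_budget(STAGE_LATENCY_MS, 1000))
```

Replacing the placeholders with numbers you measure yourself shows quickly which stage is eating the budget.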

How Do LLM Voice Pipelines Work?

To connect an AI model you need to build a pipeline. A pipeline is a series of steps that the data must flow through.

A standard LLM voice pipeline has three main stages:

- The Ears (STT): This stands for Speech to Text. The system captures the user’s voice and turns it into written words.

- The Brain (LLM): This stands for Large Language Model. The system takes the text and decides what to say next.

- The Mouth (TTS): This stands for Text to Speech. The system turns the AI’s text response back into audio.
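The three stages above can be sketched as three plain functions chained together. This is only an illustration of the shape of the pipeline: `run_turn` and the three `fake_` stubs are hypothetical names, and a real system would call an actual STT, LLM, and TTS provider in their place.

```python
from typing import Callable

# Each stage is just a function, so any provider can be swapped in.
SpeechToText = Callable[[bytes], str]
LanguageModel = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


def run_turn(
    audio_in: bytes,
    stt: SpeechToText,
    llm: LanguageModel,
    tts: TextToSpeech,
) -> bytes:
    """Run one conversational turn: ears, then brain, then mouth."""
    transcript = stt(audio_in)
    reply_text = llm(transcript)
    return tts(reply_text)


# Stub stages for illustration only. They do no real speech processing.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are the words


def fake_llm(text: str) -> str:
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend the text bytes are audio


if __name__ == "__main__":
    print(run_turn(b"hello", fake_stt, fake_llm, fake_tts))
```

Keeping each stage behind a simple function signature is what lets you change one provider without touching the other two.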

Here is a table showing the difference between a text bot and a voice bot pipeline.

| Feature | Text Chatbot Pipeline | Voicebot Pipeline |
| --- | --- | --- |
| Input | User types text | User speaks audio |
| Processing | Text goes directly to LLM | Audio must be transcribed first |
| Speed | Delays are acceptable | Speed must be real time |
| Output | Text appears on screen | Audio plays over the phone |
| Infrastructure | Simple HTTP requests | Complex media streaming |
| Complexity | Low | High |

How Does FreJun AI Simplify Connectivity?

Building this pipeline from scratch is incredibly hard. You have to manage WebSockets, audio encoding, and jitter buffers.

FreJun AI acts as the transport layer. We do not force you to use a specific brain or a specific voice. We are model agnostic.

You can choose OpenAI for the brain and ElevenLabs for the voice. FreJun simply provides the high speed highway that connects them.

- We capture the audio from the call via FreJun Teler.

- We stream that audio to your chosen Speech to Text provider.

- We take the audio response from your Text to Speech provider and play it back to the caller.

We handle the difficult networking parts so you can just plug in your API keys and start building.

How Do You Choose the Right AI Model?

Because FreJun allows for open voice API integration you have the freedom to choose the best model for your needs.

General Purpose Models

Models like GPT-4 or Claude are great for general conversation. They are smart and can handle a wide range of topics. They are perfect for customer support agents who need to answer varied questions.

Specialized Models

For specific industries like healthcare or law you might want a specialized model. These are trained on specific data.

Speed vs Intelligence

This is a trade off you will have to manage. The smartest models are often the slowest. The fastest models might be less smart. Every millisecond saved in transport is a millisecond the model can spend thinking, so with FreJun providing low latency transport you have more room to use smarter models without making the user wait too long.
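One way to reason about this trade off is to pick the smartest model that still fits inside the time left after transport. The sketch below assumes made up model names, quality scores, and latencies. None of them describe real models, so substitute your own measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    quality: int      # higher means smarter (arbitrary illustrative scale)
    latency_ms: int   # assumed time for the model to respond


# Hypothetical candidates. The names and numbers are placeholders.
CANDIDATES = [
    ModelOption("small-fast", quality=1, latency_ms=150),
    ModelOption("medium", quality=2, latency_ms=400),
    ModelOption("large-smart", quality=3, latency_ms=900),
]


def pick_model(
    candidates: list[ModelOption], transport_ms: int, budget_ms: int
) -> Optional[ModelOption]:
    """Pick the smartest model that fits in what transport leaves over."""
    remaining = budget_ms - transport_ms
    fitting = [m for m in candidates if m.latency_ms <= remaining]
    return max(fitting, key=lambda m: m.quality, default=None)


if __name__ == "__main__":
    # Faster transport leaves room for a smarter model.
    print(pick_model(CANDIDATES, transport_ms=100, budget_ms=1000))
    print(pick_model(CANDIDATES, transport_ms=300, budget_ms=1000))
```

With these placeholder numbers, cutting transport from 300 ms to 100 ms is what moves the choice from the medium model to the large one.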

How to Build the Integration Step by Step?

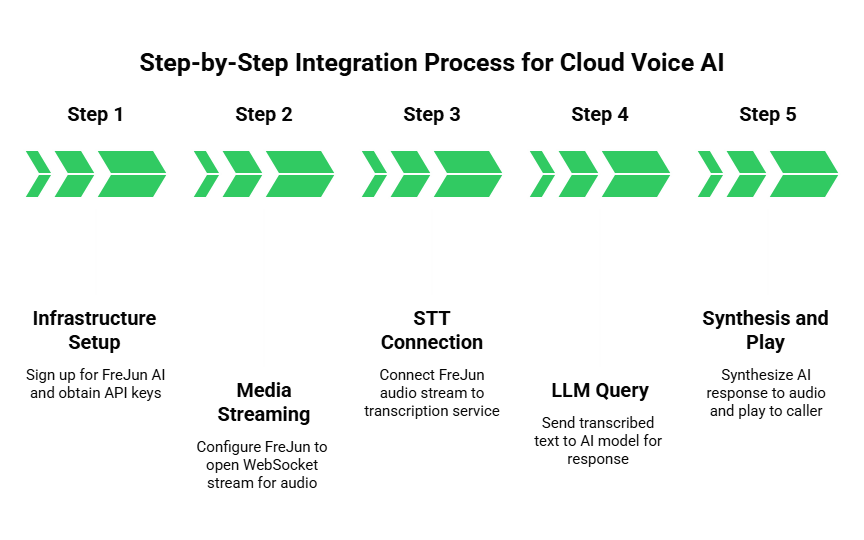

If you are a developer ready to connect your AI model here is the high level process.

Step 1 Get Your Infrastructure Ready

You need a way to make and receive calls. Sign up for a FreJun AI account to get your API keys. This gives you access to our telephony network.

Step 2 Set Up Media Streaming

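Standard HTTP requests are too slow for voice because every chunk of audio would need its own request and response. You need to use WebSockets, which keep a single connection open for the whole call. Configure FreJun to open a WebSocket stream when a call starts. This creates a two way open pipe for audio data.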
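Audio sent over a WebSocket is often wrapped in small JSON messages. The sketch below shows one way that wrapping could look. The message shape (`event`, `call_id`, `payload`) is a hypothetical format invented for this example and is not FreJun's actual protocol, so check the platform documentation for the real field names.

```python
import base64
import json


def encode_media_frame(call_id: str, audio: bytes) -> str:
    """Wrap raw audio bytes in a JSON text message (hypothetical format)."""
    return json.dumps(
        {
            "event": "media",
            "call_id": call_id,
            # Base64 keeps binary audio safe inside a JSON string.
            "payload": base64.b64encode(audio).decode("ascii"),
        }
    )


def decode_media_frame(message: str) -> tuple[str, bytes]:
    """Return (call_id, audio bytes) from a message in the format above."""
    data = json.loads(message)
    if data.get("event") != "media":
        raise ValueError(f"unexpected event: {data.get('event')!r}")
    return data["call_id"], base64.b64decode(data["payload"])


if __name__ == "__main__":
    message = encode_media_frame("call-123", b"\x00\x01\x02\x03")
    print(message)
    print(decode_media_frame(message))
```

The same two functions work in both directions of the pipe: decode what arrives from the caller, encode what you send back.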

Step 3 Connect the STT

Point the incoming audio stream from FreJun to your transcription service (like Deepgram). As the user speaks FreJun sends the raw audio and the service returns text.
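Streaming transcription services usually receive audio in small fixed size frames rather than one large file. Here is a minimal sketch of that framing, assuming 16 bit mono linear PCM at 8 kHz, which is a common telephony format. The format your provider actually delivers may differ, and the function names are illustrative.

```python
from typing import Iterator


def frame_size_bytes(
    sample_rate_hz: int,
    frame_ms: int,
    bytes_per_sample: int = 2,  # 16 bit samples (assumed)
    channels: int = 1,          # mono (assumed)
) -> int:
    """Number of bytes in one frame of uncompressed PCM audio."""
    samples_per_frame = sample_rate_hz * frame_ms // 1000
    return samples_per_frame * bytes_per_sample * channels


def iter_frames(pcm: bytes, frame_bytes: int) -> Iterator[bytes]:
    """Yield consecutive frames; the final frame may be shorter."""
    for start in range(0, len(pcm), frame_bytes):
        yield pcm[start:start + frame_bytes]


if __name__ == "__main__":
    size = frame_size_bytes(sample_rate_hz=8000, frame_ms=20)
    one_second_of_silence = b"\x00" * (8000 * 2)
    frames = list(iter_frames(one_second_of_silence, size))
    print(size, "bytes per frame,", len(frames), "frames per second")
```

Smaller frames reach the transcription service sooner, at the cost of more messages per second.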

Step 4 Query the LLM

Take that text and send it to your AI model API. This is where the magic happens. The AI generates the response.

Step 5 Synthesize and Play

Send the AI response to your TTS provider. Take the resulting audio stream and send it back through the FreJun WebSocket. FreJun plays it to the caller instantly.
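Steps 2 through 5 can be sketched as a single loop that runs for the life of a call. In this illustration two `asyncio.Queue` objects stand in for the two directions of the WebSocket, and `transcribe`, `generate_reply`, and `synthesize` are hypothetical stubs standing in for your STT, LLM, and TTS providers.

```python
import asyncio


# Stub providers for illustration only. They do no real speech processing.
async def transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


async def generate_reply(text: str) -> str:
    return f"You said: {text}"


async def synthesize(text: str) -> bytes:
    return text.encode("utf-8")


async def handle_call(inbound: asyncio.Queue, outbound: asyncio.Queue) -> None:
    """Answer each caller utterance until None signals a hang up."""
    while True:
        audio = await inbound.get()
        if audio is None:  # sentinel: the caller hung up
            break
        text = await transcribe(audio)             # Step 3: STT
        reply = await generate_reply(text)         # Step 4: LLM
        await outbound.put(await synthesize(reply))  # Step 5: TTS and play


async def demo() -> list[bytes]:
    inbound: asyncio.Queue = asyncio.Queue()
    outbound: asyncio.Queue = asyncio.Queue()
    for item in (b"hello", b"book a table", None):
        inbound.put_nowait(item)
    await handle_call(inbound, outbound)
    replies = []
    while not outbound.empty():
        replies.append(outbound.get_nowait())
    return replies


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

In a real deployment the queues would be replaced by reads and writes on the open WebSocket, and each stub by a call to the provider you chose.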

What Are the Key Challenges in AI Model Voice Integration?

Connecting the APIs is just the start. To make the experience good you have to solve some tricky problems.

Handling Interruptions

In a text chat you cannot interrupt the bot. In a voice chat users interrupt all the time. This is called “barge in.” If the bot is talking and the user says “Wait stop,” the bot must stop talking immediately.

FreJun’s real time media processing allows for this. We can detect when the user starts speaking and cut off the audio playback instantly. This makes the conversational AI voice feel respectful and attentive.
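To show the idea behind barge in, here is a minimal sketch that stops playback when an incoming frame is loud enough to be speech. It is a simplification and not a description of how FreJun detects speech: a loudness threshold is a crude stand in for a proper voice activity detector, the threshold value is an assumption, and the `Playback` class is hypothetical. Frames are assumed to be 16 bit PCM in the machine's native byte order.

```python
import math
from array import array


def rms(frame: bytes) -> float:
    """Root mean square loudness of a frame of 16 bit signed PCM samples."""
    samples = array("h")  # "h" = signed 16 bit, native byte order
    samples.frombytes(frame)
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


class Playback:
    """Tracks whether the bot is currently speaking (hypothetical helper)."""

    def __init__(self) -> None:
        self.speaking = False

    def start(self) -> None:
        self.speaking = True

    def stop(self) -> None:
        self.speaking = False


def handle_inbound_frame(
    frame: bytes, playback: Playback, threshold: float = 500.0
) -> bool:
    """Stop playback if the caller is audibly speaking over the bot.

    Returns True when a barge in was detected. The threshold is an
    assumed value and needs tuning against real call audio.
    """
    if playback.speaking and rms(frame) > threshold:
        playback.stop()
        return True
    return False


if __name__ == "__main__":
    playback = Playback()
    playback.start()
    silence = array("h", [0] * 160).tobytes()
    speech = array("h", [4000] * 160).tobytes()
    print(handle_inbound_frame(silence, playback))  # bot keeps talking
    print(handle_inbound_frame(speech, playback))   # bot is cut off
```

Checking every inbound frame while the bot is talking is what lets the cut off happen within one frame of the caller speaking.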

Context Management

The AI needs to remember what was said. If the user says “I want to buy a ticket” and then says “Make it for two people,” the AI must know that “it” refers to the ticket.

You must maintain a “context window” or history of the conversation and send it to the LLM with every new request.
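A conversation history can be as simple as a list of messages that is sent along with each request. The sketch below assumes the role and content message shape that many chat style LLM APIs use. Your provider may expect something different, and the `Conversation` class and its message limit are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    system_prompt: str
    max_messages: int = 20  # assumed cap so requests stay small and fast
    messages: list[dict[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        """Record one message and drop the oldest ones past the cap."""
        self.messages.append({"role": role, "content": content})
        excess = len(self.messages) - self.max_messages
        if excess > 0:
            del self.messages[:excess]

    def as_request(self) -> list[dict[str, str]]:
        """Build the message list to send with the next LLM request."""
        return [{"role": "system", "content": self.system_prompt}, *self.messages]


if __name__ == "__main__":
    chat = Conversation(system_prompt="You sell tickets.")
    chat.add("user", "I want to buy a ticket")
    chat.add("assistant", "Sure. How many people?")
    chat.add("user", "Make it for two people")
    for message in chat.as_request():
        print(message)
```

Because the earlier "I want to buy a ticket" message is still in the list, the model has what it needs to resolve "it" in the follow up. A cap that is too small would drop that message, so choose the limit with your typical call length in mind.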

Scale and Reliability

It is easy to run a demo for one person. It is hard to run a voice agent for 10,000 people at once.

This is where FreJun Teler comes in. Teler offers elastic SIP trunking. This means our system automatically scales up to handle high call volumes. Whether you have ten callers or ten thousand, FreJun ensures the connections remain stable and clear.

What Are the Use Cases for Conversational AI Voice?

Once you have mastered AI model voice integration the possibilities are endless.

- AI Receptionists: Answering phones for dental offices or law firms and booking appointments 24/7.

- Order Takers: Taking pizza orders or drive through requests accurately.

- Therapy and Coaching: Providing an empathetic ear for mental health support or life coaching.

- Language Learning: Practicing a new language by conversing with an AI tutor that corrects your pronunciation.

Why Is Infrastructure the Backbone?

You can write the best code in the world and use the smartest AI model available. But if your voice infrastructure is weak the project will fail.

Voice data is heavy and sensitive. It degrades easily. Packets get lost. Jitter makes voices sound robotic.

FreJun AI is the backbone that supports your LLM voice pipelines. We ensure:

- Security: Your voice data is encrypted.

- Quality: We use high definition audio codecs.

- Reliability: Our distributed network means we are always online.

By using FreJun you are not just connecting an API. You are building on a foundation of enterprise grade telecommunications engineering.

Conclusion

We are moving away from the era of screens and keyboards. We are entering the era of voice. Connecting AI models to the telephone network is the key to unlocking this future.

It enables businesses to offer support that is instant and intelligent and human like. It frees humans from repetitive tasks and gives customers the service they deserve.

But remember that a voice agent is a system of parts. The brain and the ears and the mouth must all be connected by a nervous system that is fast and reliable. That nervous system is voice API integration.

FreJun AI provides the speed and the tools and the scalability you need to build that system. With our low latency streaming and FreJun Teler for elastic capacity we make it easy to turn your AI models into powerful voice agents.

Ready to launch your own voice AI project? Schedule a demo with our team at FreJun Teler and let us show you how fast your AI can speak.

Frequently Asked Questions (FAQs)

What is voice API integration?

Voice API integration is the process of using software code to connect different systems to the telephone network. In the context of AI it connects the audio from a phone call to the artificial intelligence models that process speech and text.

Can I use any AI model with FreJun?

Yes. FreJun is model agnostic. This means you can use OpenAI or Google Gemini or Anthropic Claude or any open source model you prefer. We handle the audio transport and you handle the intelligence.

What is an LLM voice pipeline?

An LLM voice pipeline is the flow of data required to make an AI speak. It moves from Audio to Text (Transcription) then to the LLM (Reasoning) then to Audio (Synthesis) and finally plays back to the user.

Why does low latency matter?

Low latency means low delay. In a conversation if the delay is too long the user gets frustrated and talks over the bot. FreJun optimizes the network to keep this delay as short as possible.

What is barge in capability?

Barge in capability allows the user to interrupt the AI while it is speaking. It is essential for a natural conversation. FreJun supports this by stopping the audio stream the moment the user’s voice is detected.

Does FreJun provide the AI voice itself?

No. FreJun provides the infrastructure to carry the voice. You can use any Text to Speech provider you like such as ElevenLabs or PlayHT or Azure to generate the actual voice sound.

Is it difficult to scale a voice agent?

It can be difficult if you build your own servers. However using FreJun Teler makes it easy. Our elastic SIP trunking automatically adjusts to handle as many calls as you receive.

Is my voice data secure?

Yes. FreJun uses enterprise grade encryption standards to ensure that the voice data flowing through our platform is secure and private.

Can customers call my AI agent on a phone number?

Yes. You can use FreJun to provision a phone number. When customers call that number FreJun will answer and stream the audio to your AI model.

Do I need to be a telecom expert to build a voice agent?

No. FreJun abstracts away the hard telecom parts. If you are a web developer who knows how to work with APIs and WebSockets you can build a voice agent using our platform.