The rise of conversational AI has made voice automation one of the most powerful ways to transform customer experience. But building a real-time voice bot requires more than intelligence: it needs infrastructure that can carry every conversation seamlessly.

Llama 3 delivers the reasoning and natural dialogue capabilities for next-generation support, while FreJun provides the enterprise-grade voice backbone that makes those conversations possible.

Table of contents

- The New Standard for Customer Support: Intelligent, Instant, and Always On

- The AI Is Ready, But Your Infrastructure Isn’t: The Real-Time Voice Challenge

- The Modern Voice Architecture: Separating the AI Brain from the Infrastructure Body

- Core Components of an Enterprise-Grade Voice Bot Using Llama 3

- A Step-by-Step Guide to Building Your Voice Bot

- DIY Infrastructure vs. FreJun’s Managed Voice Platform: A Comparison

- Final Thoughts: Build Your AI Asset on a Foundation Built for Voice

- Frequently Asked Questions (FAQ)

The New Standard for Customer Support: Intelligent, Instant, and Always On

For years, businesses have been caught in a frustrating loop: scale customer support by hiring more agents, driving up costs and complexity. Automated systems like IVRs promised efficiency but delivered frustration. Today, this paradigm is being broken by the advent of truly powerful, open-source large language models. With the release of Meta’s Llama 3, the ability to create genuinely intelligent, human-like conversational AI is no longer a futuristic dream; it’s a practical reality.

A voice bot using Llama 3 can understand complex queries, maintain conversational context, and automate sophisticated support workflows. With its advanced reasoning and fine-tuned dialogue capabilities, Llama 3 is the perfect “brain” for a next-generation customer support agent. However, even the most brilliant AI is useless if it can’t communicate effectively. The real challenge lies not in the AI itself, but in connecting it to your customers over a live phone call with the speed and clarity a real conversation demands.

The AI Is Ready, But Your Infrastructure Isn’t: The Real-Time Voice Challenge

Many ambitious development teams dive into building a voice bot, focusing all their energy on the AI stack. They expertly integrate Speech-to-Text (STT), configure the Llama 3 model, and connect a Text-to-Speech (TTS) engine. In a local test environment, it’s a masterpiece.

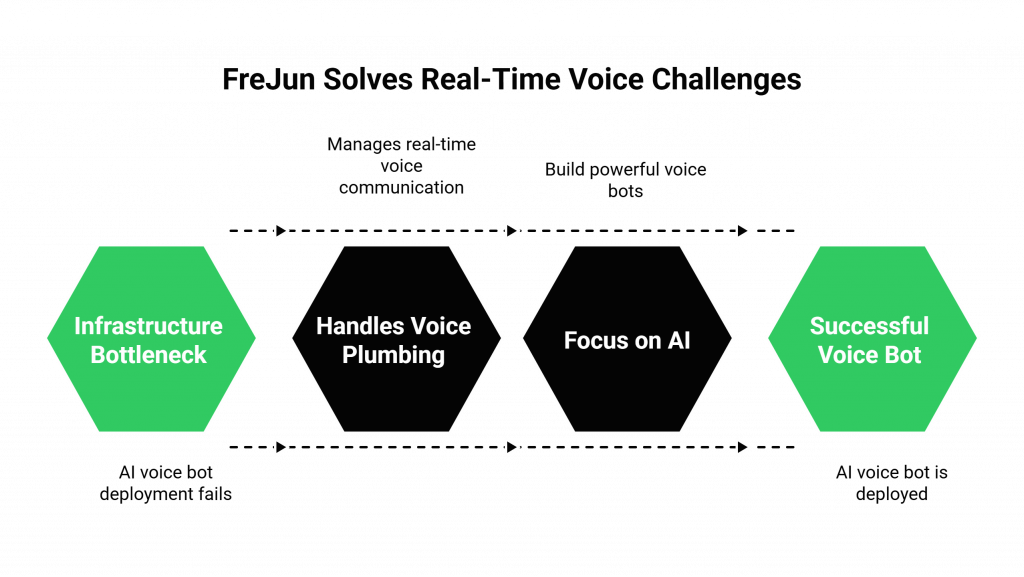

Then they attempt to deploy it on a live phone number. The project grinds to a halt. This is where the real-world complexity of voice communication reveals itself. The team is suddenly faced with a host of infrastructure problems they are unprepared to solve:

- Crippling Latency: Every conversational turn passes through a chain of steps: the customer speaks, the audio travels to your STT, Llama 3 processes the text, your TTS generates the spoken response, and the audio plays back to the customer. The cumulative delay across these steps is the number one killer of conversational AI. A pause of even two seconds feels unnatural and leads to a broken, frustrating experience.

- Telephony Complexity: Managing the intricate world of SIP trunks, real-time media protocols (RTP), carrier integrations, and global call routing requires deep, specialized expertise. It’s a massive technical distraction from the core goal of building a great AI.

- Scalability and Reliability: An infrastructure that works for one or two concurrent calls will fail under the load of hundreds or thousands. Building a resilient, secure, and geographically distributed voice network that guarantees enterprise-grade uptime is a monumental task.
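
To make the latency point concrete, the round trip can be treated as a sum of sequential stage delays. The sketch below is only an illustration: the stage names and millisecond figures are hypothetical placeholders, not measurements of FreJun, Llama 3, or any STT/TTS provider.

```python
# Hypothetical per-stage delays in milliseconds; replace with your own measurements.
STAGE_LATENCY_MS = {
    "network_inbound": 80,    # caller audio reaching your application
    "stt": 300,               # speech-to-text transcription
    "llm": 700,               # Llama 3 generating a response
    "tts": 250,               # text-to-speech synthesis
    "network_outbound": 80,   # synthesized audio reaching the caller
}


def total_latency_ms(stages: dict[str, int]) -> int:
    """Return the end-to-end delay as the sum of all sequential stages."""
    return sum(stages.values())


def slowest_stage(stages: dict[str, int]) -> str:
    """Return the name of the stage that contributes the most delay."""
    return max(stages, key=stages.get)


if __name__ == "__main__":
    total = total_latency_ms(STAGE_LATENCY_MS)
    print(f"end-to-end: {total} ms, slowest stage: {slowest_stage(STAGE_LATENCY_MS)}")
```

With these placeholder numbers the total comes to 1410 ms, roughly 1.4 seconds, which shows how quickly individually reasonable delays add up to a noticeable pause.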

This infrastructure problem is precisely what FreJun was built to solve. We handle the complex, real-time voice plumbing so your team can focus on what it does best: building a powerful voice bot using Llama 3.

The Modern Voice Architecture: Separating the AI Brain from the Infrastructure Body

To build a production-grade voice bot that delivers a seamless customer experience, you must adopt a modern, decoupled architecture. This approach separates the AI’s intelligence from the voice’s transport layer, allowing you to use the best possible tool for each job.

- The AI Brain (Your Application): This is the intelligent core that you build, own, and control. It’s where your unique business logic resides and consists of three key services working together:

  - STT: Transcribes the caller’s voice into text.

  - NLP Engine (Llama 3): Processes the text to understand intent, manage the conversation, and generate a response.

  - TTS: Converts the AI’s text response back into a natural-sounding voice.

- The Voice Infrastructure (FreJun’s Platform): This is the high-speed, reliable network that connects your AI Brain to your customers. FreJun manages the entire real-time communication pipeline, providing a robust API and developer-first SDKs to handle:

  - Live Call Management: Handling all inbound and outbound call connections on the global telephone network.

  - Real-Time Audio Streaming: A purpose-built, low-latency API to stream audio from the call to your STT and from your TTS back to the caller.

  - Enterprise-Grade Reliability: A scalable, secure, and high-availability foundation for your voice application.

This architecture is the key to unlocking the full potential of your voice bot using Llama 3, ensuring its intelligence is delivered with flawless performance.
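
One way to picture this separation in code is to hide each AI service behind a small interface, so that any provider can be swapped without touching the rest of the application. The following is a minimal sketch under stated assumptions: the class and method names (`SpeechToText`, `LanguageModel`, `TextToSpeech`, `VoiceBrain`, `handle_turn`) are hypothetical and are not part of FreJun's SDK or any Llama 3 library, and the `Fake*` classes are stand-ins so the example runs without external services.

```python
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def reply(self, text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


class VoiceBrain:
    """The 'AI Brain': three swappable services behind one method."""

    def __init__(self, stt: SpeechToText, llm: LanguageModel, tts: TextToSpeech) -> None:
        self.stt = stt
        self.llm = llm
        self.tts = tts

    def handle_turn(self, caller_audio: bytes) -> bytes:
        """Turn one caller utterance into one synthesized reply."""
        text = self.stt.transcribe(caller_audio)
        answer = self.llm.reply(text)
        return self.tts.synthesize(answer)


# Stand-in implementations (hypothetical) that treat text bytes as "audio".
class FakeSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class FakeLLM:
    def reply(self, text: str) -> str:
        return f"You said: {text}"


class FakeTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


if __name__ == "__main__":
    brain = VoiceBrain(FakeSTT(), FakeLLM(), FakeTTS())
    print(brain.handle_turn(b"where is my order"))
```

In this arrangement the voice infrastructure only needs to hand audio bytes to `handle_turn` and play back whatever bytes come out, so the transport layer and the AI services can evolve independently.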

Core Components of an Enterprise-Grade Voice Bot Using Llama 3

An effective voice bot is a symphony of four distinct technologies working in perfect synchronization.

- Speech-to-Text (STT): This is the bot’s “ear.” It listens to the customer’s raw voice audio from the phone call and transcribes it into text in real time. Popular choices include Google Speech-to-Text or open-source models like Whisper.

- NLP Engine (Llama 3): This is the bot’s “brain.” The transcribed text is fed into your Llama 3 model, which uses its advanced reasoning capabilities to interpret the user’s intent, understand the context of the conversation, and generate a meaningful, accurate response.

- Text-to-Speech (TTS): This is the bot’s “voice.” Once Llama 3 generates a text response, a TTS engine like Amazon Polly or Azure TTS converts it into a natural, human-like voice that can be played back to the customer.

- Voice Infrastructure: This is the bot’s “nervous system.” It’s the critical layer that handles the live phone call and streams audio back and forth between the customer and your AI services. This is where a specialized API provider like FreJun is essential for ensuring low-latency, real-time performance.

A Step-by-Step Guide to Building Your Voice Bot

This practical guide provides a strategic framework for developing and deploying your voice bot, starting with the most critical element for real-world success.

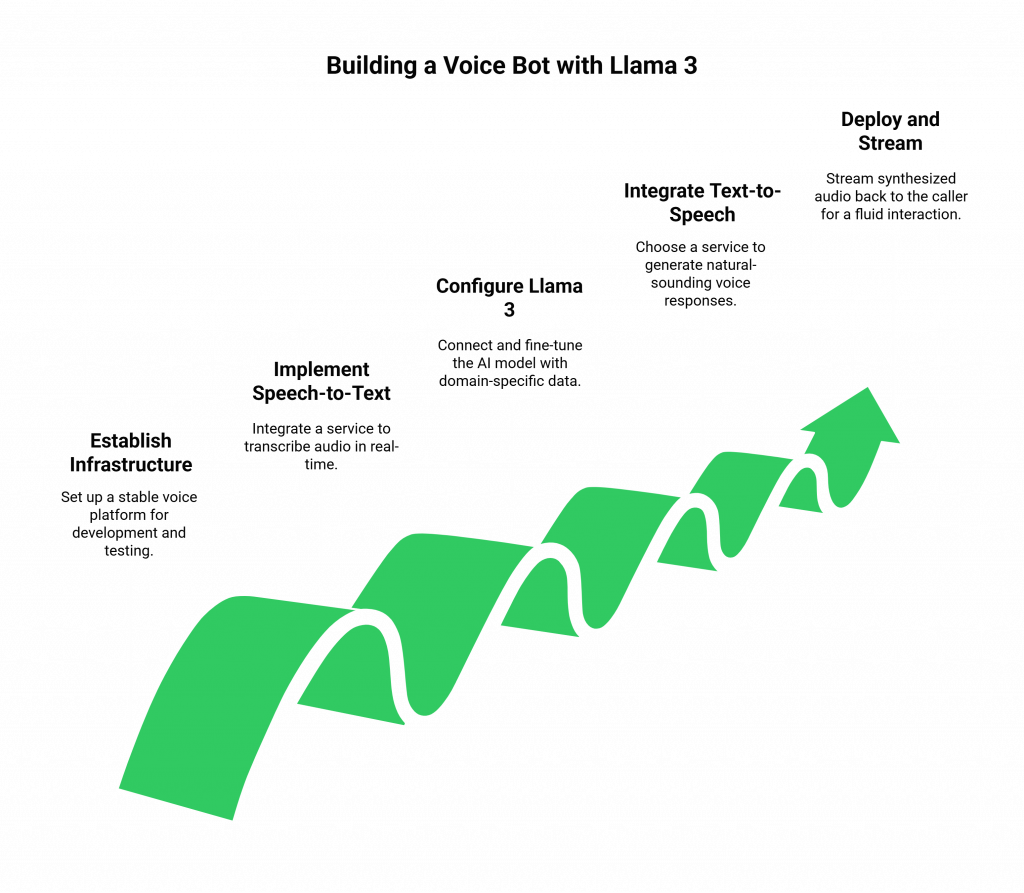

Step 1: Establish Your Voice Infrastructure Foundation

Before configuring your AI, solve the connectivity problem. Integrating with a specialized voice platform like FreJun from the start provides a stable, low-latency environment for development and testing. This foundational step gives you a live phone number and robust SDKs, de-risking the most complex part of the project.

Step 2: Implement Real-Time Speech-to-Text

Set up your chosen STT service. Your application will receive a raw audio stream from the FreJun API and feed it directly to your STT engine for real-time transcription.
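
Streaming STT engines generally consume audio in small, fixed-size frames, while network chunks can arrive in arbitrary sizes. The sketch below shows one possible way to re-frame incoming audio before passing it on. It assumes 16-bit mono PCM at 8 kHz in 20 ms frames; this article does not state the audio format the FreJun API delivers, so treat those constants, and the `FrameBuffer` helper itself, as hypothetical.

```python
SAMPLE_RATE_HZ = 8000   # assumption: narrowband telephony audio
BYTES_PER_SAMPLE = 2    # assumption: 16-bit mono PCM
FRAME_MS = 20           # assumption: 20 ms frames


def frame_size_bytes(sample_rate_hz: int = SAMPLE_RATE_HZ,
                     frame_ms: int = FRAME_MS,
                     bytes_per_sample: int = BYTES_PER_SAMPLE) -> int:
    """Number of bytes in one frame of the given duration."""
    return sample_rate_hz * frame_ms // 1000 * bytes_per_sample


class FrameBuffer:
    """Accumulates arbitrary-size chunks and emits complete fixed-size frames."""

    def __init__(self, size: int) -> None:
        self._size = size
        self._pending = bytearray()

    def push(self, chunk: bytes) -> list[bytes]:
        """Add a chunk and return every complete frame now available."""
        self._pending.extend(chunk)
        frames = []
        while len(self._pending) >= self._size:
            frames.append(bytes(self._pending[:self._size]))
            del self._pending[:self._size]
        return frames  # leftover bytes stay buffered for the next push


if __name__ == "__main__":
    buffer = FrameBuffer(frame_size_bytes())
    for chunk in (bytes(500), bytes(500)):
        print(len(buffer.push(chunk)), "frame(s) ready")
```

Each frame returned by `push` would then be forwarded to whichever STT client you have chosen.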

Step 3: Connect and Configure Your Llama 3 Engine

This is the core of your AI. Connect the text output from your STT service to your Llama 3 model. Fine-tune the model with your company’s domain-specific data, such as product manuals, FAQs, and past support conversations, to improve its accuracy and contextual understanding.
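
Independently of fine-tuning, the model needs the running conversation on every turn to keep context. A common pattern is to hold the dialogue as a list of role/content messages and trim the oldest ones so the prompt stays bounded. The sketch below illustrates that pattern only; the `Conversation` class, the system prompt text, and the `max_messages` limit are hypothetical, and the step that actually sends the messages to your Llama 3 deployment is left out because it depends on how you host the model.

```python
from dataclasses import dataclass, field

# Hypothetical system prompt; adapt it to your own support policies.
SYSTEM_PROMPT = "You are a concise, friendly phone support agent."


@dataclass
class Conversation:
    max_messages: int = 6  # keep only the most recent user/assistant messages
    history: list[dict[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        """Record one message and drop the oldest ones beyond the limit."""
        self.history.append({"role": role, "content": content})
        self.history = self.history[-self.max_messages:]

    def messages(self) -> list[dict[str, str]]:
        """Return the system prompt followed by the retained history."""
        return [{"role": "system", "content": SYSTEM_PROMPT}, *self.history]


if __name__ == "__main__":
    convo = Conversation(max_messages=2)
    convo.add("user", "Where is my order?")
    convo.add("assistant", "Can you share the order number?")
    convo.add("user", "It is 1234.")
    for message in convo.messages():
        print(message["role"], "->", message["content"])
```

Trimming by message count is the simplest policy; a production system might instead trim by token count or summarize older turns.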

Step 4: Integrate a High-Quality Text-to-Speech Service

Pass the text responses generated by Llama 3 to your TTS engine. Choose a service that offers high-quality, natural-sounding voices that align with your brand’s tone and personality.
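
One optional technique for keeping the pause short at this step is to hand the response to the TTS engine sentence by sentence, so playback of the first sentence can begin while later ones are still being synthesized. The article does not prescribe this; it is offered as a sketch, and the `split_sentences` helper is a hypothetical, deliberately naive splitter that will mis-split abbreviations such as "Dr.".

```python
import re

# Split on whitespace that follows sentence-ending punctuation.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def split_sentences(text: str) -> list[str]:
    """Return the non-empty sentences in text, in order."""
    return [part for part in _SENTENCE_END.split(text.strip()) if part]


if __name__ == "__main__":
    reply = "Your order shipped today. It should arrive Friday! Anything else?"
    for sentence in split_sentences(reply):
        print(sentence)  # each sentence would be sent to the TTS engine separately
```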

Step 5: Deploy and Stream Responses via FreJun

Stream the synthesized audio output from your TTS engine back to the FreJun API. Our platform is optimized to play this audio back to the caller with minimal delay, completing the conversational loop and ensuring a fluid, natural interaction. This is the final step in creating a fully functional voice bot using Llama 3.
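
The five steps can be pictured as a single asynchronous loop per call. The sketch below is a simplified illustration, not FreJun's API: the two `asyncio.Queue` objects are hypothetical stand-ins for the inbound and outbound audio streams, the `None` sentinel for hang-up is an assumption, and `transcribe`, `generate_reply`, and `synthesize` are stubs in place of real STT, Llama 3, and TTS calls.

```python
import asyncio


async def transcribe(audio: bytes) -> str:
    """Stub STT: treats the bytes as UTF-8 text."""
    return audio.decode("utf-8")


async def generate_reply(text: str) -> str:
    """Stub for the Llama 3 call."""
    return f"You said: {text}"


async def synthesize(text: str) -> bytes:
    """Stub TTS: returns the text as bytes."""
    return text.encode("utf-8")


async def run_call(inbound: asyncio.Queue, outbound: asyncio.Queue) -> None:
    """Process caller utterances until a None sentinel signals hang-up."""
    while True:
        audio = await inbound.get()
        if audio is None:
            await outbound.put(None)  # propagate end of call
            break
        text = await transcribe(audio)
        reply = await generate_reply(text)
        await outbound.put(await synthesize(reply))


async def demo() -> list[bytes]:
    """Feed two utterances through the loop and collect what would be played."""
    inbound: asyncio.Queue = asyncio.Queue()
    outbound: asyncio.Queue = asyncio.Queue()
    for item in (b"hello", b"track my order", None):
        inbound.put_nowait(item)
    await run_call(inbound, outbound)
    played = []
    while (chunk := outbound.get_nowait()) is not None:
        played.append(chunk)
    return played


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

In a real deployment the queues would be replaced by the streaming connection your voice platform provides, and each stub by the corresponding service client.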

DIY Infrastructure vs. FreJun’s Managed Voice Platform: A Comparison

The choice of how to handle your voice infrastructure will have the single biggest impact on your project’s timeline, budget, and performance.

| Feature | Building Your Own Voice Infrastructure | Building Your Voice Bot on FreJun’s Platform |
| --- | --- | --- |
| Developer Focus | 75% on complex telephony, 25% on the AI. | 100% focused on building and refining your voice bot using Llama 3. |
| Time to Market | 9-12 months of specialized, high-cost engineering. | 2-3 weeks to integrate a proven API and launch your application. |
| Performance & Latency | High risk of conversational delays that destroy the user experience. | Engineered for ultra-low latency, ensuring natural, real-time dialogue. |
| Scalability | A massive and ongoing engineering challenge requiring significant investment. | Effortlessly scales to handle thousands of concurrent calls on demand. |
| Reliability & Uptime | Your team is solely responsible for building and maintaining a 24/7 system. | 99.95% uptime on a resilient, geographically distributed network. |
| Security & Compliance | You must build all security protocols and manage compliance from scratch. | A secure platform that adheres to robust data protection policies. |
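
To put the 99.95% uptime figure from the table in practical terms, an uptime percentage can be converted into a downtime allowance for a given period. The helper below is a plain arithmetic sketch; the function name is hypothetical, and the calculation says nothing about how any provider actually measures or reports uptime.

```python
HOURS_PER_YEAR = 365 * 24       # 8760
HOURS_PER_30_DAY_MONTH = 30 * 24  # 720


def allowed_downtime_hours(uptime_percent: float, period_hours: float) -> float:
    """Hours of downtime permitted in a period at the given uptime percentage."""
    return (100 - uptime_percent) / 100 * period_hours


if __name__ == "__main__":
    yearly = allowed_downtime_hours(99.95, HOURS_PER_YEAR)
    monthly_minutes = allowed_downtime_hours(99.95, HOURS_PER_30_DAY_MONTH) * 60
    print(f"per year: {yearly:.2f} h, per 30-day month: {monthly_minutes:.1f} min")
```

At 99.95%, the allowance works out to about 4.38 hours per year, or roughly 21.6 minutes in a 30-day month.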

Final Thoughts: Build Your AI Asset on a Foundation Built for Voice

The era of intelligent voice automation is here, and open-source models like Llama 3 have made it more accessible than ever. Your competitive advantage will come not just from having AI, but from how quickly and effectively you can deploy it to create a superior customer experience.

Diverting your best engineering talent to the monumental task of building a global telecommunications platform is a strategic mistake. It’s slow, expensive, and distracts from your core mission.

By partnering with FreJun, you leverage a battle-tested, enterprise-grade foundation to handle the voice layer. This allows your team to focus all of its energy on what truly matters: building the smartest, most effective, and most valuable voice AI for your business.

Frequently Asked Questions (FAQ)

FreJun does not lock you into a particular AI model. It is an AI-agnostic voice infrastructure platform that provides the real-time streaming API and call management tools for connecting any AI model you choose, including Llama 3. This gives you complete control over your AI stack.

Low latency matters because the pauses between speakers in a natural human conversation are very short. A delay of more than a second can make an interaction feel awkward and robotic, so keeping latency low is essential for a fluid, engaging user experience that builds trust and prevents customers from hanging up.

The bot can integrate with your existing business systems. Your backend application, which contains the Llama 3 logic, can use APIs to connect to any CRM, helpdesk, or database. This allows your bot to pull customer history, create support tickets, and perform other actions in real time.

Security is critical. Your application must be designed to handle sensitive data in compliance with regulations like GDPR. Using a secure voice infrastructure provider like FreJun ensures that the data is protected in transit, while hosting an open-source model like Llama 3 on your own servers can provide an additional layer of data control.