Watch any two humans have a conversation. You will notice a constant, fluid, and almost subconscious dance of interruption. One person starts a sentence, the other jumps in with a quick confirmation (“right,” “got it”), and the first person continues without missing a beat. This back-and-forth of overlapping speech, known as “barge-in,” is not a sign of rudeness; it is the very fabric of a natural, efficient conversation.

For a long time, the inability to handle these interruptions was the wall that made conversations with automated voice agents feel so stilted and robotic. The AI would start its pre-programmed monologue, and the user would be forced to listen silently to the end, even if they already knew the answer. This is no longer a limitation we have to accept.

The ability to handle voice interruptions gracefully is one of the most significant advancements in modern conversational AI. For developers building voice bots, mastering the technology and the design principles behind real-time interruption is the key to creating an experience that is not just functional, but truly fluid and human-like.

It is the difference between a user feeling like they are talking at a machine and feeling like they are talking with an assistant. This guide will explore the critical role of barge-in and the architectural components required to enable truly full-duplex audio interactions.

What is Barge-In and Why is it the Key to a Natural Conversation Flow?

Barge-in is the technical term for a user’s ability to interrupt a voice bot while it is speaking. A “half-duplex” conversation is like using a walkie-talkie: only one person can talk at a time. A “full-duplex” conversation is like a natural phone call: both parties can speak and be heard simultaneously. A voice bot that supports barge-in is one that can engage in a full-duplex interaction.

The Frustration of the Monologue

The user experience without barge-in is a common source of frustration. Imagine this scenario:

- AI Voice Bot: “Thank you for calling. Before we get started, please be aware that our business hours have recently changed. We are now open Monday through Friday from 9 AM to 5 PM, and on Saturdays from 10 AM to…”

- User (thinking): “I know, I know! I just want to pay my bill!”

The user is held hostage, forced to listen to irrelevant information. This lack of control makes the interaction feel slow, inefficient, and disrespectful of the user’s time. An AI that forces a user to listen to a long monologue is failing at its primary objective: helping the user get something done quickly.

The Power of an Interrupted Dialogue

Now, imagine the same scenario with barge-in detection enabled.

- AI Voice Bot: “Thank you for calling. Before we get started, please be aware that our business…”

- User (interrupting): “I’d just like to pay my bill.”

- AI Voice Bot: [Instantly stops speaking] “Okay, I can help with that. Are you ready to make a payment now?”

This is a completely different experience. The user feels in control, the conversation is more efficient, and the AI feels dramatically more intelligent and responsive. This is the gold standard for a natural conversation flow.

What is the Technical Architecture for Enabling Barge-In?

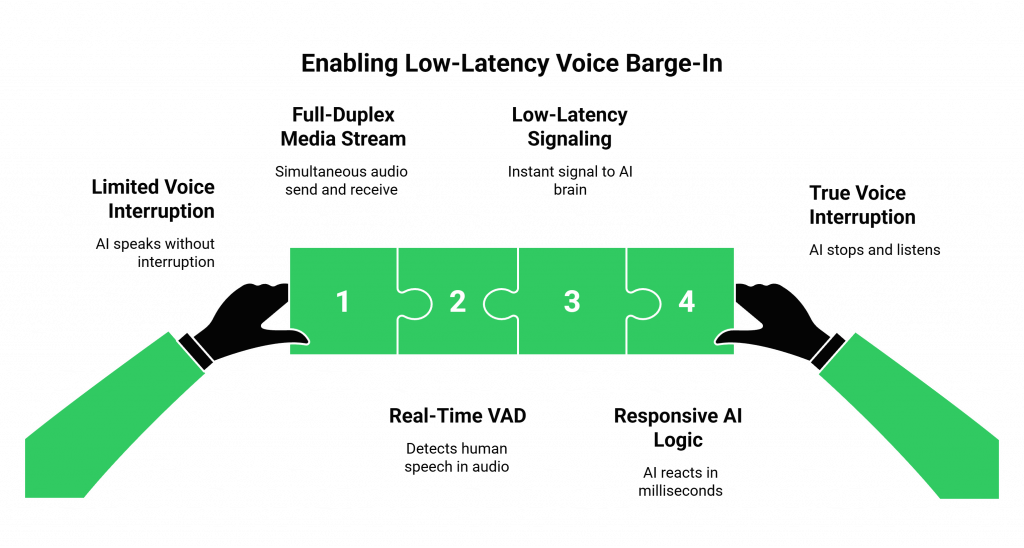

Enabling true, low-latency voice interruption support is a sophisticated engineering challenge. It requires a deep, real-time integration between the voice infrastructure and the AI’s “brain.” It is not a single feature but a carefully orchestrated, high-speed workflow.

The core components required are:

- A Full-Duplex Media Stream: The underlying voice platform must be able to simultaneously send audio to the user (the AI’s speech) and receive audio from the user.

- Real-Time Voice Activity Detection (VAD): This is the “ears” of the barge-in system. A piece of software, often running at the network edge, is constantly listening to the audio stream from the user. It can distinguish between background noise and the start of human speech.

- A Low-Latency Signaling Mechanism: The moment the VAD detects that the user has started speaking, it needs to send an instant signal to the AI’s brain.

- Responsive AI Logic: The AI’s brain (your application) must be designed to receive this signal and react to it in milliseconds.
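To make the VAD component above more concrete, here is a minimal sketch of the simplest possible approach: an energy threshold over short audio frames. This is an illustrative stand-in, not a description of how any production VAD (including Teler's) works; real systems typically use trained models. The function names, the `threshold` value, and the `min_speech_frames` parameter are all assumptions made for this example.

```python
import math
from typing import Iterable, Sequence


def frame_rms(frame: Sequence[int]) -> float:
    """Root-mean-square energy of one frame of 16-bit PCM samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def detect_speech_start(
    frames: Iterable[Sequence[int]],
    threshold: float = 500.0,    # assumed RMS level separating noise from speech
    min_speech_frames: int = 3,  # consecutive loud frames required before firing
) -> int:
    """Return the index of the frame where speech is judged to start, or -1."""
    consecutive = 0
    for index, frame in enumerate(frames):
        if frame_rms(frame) >= threshold:
            consecutive += 1
            if consecutive >= min_speech_frames:
                # Report the first frame of the loud run, not the one that confirmed it.
                return index - min_speech_frames + 1
        else:
            # A quiet frame resets the run, so short noise bursts are ignored.
            consecutive = 0
    return -1
```

Requiring several consecutive loud frames is what keeps a door slam or a cough from being treated as a barge-in. The trade-off is latency: each extra frame of confirmation delays the signal by one frame length.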

How a Modern Voice Platform like FreJun AI Makes This Possible

This complex orchestration is exactly what a modern, developer-first voice platform is designed to handle. When you are building voice bots on FreJun AI, our Teler engine and Real-Time Media API provide the essential building blocks for barge-in detection.

Here is how the workflow operates on our platform:

- The AI Speaks: Your application sends an API command to our Teler engine to play the AI’s synthesized audio response to the user.

- Teler Listens While Speaking: While our Teler engine is playing the audio out, its built-in, edge-based VAD is simultaneously listening to the audio coming in from the user.

- Barge-In Detected: The moment the VAD detects that the user has started talking, Teler does two things in parallel, in real time:

1. It can be configured to automatically stop the outbound audio playback.

2. It sends an immediate event notification (a webhook or a WebSocket message) to your application with the label “Barge-In Detected.”
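On the application side, reacting to that notification can be sketched as a small state machine. The example below is a hedged illustration only: the event type `"barge_in_detected"`, the command strings, and the `BotSession` class are hypothetical names invented for this sketch and are not taken from the FreJun AI API. In a real integration the commands would be API calls and the events would arrive over a webhook or WebSocket.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class State(Enum):
    SPEAKING = "speaking"
    LISTENING = "listening"


@dataclass
class BotSession:
    state: State = State.LISTENING
    # Commands this session would send to the voice platform (recorded for illustration).
    commands: List[str] = field(default_factory=list)

    def speak(self, text: str) -> None:
        """Start playing a response and remember that the bot is talking."""
        self.state = State.SPEAKING
        self.commands.append(f"play:{text}")

    def on_event(self, event: dict) -> None:
        """Handle a platform event; the event shape here is an assumption."""
        if event.get("type") == "barge_in_detected" and self.state is State.SPEAKING:
            # Explicitly stop playback, then hand the floor to the user.
            self.commands.append("stop_playback")
            self.state = State.LISTENING
```

Checking the current state before acting means a duplicate or late event does not issue a second stop command once the bot is already listening.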

This table summarizes the division of labor in enabling barge-in.

| Responsibility | The Voice Platform (e.g., FreJun AI’s Teler) | Your AI Application (Your “AgentKit”) |
| --- | --- | --- |
| Simultaneous Audio I/O | Manages the full-duplex media stream. | – |
| Voice Activity Detection (VAD) | Performs real-time VAD on the user’s audio. | – |
| Sending the Barge-In Signal | Sends the real-time event notification. | – |
| Receiving the Signal | – | Receives the event and understands the context. |
| Stopping the Playback | Can be configured to stop automatically. | Can also send an explicit “stop playback” API command. |
| Deciding What to Do Next | – | Processes the user’s new utterance and formulates the next response. |

Ready to start building AI conversations that actually listen? Sign up for FreJun AI

What Are the Design Principles for a Great Interruption Experience?

Having the technical ability to handle interruptions is only half the battle. You also need to design your AI’s conversational logic to handle them gracefully.

Design for Interruptibility

- Shorter Sentences: Break down your AI’s responses into shorter, more digestible sentences. A user is more likely to interrupt a long, multi-part explanation than a short, concise statement.

- “Leading with the Punchline”: Whenever possible, give the most important piece of information first. For example, instead of saying, “Before we proceed, I need to inform you about our new policy, which states that all payments are…”, say, “Your payment is due on Friday. According to our new policy…” This gives the user the key information up front and reduces their need to interrupt.
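One way to put the "shorter sentences" principle into practice is to split a response into sentence-sized chunks before sending it to playback, so the application always knows how much the user actually heard. The sketch below is a simplified illustration: `split_into_sentences` and `speak_in_chunks` are hypothetical helper names, the regular expression handles only plain `.`, `!`, and `?` endings, and `is_interrupted` stands in for whatever barge-in signal your platform provides.

```python
import re
from typing import Callable, List


def split_into_sentences(text: str) -> List[str]:
    """Split on whitespace that follows sentence-ending punctuation."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def speak_in_chunks(sentences: List[str], is_interrupted: Callable[[], bool]) -> List[str]:
    """Return the sentences that were 'spoken' before an interruption was seen."""
    spoken: List[str] = []
    for sentence in sentences:
        if is_interrupted():
            break  # Stop before starting another sentence.
        spoken.append(sentence)
    return spoken
```

For example, `split_into_sentences("Your payment is due on Friday. According to our new policy, late fees apply.")` yields two chunks, with the key fact in the first one. Chunking complements true barge-in rather than replacing it: playback should still stop mid-sentence when the platform detects speech.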

Acknowledge the Interruption

To make the conversation feel even more natural, your AI can be designed to acknowledge that it was interrupted.

- User (interrupting): “I already have my account number ready.”

- AI Voice Bot: [Stops speaking] “Great! What is that number?”

This small piece of logic makes the AI feel much more aware and intelligent.
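As a rough sketch of that logic, the application can record whether the previous prompt was cut short and adjust the wording of its next turn accordingly. The `Turn` class, the `next_prompt` function, and the fixed acknowledgment word are hypothetical choices for this illustration; a real bot would likely vary the acknowledgment based on what the user said.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    bot_prompt: str        # what the bot was saying
    was_interrupted: bool  # True if a barge-in event cut the prompt short


def next_prompt(turn: Turn, follow_up: str) -> str:
    """Prefix a brief acknowledgment when the user cut the bot off."""
    if turn.was_interrupted:
        return f"Great! {follow_up}"
    return follow_up
```

Calling `next_prompt(Turn("Please have your account number ready", True), "What is that number?")` produces the reply from the dialogue above, while an uninterrupted turn gets the follow-up question unchanged.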

Conclusion

The ability to handle real-time interruptions is no longer a “nice-to-have” feature in an advanced voice bot; it is a fundamental requirement for a positive user experience. The era of the robotic monologue is over. Customers expect to be able to interact with an AI with the same conversational fluidity that they would with a human.

For developers building voice bots, this means that choosing a voice platform with robust, low-latency barge-in detection is a critical architectural decision.

By combining this powerful technology with a thoughtful, interruption-aware approach to conversational design, you can create full-duplex audio interactions that are not just efficient, but are a genuine pleasure for your customers to use.

Want a technical deep dive into our real-time VAD and barge-in capabilities? Schedule a demo for FreJun Teler.

Frequently Asked Questions (FAQs)

Barge-in, or voice interruption support, is the capability that allows a user to speak and interrupt an AI voice bot while it is in the middle of talking. The bot then stops speaking and listens to the user’s new input.

Full-duplex audio interactions are conversations where both parties can speak and be heard at the same time, just like a natural phone call. This is in contrast to “half-duplex,” where only one party can talk at a time, like a walkie-talkie.

Barge-in is critical for a natural conversation flow because it gives the user control over the conversation. It allows them to skip information they already know or to redirect the conversation, making the interaction faster and much less frustrating.

Voice Activity Detection (VAD) is the technology used for barge-in detection. It is a piece of software that analyzes an audio stream in real time and distinguishes between silence, background noise, and the start of human speech.

A developer enables barge-in by using a modern voice platform, like FreJun AI, that provides this capability. When they use an API command to make the bot speak, they can set a parameter that enables interruption.

Handling barge-in requires your application’s logic to be designed around the “Barge-In Detected” event. So, instead of just having a linear script, your code needs to be able to gracefully stop its current action and start a new one based on this real-time event.
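A hedged sketch of what "stop the current action" can look like in code: playback runs as a cancellable task that races against an interruption signal. Everything here is simulated with Python's standard `asyncio` library. `play_audio` merely sleeps to imitate streaming, and the `barge_in` event stands in for the platform's notification; neither is part of any real voice API.

```python
import asyncio
from typing import List


async def play_audio(chunks: List[str], played: List[str]) -> None:
    """Simulated playback: 'plays' one chunk every 10 ms."""
    for chunk in chunks:
        await asyncio.sleep(0.01)
        played.append(chunk)


async def speak_with_barge_in(chunks: List[str], barge_in: asyncio.Event) -> List[str]:
    """Play chunks until done or until barge_in is set; return what was played."""
    played: List[str] = []
    playback = asyncio.create_task(play_audio(chunks, played))
    interrupt = asyncio.create_task(barge_in.wait())
    # Wake up as soon as playback finishes or the user interrupts, whichever is first.
    await asyncio.wait({playback, interrupt}, return_when=asyncio.FIRST_COMPLETED)
    for task in (playback, interrupt):
        if not task.done():
            task.cancel()
    # Let the cancelled task finish unwinding before returning.
    await asyncio.gather(playback, interrupt, return_exceptions=True)
    return played
```

The returned list tells the application how much of the response the user heard before interrupting, which is useful context when deciding what to say next.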

Our platform is built on a low-latency, edge-native architecture. The barge-in detection happens at the edge, close to the user. This means the notification event is sent to your application with minimal delay.