In 2025, developers have more choice than ever when it comes to building lifelike voice AI. ElevenLabs sets the benchmark for expressive speech synthesis, while Vapi.ai enables orchestration and scale at levels never seen before. But comparing these two platforms is only half the story.

To succeed in production, voice AI needs more than intelligence and fluency. It needs a resilient way to move audio across global telephony networks. That’s where FreJun enters as the invisible, yet essential, foundation.

Table of contents

- The Developer’s Dilemma: Choosing Your AI Voice Stack

- Why Building Voice AI Is More Than Just an API Call

- ElevenLabs: The Gold Standard for Expressive AI Voice

- Vapi.ai: The Framework for Scalable Voice Agents

- The Missing Piece: Why Your AI Needs a Voice Transport Layer

- DIY Stack vs. FreJun AI: A Strategic Comparison

- How to Build a Production-Grade Voice Agent: The Modern Stack?

- Final Thoughts: Build Your AI, Not Your Plumbing

- Frequently Asked Questions

The Developer’s Dilemma: Choosing Your AI Voice Stack

The era of robotic, stilted voice assistants is over. In 2025, businesses are deploying hyper-realistic, conversational AI agents that can handle complex queries, book appointments, and provide empathetic support. For developers tasked with building these next-generation voice applications, the landscape of available tools is both exciting and complex. Two names that frequently surface are ElevenLabs, renowned for its state-of-the-art voice synthesis, and Vapi.ai, a powerful framework for deploying voice agents at scale.

This leads to a critical question for development teams: which platform is the right choice? The ElevenLabs vs. Vapi.ai debate is not just about features; it’s about architectural philosophy. Do you prioritize voice quality and expressiveness above all, or do you need a robust, scalable framework designed for very high volumes of concurrent calls?

This guide provides a comprehensive comparison to help you decide. More importantly, it reveals the crucial third component that is often overlooked until it’s too late: the underlying voice infrastructure that connects your brilliant AI to the real world.


Why Building Voice AI Is More Than Just an API Call

Many development teams dive into building a voice agent by focusing exclusively on the AI components: a Speech-to-Text (STT) model, a Large Language Model (LLM) for logic, and a Text-to-Speech (TTS) engine for the response. They might select a best-in-class TTS like ElevenLabs or an orchestration framework like Vapi.ai and assume the hardest part is over.

The reality is that the most significant challenge isn’t generating the audio; it’s managing the real-time, bidirectional stream of that audio over a telephone network. This is the domain of voice infrastructure, a complex world of telephony protocols, latency management, and carrier integrations.

The do-it-yourself (DIY) approach, which stitches together separate APIs for telephony, AI processing, and voice synthesis, creates a fragile and inefficient system plagued by common failure points:

- Compound Latency: Each separate API call in the chain adds milliseconds of delay. The lag between a user finishing their sentence and the AI beginning its response can quickly become awkwardly long, breaking the illusion of a real conversation.

- Scalability Nightmares: Handling ten concurrent calls is different from handling ten thousand. Scaling a self-managed telephony integration requires deep expertise in load balancing, geographic distribution, and carrier management.

- Developer Distraction: Instead of refining the AI’s conversational logic, your team spends its time debugging dropped calls, poor audio quality, and telephony API errors. You end up managing plumbing instead of building your product.

This is where a dedicated voice transport layer becomes essential. It’s the specialized infrastructure that handles the messy reality of real-time voice communication, allowing your chosen AI tools to perform at their best.
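To make the compound latency point concrete, here is a minimal sketch of a latency budget for one conversational turn. The stage names and millisecond figures are illustrative assumptions, not measurements of any vendor; the point is only that sequential hops add up and that it helps to know which one dominates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One hop in the voice pipeline and its assumed latency in milliseconds."""
    name: str
    latency_ms: float


def total_latency_ms(stages: list[Stage]) -> float:
    """Sequential hops add up: the caller waits for every stage in turn."""
    return sum(stage.latency_ms for stage in stages)


def slowest_stage(stages: list[Stage]) -> Stage:
    """Return the stage that contributes the most delay."""
    return max(stages, key=lambda stage: stage.latency_ms)


# Illustrative numbers only (assumptions), not benchmarks of any provider.
PIPELINE = [
    Stage("telephony ingress", 80),
    Stage("speech-to-text", 200),
    Stage("LLM response", 400),
    Stage("text-to-speech", 250),
    Stage("telephony egress", 80),
]

if __name__ == "__main__":
    print(f"total: {total_latency_ms(PIPELINE):.0f} ms")
    print(f"slowest: {slowest_stage(PIPELINE).name}")
```

Swapping in your own measured numbers per stage is usually the quickest way to see where a slow turn is actually spending its time.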

ElevenLabs: The Gold Standard for Expressive AI Voice

Founded in 2022, ElevenLabs has rapidly established itself as a leader in high-quality speech synthesis. Its core strength lies in producing exceptionally realistic and emotionally nuanced voices, making it the top choice for applications where the quality of the voice itself is a primary feature.

Key Strengths and Features

- Unmatched Voice Realism: Using its advanced, in-house developed models like Eleven v3, the platform generates speech that is rich in intonation, emotion, and expression. Audio tags like [excited] or [whispers] give developers granular control over the delivery.

- Advanced Conversational AI Tools: Beyond simple TTS, ElevenLabs offers a suite of tools including Scribe (an accurate ASR with speaker diarization) and even Eleven Music for AI-generated soundscapes.

- Broad Language Support: The platform supports over 70 languages, enabling the creation of expressive, multi-speaker dialogue for global audiences.
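As a rough illustration of how audio tags might be composed into a script before it is sent for synthesis, consider the sketch below. The helper names `with_audio_tag` and `build_script` are hypothetical, and the code makes no API call; which tags a given model accepts, and how it interprets them, is an assumption you should check against the provider's documentation.

```python
def with_audio_tag(tag: str, text: str) -> str:
    """Prefix a line of dialogue with a bracketed audio tag such as [excited]."""
    tag = tag.strip().strip("[]")  # accept both "excited" and "[excited]"
    if not tag:
        raise ValueError("audio tag must not be empty")
    return f"[{tag}] {text.strip()}"


def build_script(lines: list[tuple[str, str]]) -> str:
    """Join (tag, text) pairs into one tagged script string."""
    return " ".join(with_audio_tag(tag, text) for tag, text in lines)


if __name__ == "__main__":
    script = build_script([
        ("excited", "Great news!"),
        ("whispers", "It is a surprise."),
    ])
    print(script)
```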

Ideal Use Cases

ElevenLabs is the ideal choice when voice quality and expressiveness are non-negotiable. This includes:

- Narrating audiobooks with unique character voices.

- Dubbing movies and video content with emotion-sync.

- Creating highly branded, signature voice assistants for companies.

However, while its voice quality is second to none, its architecture is not primarily designed for managing high-volume, real-time telephony. For large-scale voice agent deployments, developers may find its scalability limited unless paired with dedicated external infrastructure.


Vapi.ai: The Framework for Scalable Voice Agents

Vapi.ai takes a different approach. It is a developer-first platform built specifically for building, testing, and deploying conversational voice agents at an immense scale via a single API. It acts as an orchestration layer, integrating with various third-party STT, LLM, and TTS services (including ElevenLabs).

Key Strengths and Features

- Engineered for Low Latency and Scale: Vapi.ai is designed from the ground up for performance, boasting sub-500 ms latency and the ability to handle over one million concurrent calls. Its use of GPU inference and smart caching enables interruption-aware conversations that feel truly real-time.

- Deep Integration Ecosystem: As a framework, it excels at connecting with other tools. It supports over 40 third-party applications, including Twilio, OpenAI, and Deepgram, allowing developers to build a “best-of-breed” AI stack.

- Developer-First Tooling: With an API-native infrastructure, thousands of pre-made templates, and automated testing suites, Vapi.ai accelerates the development-to-deployment lifecycle for complex voice agents.
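To show what "interruption-aware" means in practice, here is a generic sketch of barge-in handling: playback of the agent's reply is cancelled as soon as the caller starts speaking. This is not Vapi.ai's implementation; the function names, the `asyncio.Event` used as a speech signal, and the short sleep standing in for audio playback are all assumptions made for illustration.

```python
import asyncio


async def play_reply(chunks: list[str], played: list[str]) -> None:
    """Stand-in for streaming audio chunks to the caller one at a time."""
    for chunk in chunks:
        played.append(chunk)
        await asyncio.sleep(0.01)  # placeholder for real playback time


async def speak_until_interrupted(chunks: list[str], user_spoke: asyncio.Event) -> list[str]:
    """Play the reply, but stop as soon as the caller is detected speaking."""
    played: list[str] = []
    playback = asyncio.create_task(play_reply(chunks, played))
    interrupt = asyncio.create_task(user_spoke.wait())
    _, pending = await asyncio.wait(
        {playback, interrupt}, return_when=asyncio.FIRST_COMPLETED
    )
    for task in pending:  # cancel whichever side did not finish first
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)
    return played


async def demo(interrupt_first: bool) -> list[str]:
    """Hypothetical driver: optionally signal caller speech before playback."""
    user_spoke = asyncio.Event()
    if interrupt_first:
        user_spoke.set()
    return await speak_until_interrupted(["Hello,", "how can", "I help?"], user_spoke)


if __name__ == "__main__":
    print(asyncio.run(demo(interrupt_first=False)))
    print(asyncio.run(demo(interrupt_first=True)))
```

In a real agent the event would be set by voice activity detection on the inbound audio stream rather than by the caller of the function.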

Ideal Use Cases

Vapi.ai shines in scenarios that demand massive scale and real-time responsiveness:

- Large-scale outbound notification or lead qualification campaigns.

- Inbound customer service bots for enterprise contact centres.

- Any application requiring complex conversational workflows and heavy call volume.

The primary consideration with Vapi.ai is that it is an orchestrator. Developers must still bring (and pay for) their own ASR, LLM, and voice models, which can add cost and complexity to the stack.

Head-to-Head: ElevenLabs vs. Vapi.ai Feature Breakdown

To clarify the ElevenLabs vs. Vapi.ai decision, let’s break down their core differences.

Voice Quality & Expressiveness

ElevenLabs is the clear winner here. Its proprietary models are engineered for emotional nuance and realism, making it the go-to for premium voice experiences. Vapi.ai’s quality depends on the third-party TTS model you integrate with it.

Latency & Performance at Scale

Vapi.ai holds the advantage. Its entire architecture is optimized for sub-500 ms latency and handling over a million concurrent calls, making it purpose-built for high-throughput telephony applications.

Developer Experience & Integration

This is a tie, with different philosophies. Vapi.ai is API-native and built for integrating a wide array of services, offering flexibility. ElevenLabs provides focused APIs and SDKs for deep control over its voice models. Notably, the two are not mutually exclusive; developers can use ElevenLabs as the TTS engine within a Vapi.ai agent.

Ultimately, the choice between ElevenLabs and Vapi.ai depends on your primary goal. For the best voice, choose ElevenLabs. For the most scalable agent framework, choose Vapi.ai. But for either to succeed in a real-world telephony environment, they need a robust foundation.


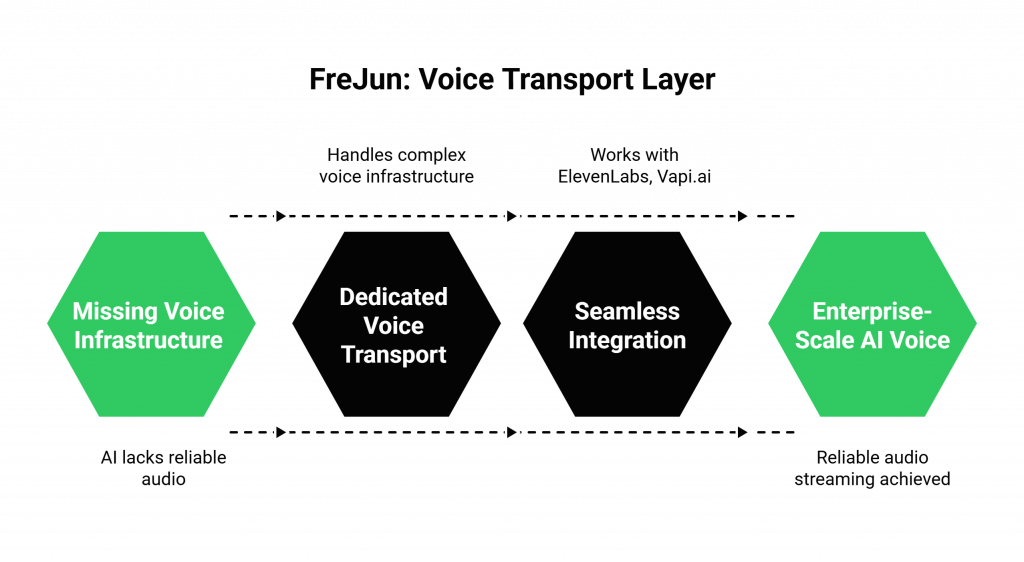

The Missing Piece: Why Your AI Needs a Voice Transport Layer

This brings us to the core of the problem. Both ElevenLabs and Vapi.ai provide powerful tools for the brain and mouth of your AI agent. But neither solves the fundamental challenge of the ears and the connection: the complex voice infrastructure required to reliably stream audio to and from a user over a phone call.

This is the exact problem FreJun AI was built to solve.

FreJun is a dedicated voice transport layer. We handle the complex, mission-critical voice infrastructure so you can focus on building your AI. We are not a competitor to ElevenLabs or Vapi.ai; we are the essential foundation that makes them work seamlessly at an enterprise scale.

How Does FreJun AI Complement Your Stack?

- Stream Voice Input: Our API captures real-time, low-latency audio from any inbound or outbound phone call. This crystal-clear audio stream is forwarded directly to your application and your chosen STT service.

- Process With Your AI: Your application, whether it’s using Vapi.ai for orchestration or custom logic, maintains full control. You process the transcribed text, consult your LLM, and generate a response.

- Generate Voice Response: You take the text response and send it to your chosen TTS service, like ElevenLabs. The resulting audio is piped back to the FreJun API, which plays it back to the user with minimal delay, completing the conversational loop.

We provide the robust, developer-first “plumbing” that ensures the conversation flows smoothly, without the awkward pauses and technical glitches that kill user experience.
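The three steps above can be sketched as a single conversational loop. Everything here is hypothetical: the function names, the callable signatures, and the fake providers are assumptions for illustration and do not reflect the FreJun, Vapi.ai, or ElevenLabs APIs. In a real integration each stub would be replaced by a call to your chosen transport, STT, LLM, and TTS services.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable

# Hypothetical provider signatures; real SDKs will differ.
Transcribe = Callable[[bytes], Awaitable[str]]
Respond = Callable[[str], Awaitable[str]]
Synthesize = Callable[[str], Awaitable[bytes]]
SendAudio = Callable[[bytes], Awaitable[None]]


async def run_turns(
    inbound: AsyncIterator[bytes],
    transcribe: Transcribe,
    respond: Respond,
    synthesize: Synthesize,
    send_audio: SendAudio,
) -> None:
    """For each caller utterance: STT, then LLM, then TTS, then play it back."""
    async for utterance in inbound:
        text = await transcribe(utterance)   # step 1: voice input to text
        if not text:
            continue                          # skip silence or empty audio
        reply = await respond(text)           # step 2: your AI logic
        audio = await synthesize(reply)       # step 3: text to voice
        await send_audio(audio)               # hand audio back to the transport


# Fake providers so the sketch runs without any network access.
async def fake_call_audio() -> AsyncIterator[bytes]:
    for utterance in (b"hello", b"", b"book a table"):
        yield utterance


async def fake_transcribe(audio: bytes) -> str:
    return audio.decode()


async def fake_respond(text: str) -> str:
    return f"You said: {text}"


async def fake_synthesize(text: str) -> bytes:
    return text.encode()


def demo() -> list[bytes]:
    """Run the loop with the fakes and collect what would be played back."""
    played: list[bytes] = []

    async def play(audio: bytes) -> None:
        played.append(audio)

    asyncio.run(
        run_turns(fake_call_audio(), fake_transcribe, fake_respond, fake_synthesize, play)
    )
    return played


if __name__ == "__main__":
    print(demo())
```

Keeping each provider behind a plain callable is one way to stay model-agnostic: swapping the TTS or STT vendor changes one argument, not the loop.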

DIY Stack vs. FreJun AI: A Strategic Comparison

The decision is not just about ElevenLabs vs. Vapi.ai. It’s about how you build your entire voice stack. Here’s how a DIY approach compares to using FreJun AI as your transport layer.

| Feature / Aspect | The DIY Stack (Vapi.ai + ElevenLabs + Telephony API) | The FreJun AI Transport Layer |
| --- | --- | --- |
| Telephony Integration | Complex, multi-vendor integration. Requires managing separate APIs for call control and media streaming. | Unified API for all voice transport. Simple, direct connection between your AI and the phone network. |
| Latency Management | Latency compounds across each separate service. Difficult to diagnose the source of delays. | Optimized end-to-end for low latency. Our entire stack is engineered to minimize transport delay. |
| Scalability & Reliability | Requires self-managed scaling of telephony infrastructure. Prone to single points of failure. | Built on resilient, geographically distributed infrastructure engineered for 99.99% uptime. |
| Developer Focus | Divided between core AI logic and debugging telephony issues (jitter, packet loss, carrier errors). | Focused entirely on building the best AI logic. We handle the complex voice stream. |
| Support & Expertise | Fragmented support from multiple vendors who don’t see your full technology stack. | Dedicated integration support from voice infrastructure experts who help you succeed from day one. |


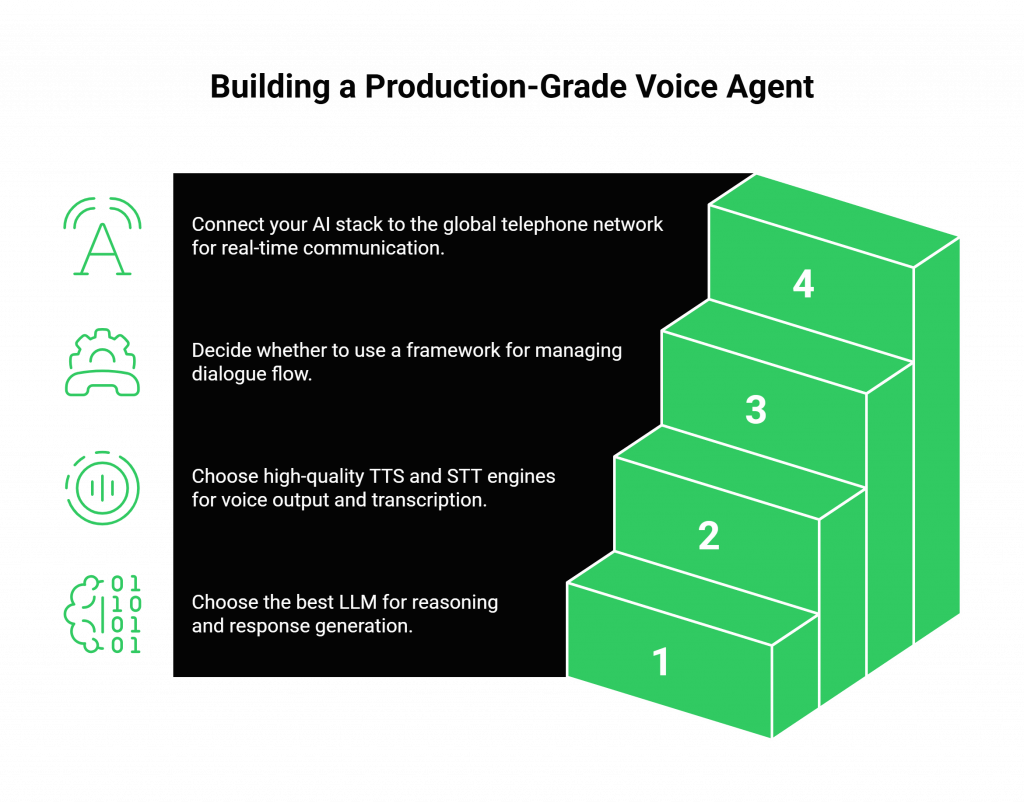

How to Build a Production-Grade Voice Agent: The Modern Stack?

Building a sophisticated voice agent in 2025 is about selecting the most specialised tool for each part of the job.

- Step 1: Define Your AI Logic. Choose the Large Language Model (e.g., GPT-4, Claude 3) that best suits your use case for reasoning and response generation.

- Step 2: Select Your Voice Components. Choose a best-in-class Text-to-Speech engine like ElevenLabs for high-quality voice output and a reliable Speech-to-Text provider for transcription.

- Step 3: Choose Your Agent Framework (Optional). For complex, stateful conversations, use a framework like Vapi.ai to manage the dialogue flow and integrations. For simpler agents, you can build this logic yourself.

- Step 4: Integrate with the FreJun AI Voice Transport Layer. This is the crucial final step. Utilise FreJun’s developer-first SDKs to integrate your entire AI stack with the global telephone network. Our platform will manage the real-time audio streams, ensuring your agent can communicate with users clearly and without delay.

This modular, best-of-breed approach ensures you have the highest quality at every layer of your application, without compromising on reliability or scalability.
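One way to keep these four choices explicit is to record them as a small configuration object, as in the sketch below. The `VoiceStack` class and its field names are hypothetical and exist only to illustrate the layering; the values are plain labels, not SDK identifiers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VoiceStack:
    """Records which provider fills each layer of the stack (labels only)."""
    llm: str                         # Step 1: reasoning and response generation
    tts: str                         # Step 2: text-to-speech
    stt: str                         # Step 2: speech-to-text
    transport: str                   # Step 4: voice transport layer
    framework: Optional[str] = None  # Step 3: optional agent framework

    def missing_layers(self) -> list[str]:
        """Return the required layers that have been left blank."""
        required = {
            "llm": self.llm,
            "tts": self.tts,
            "stt": self.stt,
            "transport": self.transport,
        }
        return [name for name, value in required.items() if not value.strip()]


if __name__ == "__main__":
    stack = VoiceStack(llm="GPT-4", tts="ElevenLabs", stt="Deepgram", transport="FreJun AI")
    print(stack.missing_layers())
```

Leaving `framework` optional mirrors Step 3: simpler agents can run without an orchestration framework, while the other four layers are always required.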

Final Thoughts: Build Your AI, Not Your Plumbing

To build a truly great voice AI application, you need three pillars: an exceptional voice (TTS), intelligent logic (LLM), and a rock-solid infrastructure (the voice transport layer). In the rush to innovate, too many development teams focus on the first two and stumble on the third. They get bogged down in the complexities of real-time media streaming and telephony, and their projects fail to reach production.

The strategic choice in the ElevenLabs vs. Vapi.ai discussion is to recognise that they are powerful components, not a complete solution. By leveraging a specialised voice transport layer like FreJun AI, you de-risk your project and accelerate your time-to-market.

Let us handle the complex voice infrastructure. You focus on what you do best: building an intelligent, engaging, and powerful AI. With our robust API, comprehensive SDKs, and dedicated support, you can launch sophisticated, real-time voice agents in days, not months.


Frequently Asked Questions

Can I use ElevenLabs with FreJun?

Yes, absolutely. FreJun is model-agnostic. Our platform provides the real-time audio stream from the call to your application. You can then process it and pipe the audio output from ElevenLabs’ TTS API directly back into our API to be played for the user.

Can I use FreJun with Vapi.ai?

Yes. FreJun can act as the underlying voice transport layer for a Vapi.ai agent. Instead of connecting Vapi to a traditional telephony provider, you would use FreJun to manage the call and stream the audio to and from your Vapi agent, benefiting from our low-latency infrastructure.

Does FreJun provide its own STT or TTS models?

No, and this is by design. We specialise exclusively in the voice infrastructure and transport layer. This gives our customers the freedom to choose best-in-class AI models for STT (like Deepgram) and TTS (like ElevenLabs) without being locked into a single vendor’s ecosystem.

What is the main difference between ElevenLabs and Vapi.ai?

The simplest distinction is this: ElevenLabs is a voice synthesis specialist focused on creating the highest-quality audio. Vapi.ai is a voice agent development framework focused on orchestrating the components of a scalable conversational agent.