In 2025, developers face an exciting but difficult choice when building voice AI. Do you stitch together best-in-class components like ElevenLabs, famous for voices that sound truly human? Or do you go with a no-code platform like Synthflow.ai, which prioritises speed and workflow automation?

Both approaches have clear strengths, but neither solves the toughest challenge: carrying these AI conversations seamlessly across the global telephone network. This guide compares the two platforms and shows how FreJun provides the missing voice backbone.

Table of contents

- The Developer’s Crossroads: Component vs. Platform

- The Hidden Hurdle: Why Voice AI Is More Than an API

- ElevenLabs.io: The Artisan of AI Voice Synthesis

- Synthflow.ai: The Architect of AI Call Automation

- Head-to-Head: ElevenLabs.io vs. Synthflow.ai Breakdown

- The Infrastructure Gap: Connecting Your AI to the World

- DIY Stack vs. FreJun AI: A Strategic Comparison

- How to Build a Production-Grade Voice Agent in 2025?

- Final Thoughts: Build Your Agent, Not Your Infrastructure

- Frequently Asked Questions

The Developer’s Crossroads: Component vs. Platform

In 2025, the demand for intelligent, human-like voice agents has moved from a novelty to a business necessity. For developers tasked with building these solutions, the market offers a dizzying array of tools, each with a distinct philosophy. At one end of the spectrum, you have highly specialised, best-in-class components. At the other, you find end-to-end, no-code platforms designed for rapid deployment.

This brings developers to a crossroads, captured by the ElevenLabs.io vs. Synthflow.ai debate. Do you choose ElevenLabs, a platform known for expressive, emotionally resonant voice synthesis and granular control over the audio experience? Or do you opt for Synthflow.ai, a no-code builder that lets you design and automate entire call workflows with deep business-system integrations?

This guide will dissect the capabilities of both platforms, helping you understand their ideal use cases. More critically, it will illuminate the one component neither platform provides: the robust, low-latency voice infrastructure required to connect your brilliant AI to the global telephone network.

The Hidden Hurdle: Why Voice AI Is More Than an API

A common pitfall for development teams is underestimating the complexity of real-time voice communication. It’s easy to get excited by a hyper-realistic TTS engine or a slick no-code workflow builder and assume the technical challenges are solved. However, the true bottleneck often lies in the “plumbing”: the network path that streams audio between your user and your application.

Stitching together separate services for telephony, Speech-to-Text (STT), AI logic, and Text-to-Speech (TTS) creates a fragile system that is difficult to scale and maintain. This do-it-yourself (DIY) approach often leads to critical failures:

- Crippling Latency: Every network hop between your telephony provider, your server, and your AI APIs adds milliseconds of delay. These delays accumulate, creating awkward pauses that shatter the illusion of a natural conversation and frustrate users.

- Poor Audio Quality: Real-world phone networks are messy. Jitter, packet loss, and inconsistent audio levels can degrade the input to your STT model, leading to transcription errors and flawed AI responses.

- Infrastructure Management Overhead: Instead of perfecting your AI’s conversational skills or business logic, your most valuable engineering resources are spent debugging SIP trunks, managing carrier relationships, and building redundant systems to ensure uptime. You become telephony experts by necessity, not by choice.
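
To make the audio-quality point above concrete, here is a minimal sketch of a jitter buffer: it holds a few packets, releases them in sequence order, and marks any gap as a lost packet so the caller can conceal it. The names `Packet`, `JitterBuffer`, and `depth` are illustrative assumptions, not any vendor's API, and a production buffer would also handle timestamps and sequence-number wraparound.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(order=True)
class Packet:
    """A hypothetical audio packet; only the sequence number is compared."""
    seq: int
    payload: bytes = field(compare=False, default=b"")


class JitterBuffer:
    """Reorders packets by sequence number and flags gaps as losses."""

    def __init__(self, depth: int = 2) -> None:
        self.depth = depth                  # packets held back before playout
        self._heap: List[Packet] = []
        self._next_seq: Optional[int] = None

    def push(self, packet: Packet) -> List[Optional[Packet]]:
        """Add a packet; return items ready for playout (None = lost packet)."""
        if self._next_seq is not None and packet.seq < self._next_seq:
            return []                       # too late: its slot already played
        heapq.heappush(self._heap, packet)
        ready: List[Optional[Packet]] = []
        while len(self._heap) > self.depth:
            head = heapq.heappop(self._heap)
            if self._next_seq is None:
                self._next_seq = head.seq
            if head.seq < self._next_seq:
                continue                    # duplicate of a played packet
            while self._next_seq < head.seq:
                ready.append(None)          # gap: packet never arrived in time
                self._next_seq += 1
            ready.append(head)
            self._next_seq = head.seq + 1
        return ready


if __name__ == "__main__":
    buf = JitterBuffer(depth=2)
    for seq in (1, 3, 2, 5, 6, 7):          # 2 arrives late, 4 never arrives
        for item in buf.push(Packet(seq)):
            print("lost" if item is None else f"play {item.seq}")
```

A deeper buffer tolerates more reordering but adds delay, which is exactly the trade-off between audio quality and latency described in the list above.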

These challenges demonstrate that a world-class AI agent needs more than just a great brain; it needs a bulletproof nervous system. This is where a dedicated voice transport layer becomes the most critical part of your stack.
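
The latency point above is easy to check with simple arithmetic: delays on a serial path add up. The sketch below sums per-hop delays for one conversational turn and reports how far it exceeds a budget. Every figure, including the 800 ms budget, is an illustrative placeholder rather than a measurement of any provider, and the `Hop` type is a name invented for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Hop:
    """One leg of a conversational turn and its assumed delay."""
    name: str
    latency_ms: float


def total_latency_ms(hops: List[Hop]) -> float:
    """Delays on a serial path simply add up."""
    return sum(hop.latency_ms for hop in hops)


def budget_overrun_ms(hops: List[Hop], budget_ms: float) -> float:
    """Return how far the turn exceeds the budget (0.0 if within it)."""
    return max(0.0, total_latency_ms(hops) - budget_ms)


# Illustrative placeholder figures only; measure your own stack.
TURN = [
    Hop("carrier -> your server", 80),
    Hop("speech-to-text", 200),
    Hop("LLM response", 350),
    Hop("text-to-speech", 150),
    Hop("your server -> carrier", 80),
]

if __name__ == "__main__":
    print(f"total: {total_latency_ms(TURN):.0f} ms")
    print(f"over an 800 ms budget by: {budget_overrun_ms(TURN, 800):.0f} ms")
```

Replacing the placeholders with measured values for each hop shows quickly which leg of the round trip deserves attention first.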

ElevenLabs.io: The Artisan of AI Voice Synthesis

ElevenLabs has firmly established itself as the market leader for developers who need to generate ultra-realistic, expressive, and emotionally nuanced speech. It is a component-focused platform, providing the tools to create the highest-quality voice output imaginable.

Key Strengths and Features

- State-of-the-Art Voice Realism: Leveraging its powerful Eleven v3 model, the platform generates incredibly natural-sounding speech across more than 70 languages. It excels at capturing subtle emotional intonations, making conversations feel authentic.

- Granular Developer Control: ElevenLabs provides rich APIs and SDKs (Python, TypeScript) that allow for deep customization. Developers can use expressive tags like [excited] or [sighs] to inject specific emotions into the dialogue, offering unparalleled control over the audio output.

- A Broad Audio Ecosystem: Beyond its flagship TTS, ElevenLabs offers a suite of powerful tools, including a high-accuracy STT service (Scribe), a voice isolator, AI dubbing capabilities, and even an AI music generator (Eleven Music).

- Enterprise-Ready Security: With SOC 2 and GDPR compliance, ElevenLabs is a secure and reliable choice for developers building scalable, commercial-grade applications.
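
As a rough illustration of the expressive tags mentioned above, the sketch below assembles a script in which some lines carry a bracketed tag such as [excited] or [sighs]. It only builds the text; `with_tag` and `build_script` are hypothetical helpers, and which tags a given model honours, and how the text is submitted, should be checked against the ElevenLabs documentation.

```python
from typing import List, Optional, Tuple


def with_tag(tag: str, line: str) -> str:
    """Prefix a line with a bracketed expressive tag, e.g. '[excited] Hi!'."""
    clean = tag.strip().strip("[]").strip()
    if not clean:
        raise ValueError("tag must not be empty")
    return f"[{clean}] {line.strip()}"


def build_script(lines: List[Tuple[Optional[str], str]]) -> str:
    """Join (tag, text) pairs into one string; a tag of None leaves text as-is."""
    parts = [with_tag(tag, text) if tag else text.strip() for tag, text in lines]
    return " ".join(parts)


if __name__ == "__main__":
    script = build_script([
        ("excited", "We got the contract!"),
        (None, "Now the hard part starts."),
        ("sighs", "Again."),
    ])
    print(script)
```

Keeping tags as data rather than hand-typed strings makes it easier to review or swap the emotional direction of a dialogue without touching the wording.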

Ideal Use Cases

ElevenLabs is the natural choice when the quality and character of the voice itself are paramount. User feedback often echoes this, with comments such as “Nothing is as good as ElevenLabs.” It is best suited for:

- Creating unique, branded voice assistants with a distinct personality.

- Developing applications for media, entertainment, and audiobooks where voice quality is a core feature.

- Any project where developers need fine-grained control over the vocal performance.

Synthflow.ai: The Architect of AI Call Automation

Synthflow.ai operates at the other end of the spectrum. It is an end-to-end, no-code platform designed for building and deploying fully automated AI phone call agents. Its primary focus is on workflow automation rather than the underlying voice synthesis technology.

Key Strengths and Features

- No-Code Simplicity: Synthflow empowers users to build sophisticated voice agents without writing a single line of code. This dramatically accelerates deployment time, with implementations possible in as little as three weeks.

- Deep Business Integrations: The platform’s core strength is its ability to connect with the tools businesses already use. With over 200 integrations, including major CRMs and Zapier, it can automate tasks like lead qualification, appointment scheduling, and customer support follow-ups.

- End-to-End Call Management: Synthflow handles the entire call lifecycle. Features like sentiment analysis, voicemail detection, intelligent routing, and automated SMS follow-ups provide a complete solution for operational call workflows.

- Robust Security and Compliance: Designed for business use, Synthflow is PCI-DSS compliant, encrypts all data, and conducts regular penetration testing, ensuring enterprise-grade security.

Ideal Use Cases

Synthflow is the ideal solution for businesses that need to automate specific, high-volume call-based processes quickly. It shines in:

- Automating inbound and outbound sales calls for lead qualification.

- Providing 24/7 customer support for common queries.

- Handling appointment scheduling and reminders without human intervention.

Head-to-Head: ElevenLabs.io vs. Synthflow.ai Breakdown

The choice between ElevenLabs.io and Synthflow.ai is not about which is “better” but about which fits your specific development needs.

Voice Quality and Realism

Winner: ElevenLabs.io

This is ElevenLabs’ core competency. Its proprietary models deliver unmatched realism and emotional depth. Synthflow provides multi-language support, but its primary value is in workflow automation, not best-in-class voice synthesis.

Developer Control vs. No-Code Speed

Winner: It depends.

For developers who need to build custom logic and integrate voice as a feature within a larger application, ElevenLabs’ rich APIs and SDKs offer superior control and flexibility. For teams that need to deploy a standalone voice agent for a business function rapidly, Synthflow’s no-code platform is significantly faster and requires no specialized development.

Integration Ecosystem

Winner: Synthflow.ai

Synthflow is built for business process automation, and its vast library of 200+ integrations with CRMs and other systems is central to its value proposition. While ElevenLabs has a strong developer ecosystem, Synthflow’s focus is on connecting with end-user business tools.

This analysis of ElevenLabs.io vs. Synthflow.ai makes it clear: they solve different problems. But to solve either problem effectively over a phone line, you need a reliable telephony foundation.

The Infrastructure Gap: Connecting Your AI to the World

Whether you choose the granular control of ElevenLabs or the rapid automation of Synthflow, you are still left with the challenge of connecting your agent to the public telephone network. This is the infrastructure gap that can derail a project.

FreJun AI is purpose-built to fill this gap. We are a developer-first voice transport layer that handles all the complexities of real-time communication, allowing your AI stack to perform at its peak.

FreJun is not a replacement for ElevenLabs or Synthflow; we are the essential enabler for them.

- For ElevenLabs Users: We provide the crystal-clear, low-latency audio stream from any inbound or outbound call. Your application receives this stream, sends it to your STT and LLM, then uses ElevenLabs to generate a response. You pipe that audio back to our API, and we play it to the user instantly, preserving the realism of the voice you so carefully crafted.

- For Synthflow Users: We act as the reliable, high-availability bridge between the telephone network and your Synthflow agent. We ensure every call is connected with pristine quality and that your automated workflows are never compromised by poor connectivity or dropped calls.
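
Piping synthesized audio back into a call usually means sending it in small, fixed-size frames rather than as one large file. The sketch below splits raw PCM into 20 ms frames and pads the final frame with silence. The format used here (8 kHz, 16-bit mono, 20 ms frames) is an assumption based on common telephony practice, not a documented FreJun requirement, and `frame_pcm` is a hypothetical helper.

```python
from typing import List


def frame_pcm(
    pcm: bytes,
    sample_rate_hz: int = 8000,     # assumed narrowband telephony rate
    sample_width_bytes: int = 2,    # assumed 16-bit samples
    frame_ms: int = 20,             # assumed frame duration
) -> List[bytes]:
    """Split mono PCM audio into equal frames, zero-padding the last one."""
    samples_per_frame = sample_rate_hz * frame_ms // 1000
    frame_bytes = samples_per_frame * sample_width_bytes
    frames = [pcm[i:i + frame_bytes] for i in range(0, len(pcm), frame_bytes)]
    if frames and len(frames[-1]) < frame_bytes:
        # Zero bytes are silence in signed 16-bit PCM.
        frames[-1] += b"\x00" * (frame_bytes - len(frames[-1]))
    return frames


if __name__ == "__main__":
    audio = b"\x01\x00" * 350       # 350 samples of dummy audio
    frames = frame_pcm(audio)
    print(len(frames), "frames of", len(frames[0]), "bytes each")
```

Small frames let playback start as soon as the first chunk of speech is available, which helps keep the conversational pause short.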

We handle the plumbing so you can focus on building your AI, not your telephony stack.

DIY Stack vs. FreJun AI: A Strategic Comparison

When building a voice agent, your choice of infrastructure is as important as your choice of AI. Here’s how a DIY approach compares to building on the FreJun AI transport layer.

| Feature / Aspect | The DIY Stack (ElevenLabs/Synthflow + Telephony API) | The FreJun AI Transport Layer |
| --- | --- | --- |
| Call Management | Requires manual integration with a separate, often complex, telephony API for call control. | Unified, developer-first API handles all call logic and real-time media streaming. |
| Audio Quality & Latency | Variable audio quality subject to network jitter. Latency accumulates with each API call. | Engineered end-to-end for low latency. We deliver a crystal-clear, real-time audio stream. |
| Scalability | You are responsible for scaling your telephony infrastructure to handle peak call volumes. | Built on resilient, geographically distributed infrastructure designed for enterprise-scale traffic. |
| Developer Focus | Time is split between perfecting AI logic and troubleshooting telephony issues. | 100% of your time is spent on your application’s core AI and business logic. |
| Reliability & Support | Uptime depends on multiple vendors. Support is fragmented and siloed. | Guaranteed uptime with dedicated support from voice infrastructure experts. |

How to Build a Production-Grade Voice Agent in 2025?

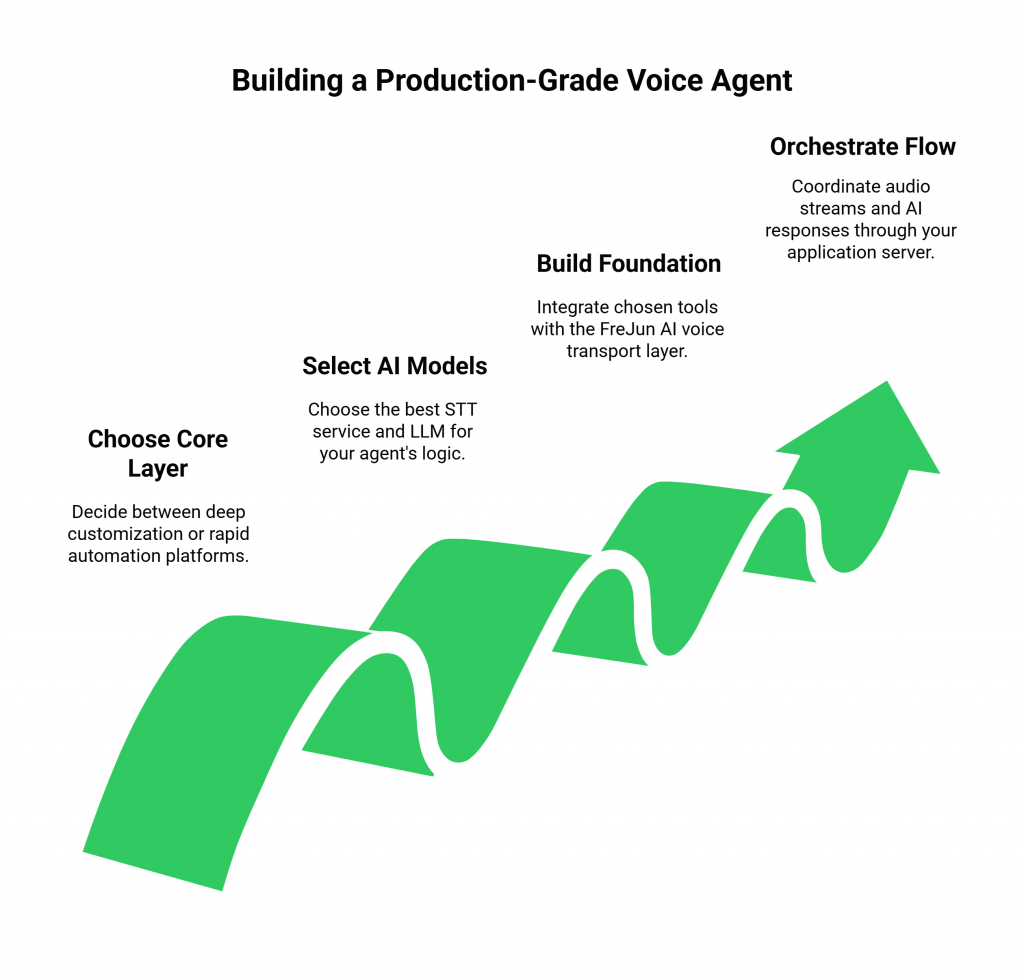

Follow this modern, modular approach to build a voice agent that is both intelligent and reliable.

- Step 1: Choose Your Core Application Layer. Decide if your project requires the deep customization of a tool like ElevenLabs or the rapid workflow automation of a platform like Synthflow.

- Step 2: Select Your Supporting AI Models. If you are using a component-based approach, select your preferred STT service and the Large Language Model (e.g., GPT-4, Claude 3) that will power your agent’s logic.

- Step 3: Build on a Solid Foundation. This is the most critical step. Integrate your chosen tools with the FreJun AI voice transport layer. Use our simple APIs and SDKs to manage all inbound and outbound calls.

- Step 4: Orchestrate the Flow. Your application server will receive the audio stream from FreJun, coordinate with your AI models or Synthflow agent to generate a response, and then use FreJun to send the response audio back to the user.
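
The four steps above can be sketched as a single loop: take caller audio, transcribe it, generate a reply, synthesize speech, and hand the audio back to the transport. This is a minimal sketch in which every stage is a plain callable that you would wire to your own STT, LLM, TTS, and transport clients. The function and parameter names are hypothetical, and the stand-ins in the usage example do no real speech processing.

```python
from typing import Callable, Iterable, Iterator


def run_turns(
    caller_audio: Iterable[bytes],
    transcribe: Callable[[bytes], str],    # your STT client (assumed interface)
    respond: Callable[[str], str],         # your LLM or agent logic
    synthesize: Callable[[str], bytes],    # your TTS client
) -> Iterator[bytes]:
    """Yield one chunk of reply audio for each non-empty caller utterance."""
    for utterance in caller_audio:
        text = transcribe(utterance)
        if not text.strip():
            continue                       # nothing intelligible; skip the turn
        reply = respond(text)
        yield synthesize(reply)


if __name__ == "__main__":
    # Stand-ins that treat bytes as text so the flow can run without services.
    fake_stt = lambda audio: audio.decode("utf-8")
    fake_llm = lambda text: f"You said: {text}"
    fake_tts = lambda text: text.encode("utf-8")

    for audio_out in run_turns([b"hello", b"", b"bye"], fake_stt, fake_llm, fake_tts):
        print(audio_out)
```

Because each stage is injected, a component can be swapped (a different STT service, say) without touching the loop, which is the point of the modular approach described in the steps.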

This layered approach allows you to use the best-in-class tool for each job without compromising on the performance and reliability of the underlying communication layer.

Final Thoughts: Build Your Agent, Not Your Infrastructure

The success of your AI voice agent hinges on the quality of the user’s conversational experience. A brilliant AI with a beautiful voice is useless if the conversation is plagued by lag, dropped words, and failed connections.

The debate over ElevenLabs.io vs. Synthflow.ai highlights the importance of choosing the right tools for your application layer. But the most strategic decision you can make is to abstract away the complexity of the infrastructure layer. Don’t let your project get mired in the messy details of real-time media streaming.

Let FreJun AI handle the voice infrastructure. We provide the performance, reliability, and scalability you need to move from concept to a production-grade voice AI. Focus your energy on creating an intelligent and engaging agent. We will make sure it can talk to the world.

Frequently Asked Questions

Which platform suits a startup depends on its product. If the product is a unique voice experience, choose ElevenLabs for its customisation. If the startup needs to automate a business process such as sales or support calls, Synthflow’s no-code platform will deliver value much faster.

Whether ElevenLabs voices can be used inside a Synthflow agent depends on Synthflow’s integration capabilities. Conceptually, if Synthflow allows custom TTS integrations via API, you could connect it to ElevenLabs. However, Synthflow is designed as a more all-in-one solution.

FreJun does not tie you to a particular model or platform. It specialises exclusively in the voice transport layer. We are model-agnostic and platform-agnostic, giving you the freedom to build your stack with best-in-class components like ElevenLabs without being locked into a single ecosystem.

ElevenLabs is for developers who need deep control over the voice itself and are building custom applications. Synthflow is for businesses that need to automate call-based workflows quickly without coding. They serve very different needs.