Imagine trying to build a modern smartphone app, but first, you have to build a cell tower in your backyard. That sounds ridiculous, right? You would never do that. You would just connect to the existing network.

However, for a long time, building voice applications felt exactly like building that cell tower. Developers had to wrestle with complex hardware, copper wires, and confusing telecom protocols just to make a phone ring.

Now, we are in the era of Artificial Intelligence. Businesses want to build smart agents—bots that can talk, listen, and solve problems like a human. To do this, they need to connect their cutting-edge AI brains to the old-school telephone network.

This is where the voice calling API and SDK come into play.

These tools act as the bridge between the internet and the telephone. They allow developers to build sophisticated voice features without needing a degree in telecommunications engineering. By using an API, you can spin up a voice agent in minutes rather than months.

In this guide, we will explore why these APIs are the essential building blocks for AI integration. We will look at how they support call transcription, how they enable real-time STT SDK integration, and how infrastructure platforms like FreJun AI provide the invisible plumbing that makes these agents sound smart and responsive.

Table of contents

- The Gap Between AI and Telephony

- Why Speed of Development Matters

- How the AI Voice Loop Works

- The Critical Role of Real-Time Data

- Why Infrastructure Is the Secret Sauce

- Feature Spotlight: Barge-In and Interruptibility

- Scalability: Handling One Call or One Million

- Comparison: Building vs. Buying

- Why Developers Prefer FreJun for AI Integration

- Real-World Use Case: The AI Appointment Setter

- Conclusion

- Frequently Asked Questions (FAQs)

The Gap Between AI and Telephony

To understand why we need APIs, we first need to look at the two worlds we are trying to connect.

On one side, you have the world of AI. It runs on the cloud and speaks JSON, REST, and WebSockets. It is fast, flexible, and modern.

On the other side, you have the Public Switched Telephone Network (PSTN). This is the network that connects all the phone numbers in the world. Voice platforms typically reach it through protocols like SIP (Session Initiation Protocol) for call signaling and RTP (Real-time Transport Protocol) for the audio itself. It is reliable, but it is old and rigid.

These two worlds do not speak the same language. You cannot just plug ChatGPT into a phone line. You need a translator.

A voice calling API and SDK is that translator. It takes the digital commands from your AI (like “Say Hello”) and converts them into the audio signals that travel over the phone network. Conversely, it takes the audio from the phone network and converts it into data your AI can understand.
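To make the "audio into data" half of that translation concrete, here is a minimal sketch. It assumes the call audio arrives as G.711 mu-law, a codec widely used on telephone networks; the article does not say which codec any particular provider delivers, and the function names are illustrative only.

```python
import struct

BIAS = 0x84  # 132, the bias used by the G.711 mu-law companding scheme


def ulaw_byte_to_pcm16(u: int) -> int:
    """Expand one G.711 mu-law byte into a signed 16-bit linear sample."""
    u = ~u & 0xFF                  # mu-law bytes are transmitted bit-inverted
    sign = u & 0x80
    exponent = (u >> 4) & 0x07     # which segment of the curve
    mantissa = u & 0x0F            # position inside that segment
    magnitude = (((mantissa << 3) + BIAS) << exponent) - BIAS
    return -magnitude if sign else magnitude


def ulaw_to_pcm16(payload: bytes) -> bytes:
    """Convert a mu-law payload into little-endian 16-bit PCM bytes."""
    samples = [ulaw_byte_to_pcm16(b) for b in payload]
    return struct.pack(f"<{len(samples)}h", *samples)
```

At 8 kHz, a 20 ms packet holds 160 mu-law bytes, which this sketch expands into 320 bytes of PCM that a speech-to-text engine can usually accept.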

Why Speed of Development Matters

In the tech world, speed is everything. If you have a great idea for an AI receptionist, you want to launch it today, not next year.

Building a telephony stack from scratch is incredibly difficult. You have to manage carrier relationships, handle server scaling, deal with firewalls, and optimize audio codecs. It is a massive headache.

Using an API removes most of this work. It turns voice capabilities into “Lego blocks.”

- Need to make a call? There is a function for that.

- Need to record audio? There is a function for that.

- Need to stream audio to an AI? There is a function for that.

This allows developers to focus on the unique logic of their AI agent rather than fixing plumbing issues.
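The three building blocks above might look something like this in application code. This is a sketch only: `VoiceClient`, its method names, and `FakeVoiceClient` are hypothetical and do not describe FreJun's or any other provider's real SDK.

```python
import itertools
from dataclasses import dataclass, field
from typing import Iterator, Protocol


class VoiceClient(Protocol):
    """Hypothetical shape of a voice SDK client (not a real provider API)."""

    def make_call(self, to: str, from_: str) -> str: ...
    def start_recording(self, call_id: str) -> None: ...
    def start_stream(self, call_id: str, ws_url: str) -> None: ...


@dataclass
class FakeVoiceClient:
    """In-memory stand-in so the flow can be exercised without a provider."""

    actions: list = field(default_factory=list)
    _ids: Iterator[int] = field(default_factory=lambda: itertools.count(1))

    def make_call(self, to: str, from_: str) -> str:
        call_id = f"call-{next(self._ids)}"
        self.actions.append(("call", call_id, to, from_))
        return call_id

    def start_recording(self, call_id: str) -> None:
        self.actions.append(("record", call_id))

    def start_stream(self, call_id: str, ws_url: str) -> None:
        self.actions.append(("stream", call_id, ws_url))


def launch_agent_call(client: VoiceClient, to: str, from_: str, ws_url: str) -> str:
    """Place a call, record it, and stream its audio to the AI backend."""
    call_id = client.make_call(to, from_)
    client.start_recording(call_id)
    client.start_stream(call_id, ws_url)
    return call_id
```

Coding against a small interface like this keeps the agent logic testable and makes it easier to swap providers later.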

According to a market analysis report, the global voice recognition market is expected to grow at a compound annual growth rate of 17.2% from 2020 to 2027. Companies that can deploy voice solutions quickly using APIs will capture this growing market share.


How the AI Voice Loop Works

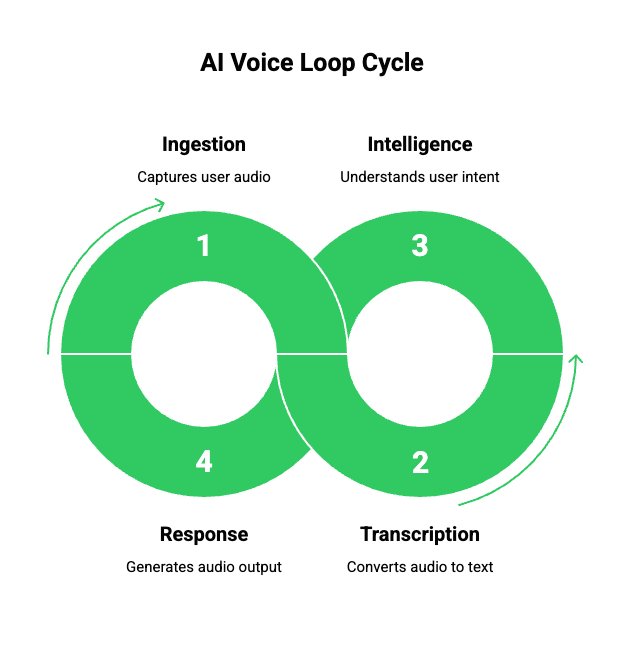

To see the value of the API, let us walk through the lifecycle of a single interaction with an AI agent. It is a loop of four steps: Listen, Transcribe, Think, Speak.

- Ingestion (The API): The user speaks into their phone. The voice calling API and SDK captures this audio in high definition.

- Transcription (Voice to Text): The audio is streamed to a call transcription API. This converts the sound waves into text.

- Intelligence (The Brain): The text is sent to the Large Language Model (LLM). The AI understands the user said “I want to book a table.”

- Response (The Output): The AI generates a text response (“What time?”). A Text-to-Speech (TTS) engine turns it back into audio, and the API plays it to the caller.
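The four steps above can be sketched as a single function that wires three pluggable stages together. The stage functions here are stubs invented for illustration; in a real agent they would wrap an STT service, an LLM, and a TTS engine of your choice.

```python
from typing import Callable

Transcriber = Callable[[bytes], str]   # audio in, text out (STT)
Thinker = Callable[[str], str]         # user text in, reply text out (LLM)
Speaker = Callable[[str], bytes]       # reply text in, audio out (TTS)


def run_turn(caller_audio: bytes, transcribe: Transcriber,
             think: Thinker, speak: Speaker) -> bytes:
    """Run one Listen -> Transcribe -> Think -> Speak turn."""
    text = transcribe(caller_audio)
    reply = think(text)
    return speak(reply)


# Stub stages so the loop can run without any external service.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")       # pretend the "audio" is already text


def fake_think(text: str) -> str:
    if "book a table" in text.lower():
        return "What time?"
    return "Sorry, could you repeat that?"


def fake_speak(reply: str) -> bytes:
    return reply.encode("utf-8")       # pretend the bytes are synthesized audio


reply_audio = run_turn(b"I want to book a table",
                       fake_transcribe, fake_think, fake_speak)
```

Because each stage is just a callable, any one of them can be replaced without touching the others.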

FreJun AI specializes in optimizing this loop. We handle the complex voice infrastructure so you can focus on building your AI. By providing low-latency media streams, we keep the gap between the user finishing a sentence and the AI starting its reply as short as possible.

The Critical Role of Real-Time Data

For an AI agent to feel human, it cannot wait until the call is over to understand what happened. It needs to understand now.

This is why real-time STT SDK integration is vital.

Legacy systems would record a call and transcribe it later. That is useful for quality assurance, but useless for a conversation. A modern Voice API supports “media forking” or “streaming.”

This means that as the audio packets arrive from the phone network, the API instantly pushes them to your transcription engine via a WebSocket. This happens in milliseconds.

If you were trying to build this yourself, you would have to manage packet loss, jitter, and synchronization. A robust API handles all of this buffering for you, delivering a clean audio stream that your speech-to-text engine, and in turn your AI, can consume immediately.
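To give a sense of the buffering work involved, here is a simplified sketch of a jitter buffer that reorders packets by sequence number and skips a gap once enough later packets have piled up. The class name and the `max_wait` policy are assumptions for illustration; production buffers also deal with timestamps, sequence wrap-around, and adaptive delay.

```python
import heapq


class JitterBuffer:
    """Reorder audio packets by sequence number before handing them on."""

    def __init__(self, max_wait: int = 3):
        self._heap: list = []
        self._next_seq = 0
        self._max_wait = max_wait  # packets to hold before giving up on a gap

    def push(self, seq: int, payload: bytes) -> list:
        """Add one packet; return the payloads that are now ready, in order."""
        if seq >= self._next_seq:          # drop packets that arrive too late
            heapq.heappush(self._heap, (seq, payload))
        ready = []
        while self._heap:
            head_seq, head_payload = self._heap[0]
            if head_seq < self._next_seq:  # duplicate of something already sent
                heapq.heappop(self._heap)
            elif head_seq == self._next_seq:
                heapq.heappop(self._heap)
                ready.append(head_payload)
                self._next_seq += 1
            elif len(self._heap) > self._max_wait:
                self._next_seq = head_seq  # treat the missing packets as lost
            else:
                break                      # keep waiting for the missing packet
        return ready
```

For example, if packets 0, 2, 1 arrive in that order, the buffer releases packet 0 immediately, holds packet 2, and releases 1 and 2 together once packet 1 shows up.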

Why Infrastructure Is the Secret Sauce

Many developers make the mistake of thinking all APIs are the same. They think, “Code is code.” But in the world of voice, the code is only as good as the network it runs on.

Voice is sensitive. If an email arrives two seconds late, nobody cares. If a voice arrives two seconds late, the conversation is ruined.

This is where FreJun AI stands out. We are not just a software wrapper; we are a voice transport layer.

- FreJun Teler: Our telephony arm provides elastic SIP trunking. This ensures that your connection to the global phone network is robust and scalable.

- Low Latency Routing: We route calls through the fastest possible paths to minimize delay.

When integrating an AI agent, you need an API provider that guarantees high-quality audio. If the audio is fuzzy or choppy, the call transcription API will make mistakes. It might hear “cancel my order” as “candle my border.” The AI will get confused, and the user will get frustrated.

Feature Spotlight: Barge-In and Interruptibility

One of the hardest things to get right in AI voice agents is “barge-in.”

Imagine you are talking to a human. They start explaining something you already know. You say, “Yeah, I got it, but what about the price?” The human stops talking immediately and answers your new question.

Robots usually don’t do this. They keep talking until they finish their script. It is annoying.

To fix this, you need a Voice API that supports bi-directional streaming. The API needs to “listen” even while it is “speaking.” If it detects the user’s voice, it must send a signal to cut off the audio playback instantly.
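A minimal sketch of that cut-off logic follows. It assumes caller audio arrives as 16-bit little-endian PCM frames and uses a plain energy threshold in place of a real voice activity detector; `BargeInDetector`, the threshold, and the `stop_playback` callback are all hypothetical.

```python
import math
import struct
from typing import Callable


def rms(frame: bytes) -> float:
    """Root-mean-square level of a little-endian 16-bit PCM frame."""
    count = len(frame) // 2
    if count == 0:
        return 0.0
    samples = struct.unpack(f"<{count}h", frame[: count * 2])
    return math.sqrt(sum(s * s for s in samples) / count)


class BargeInDetector:
    """Stop agent playback when the caller talks over it."""

    def __init__(self, stop_playback: Callable[[], None],
                 threshold: float = 500.0, min_frames: int = 3):
        self.stop_playback = stop_playback
        self.threshold = threshold    # assumed level that counts as speech
        self.min_frames = min_frames  # consecutive loud frames required
        self.speaking = False
        self._loud = 0

    def start_speaking(self) -> None:
        """Call this when the agent begins playing audio."""
        self.speaking = True
        self._loud = 0

    def on_caller_frame(self, frame: bytes) -> bool:
        """Feed one inbound frame; return True if playback was interrupted."""
        if not self.speaking:
            return False
        self._loud = self._loud + 1 if rms(frame) >= self.threshold else 0
        if self._loud >= self.min_frames:
            self.speaking = False
            self.stop_playback()
            return True
        return False
```

Requiring several consecutive loud frames is a deliberate choice: it keeps a cough or a line click from cutting the agent off mid-sentence.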

FreJun’s infrastructure supports these advanced media handling capabilities, allowing developers to build agents that are polite and responsive, not robotic and rude.

Scalability: Handling One Call or One Million

AI agents are often used for high-volume tasks.

- An airline dealing with thousands of cancelled flights.

- A retail store running a Black Friday sale.

- A political campaign calling millions of voters.

If you build your own server rack, you have a limit. When you hit that limit, the system crashes.

Using a cloud-based voice calling API and SDK gives you “elasticity.” This is a key feature of FreJun Teler. Whether you have ten concurrent calls or ten thousand, the API scales up to handle the load. You do not have to buy more hardware. You just pay for the minutes you use.
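Elastic telephony capacity helps most when the application on the other end of the media streams can also serve many calls at once. As a rough sketch, assuming each call is handled by a coroutine, `asyncio` can run many of them concurrently while a semaphore caps how many hit downstream services at the same moment. `handle_call` here is a placeholder, not a real conversation handler.

```python
import asyncio


async def handle_call(call_id: int) -> str:
    """Placeholder for one full conversation with a caller."""
    await asyncio.sleep(0.01)          # stands in for streaming and AI work
    return f"call-{call_id}: done"


async def handle_many(total_calls: int, max_concurrent: int) -> list:
    """Serve many calls concurrently, capping simultaneous work."""
    gate = asyncio.Semaphore(max_concurrent)

    async def guarded(call_id: int) -> str:
        async with gate:               # wait here if the cap is reached
            return await handle_call(call_id)

    return await asyncio.gather(*(guarded(i) for i in range(total_calls)))


results = asyncio.run(handle_many(total_calls=50, max_concurrent=10))
```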


Comparison: Building vs. Buying

Here is a look at the difference between building your own telephony stack and using an API integration.

| Feature | Building From Scratch | Using Voice Calling API |
| --- | --- | --- |
| Setup Time | 6 to 12 months | Days or Weeks |
| Upfront Cost | High (Servers, Licenses) | Low (Pay as you go) |
| Maintenance | High (Patching, Upgrades) | Zero (Handled by provider) |
| Scalability | Limited by hardware | Infinite (Cloud scale) |
| Audio Quality | Hard to optimize | Enterprise grade |
| AI Integration | Complex custom code | Simple WebSocket streams |
| Global Reach | Requires local carriers | Global out of the box |

Why Developers Prefer FreJun for AI Integration

Developers are pragmatic. They want tools that work. FreJun is built with a “developer-first” mindset.

Model Agnostic

We do not force you to use a specific AI model. You can use OpenAI, Anthropic, or your own custom Llama model. We just provide the clean pipe to get the audio there.

Comprehensive SDKs

We provide Software Development Kits (SDKs) for major programming languages. This means you can integrate voice features into your Python, Node.js, or Go applications using standard functions and libraries.

Security

AI interactions often involve sensitive data (credit cards, health info). FreJun ensures that the voice data is encrypted in transit, providing a secure tunnel between the user and your AI.
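On the developer's side, encryption in transit usually means connecting to media streams over TLS only. The sketch below uses Python's standard `ssl` module; the helper names and the policy of rejecting plain `ws://` URLs are assumptions for illustration, not a description of FreJun's SDK.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client TLS context suitable for secure WebSocket connections."""
    ctx = ssl.create_default_context()   # verifies certificates and hostnames
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def require_secure_url(url: str) -> str:
    """Reject media stream URLs that are not TLS-protected WebSockets."""
    if not url.startswith("wss://"):
        raise ValueError(f"refusing unencrypted stream URL: {url}")
    return url
```

A context like this can typically be passed to a WebSocket client library when opening the stream.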

Ready to start building your own AI voice agent? Sign up for FreJun AI to access our SDKs and API keys.

Real-World Use Case: The AI Appointment Setter

Let us look at a practical example of how these APIs come together.

A dental clinic wants an AI to handle appointments.

- Call Incoming: A patient calls. FreJun Teler accepts the call via SIP trunking.

- Streaming: The FreJun API starts a media stream to the developer’s server.

- Transcribing: The developer uses a real-time STT SDK to turn “I have a toothache” into text.

- Booking Logic: The AI checks the clinic’s calendar API. It sees a slot at 2 PM.

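- Speaking: The AI generates the reply, “We have an opening at 2 PM. Do you want it?”, and a Text-to-Speech engine turns it into audio for the caller.

- Confirmation: The patient says “Yes.” The STT stream transcribes this, the AI recognizes it as a confirmation, and it confirms the booking.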

Without the Voice API, the developer would have to figure out how to answer the phone line, how to decode the audio packets, and how to stream them without lag. With the API, they just write the booking logic.
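The booking logic itself can be quite small. Here is a rough sketch, assuming the clinic's free slots are already available as a list of times and that a simple keyword check is good enough to spot a "yes"; all function names are hypothetical, and a real agent would call the clinic's calendar API and let the LLM interpret the reply.

```python
from datetime import time
from typing import List, Optional


def find_slot(free_slots: List[time], earliest: time) -> Optional[time]:
    """Return the first free slot at or after `earliest`, if any."""
    later = sorted(s for s in free_slots if s >= earliest)
    return later[0] if later else None


def format_slot(slot: time) -> str:
    """Format a time the way an agent would say it, e.g. '2 PM'."""
    hour12 = slot.hour % 12 or 12
    suffix = "AM" if slot.hour < 12 else "PM"
    minutes = f":{slot.minute:02d}" if slot.minute else ""
    return f"{hour12}{minutes} {suffix}"


def offer(slot: Optional[time]) -> str:
    """Text the agent should speak for the proposed slot."""
    if slot is None:
        return "Sorry, we have no openings today."
    return f"We have an opening at {format_slot(slot)}. Do you want it?"


def is_yes(transcript: str) -> bool:
    """Very rough check that the caller's first word is an agreement."""
    words = transcript.lower().split()
    return bool(words) and words[0].strip(".,!?") in {"yes", "yeah", "sure", "ok", "okay"}


def confirm_booking(transcript: str, slot: time, bookings: List[time]) -> bool:
    """Record the booking if the caller agreed; report whether it was booked."""
    if is_yes(transcript):
        bookings.append(slot)
        return True
    return False
```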


Conclusion

The rise of AI agents is transforming how businesses communicate. But an AI without a voice is just a chatbot. To give your AI a voice, you need a robust connection to the telephone world.

Voice calling API and SDK solutions provide that connection. They abstract away the complexity of telecom, allowing you to focus on the intelligence of your agent. They provide the speed, scalability, and clarity required for natural human-machine conversation.

However, the quality of your agent depends on the quality of the infrastructure. You need a partner that understands the nuances of real-time media. FreJun AI provides the high-performance transport layer that modern AI agents demand. With FreJun Teler handling the scale and our low-latency APIs handling the stream, you can build voice experiences that truly delight your users.

Want to discuss your specific AI integration needs? Schedule a demo with our team at FreJun Teler and let us help you build the future of voice.


Frequently Asked Questions (FAQs)

What is a voice calling API?

A voice calling API is a software interface that allows developers to add voice calling capabilities to their applications.

Why do AI agents need a voice API?

AI agents live on servers. To talk to humans on phones, they need a gateway to the telephone network. The voice API acts as this gateway, converting digital AI responses into audio that can be heard on a phone.

What is the difference between an API and an SDK?

An API (Application Programming Interface) is the set of rules for connecting to the service. An SDK (Software Development Kit) is a package of tools and code libraries that makes using that API easier for a specific programming language (like Python or JavaScript).

What is a call transcription API?

It is a service that converts spoken audio from a phone call into written text.

What is real-time STT?

Real-time Speech-to-Text (STT) converts speech to text instantly while the person is still talking. This allows the AI to understand and respond immediately, creating a natural flow of conversation.

Does FreJun provide the AI model?

No. FreJun provides the voice infrastructure (the pipe). We are model-agnostic, meaning you can connect our voice stream to any AI model you choose, such as GPT-4, Claude, or a custom model.

What is FreJun Teler?

FreJun Teler is the telephony component of our platform. It offers elastic SIP trunking, which provides the actual phone lines and connectivity to the global telephone network, ensuring your calls always connect.

Can the API handle both inbound and outbound calls?

Yes. You can program the API to accept incoming calls (like a customer support line) or initiate outbound calls (like an appointment reminder system).