In the fast-moving world of software development, three years is an eternity. The technologies, architectures, and user expectations that define the landscape can shift dramatically in that time. This is especially true in the realm of voice, where the explosive rise of Large Language Models (LLMs) is acting as a powerful catalyst, rapidly accelerating the evolution of what is possible.

Today's voice API for developers is a powerful tool, but the platform you choose is not just for the application you are building now; it is a bet on the applications you will build in the future. So, what should a forward-thinking developer expect from the best voice API in 2026?

The next gen voice platform will be defined by a profound shift: a move from being a passive “pipe” that simply connects a call, to an active, intelligent, and deeply integrated co-processor in the real-time conversational workflow. It will be a platform that is not just “AI-ready,” but “AI-native.”

For developers, this means a new set of expectations and a new universe of capabilities. This article explores the key trends and the specific features that will define a future-ready voice API.


The Foundational Shift: From a Connectivity Tool to an Intelligence Enabler

To understand the future, we must first recognize the fundamental evolution in the role of the voice API.

- Generation 1 (The Past): The first-generation voice API was a connectivity tool. Its primary job was to abstract away the complexity of the PSTN and allow a developer to programmatically make and receive a call. It was a revolution, but its focus was on the connection.

- Generation 2 (The Present): The current generation is a real-time media platform. Its defining feature is the ability to provide a live, low-latency stream of the call’s audio. This is the key that has unlocked the first wave of truly interactive voice AI.

- Generation 3 (The Future): The next gen voice platform will be an intelligence enabler. It will not just transport the data of the conversation; it will actively participate in understanding and optimizing it in real-time, at the network edge.

This evolution is being driven by the relentless demand for more natural, more responsive, and more human-like AI conversations.


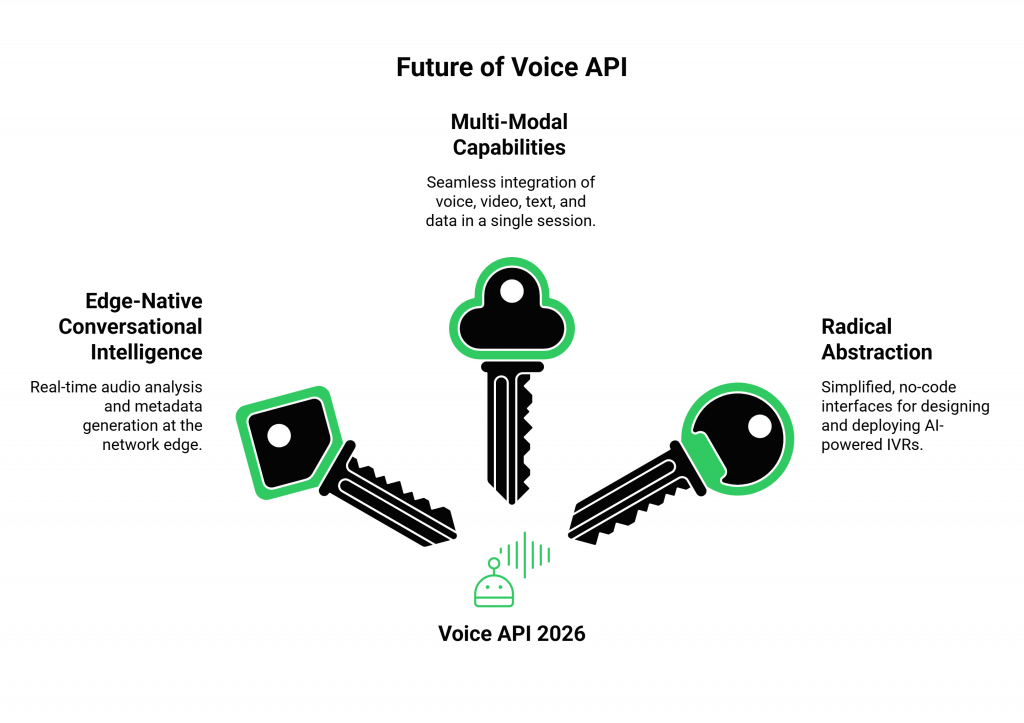

What Are the Key Features of the Best Voice API in 2026?

The voice API for developers in 2026 will be defined by a set of advanced, AI-infused capabilities that run at the infrastructure level, before the call’s data even reaches your application’s core logic.

Feature 1: Edge-Native, Real-Time Conversational Intelligence

The battle against latency is relentless. The future-ready voice API will push more and more of the real-time conversational mechanics to the network edge.

- What to Expect: The API itself will be able to perform basic, real-time analysis on the audio stream. It will not just detect that someone is speaking; it will be able to provide your application with a real-time stream of metadata about that speech. This will include:

  - Real-Time Sentiment Analysis: The API will be able to analyze the user’s tone of voice and provide a live “sentiment score” (e.g., angry, neutral, happy).

  - Advanced End-of-Speech Detection: It will use AI to more intelligently determine when a user has truly finished their thought, reducing the chance of the AI interrupting them.

  - Proactive Packet Loss Concealment (PLC): It will use AI to predict and intelligently fill in missing audio packets, dramatically improving the perceived audio quality on poor networks.
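To make the idea of a metadata stream concrete, here is a minimal sketch of how an application might consume such events to decide when it is safe to respond. No current API is being described: the `SpeechMetadata` shape, its field names, and the `turns_ready` helper are all hypothetical, and the thresholds are illustrative only.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class SpeechMetadata:
    """One hypothetical metadata event delivered alongside the audio stream."""
    timestamp_ms: int
    sentiment: str             # e.g. "angry", "neutral", "happy"
    end_of_speech_prob: float  # assumed 0.0..1.0 confidence that the turn is over
    concealed_packets: int     # assumed count of packets filled in by PLC


def turns_ready(
    events: Iterable[SpeechMetadata],
    threshold: float = 0.8,
    min_consecutive: int = 2,
) -> Iterator[int]:
    """Yield the timestamp at which the application may start responding.

    Several consecutive high-confidence events are required, so that a
    brief mid-sentence pause is not mistaken for the end of the turn.
    """
    streak = 0
    for event in events:
        if event.end_of_speech_prob >= threshold:
            streak += 1
            if streak == min_consecutive:
                yield event.timestamp_ms
        else:
            streak = 0  # the caller resumed speaking; start counting again


if __name__ == "__main__":
    probs = [0.9, 0.3, 0.85, 0.9, 0.95]
    stream = [
        SpeechMetadata(i * 100, "neutral", p, 0) for i, p in enumerate(probs)
    ]
    print(list(turns_ready(stream)))
```

Requiring a short streak of confident events trades a little extra response delay for fewer interruptions; a real platform would presumably tune that balance itself.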

Feature 2: Deeply Integrated, Multi-Modal Capabilities

The conversation of the future is not just about voice. It is about a seamless blend of voice, video, text, and data.

- What to Expect: The best voice API in 2026 will be a unified, multi-modal API. A single API call will be able to initiate a session that can start as a voice call, seamlessly escalate to a video call, and allow for the real-time sharing of on-screen data or text messages, all within the same logical session. This will break down the silos that currently exist between different communication channels.
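One way to picture "the same logical session" is a single session object whose identifier stays fixed while modalities are switched on and off. The sketch below is purely illustrative: `Session`, `Modality`, and `start_session` are hypothetical names, not part of any existing SDK.

```python
import uuid
from dataclasses import dataclass, field
from enum import Enum


class Modality(Enum):
    VOICE = "voice"
    VIDEO = "video"
    TEXT = "text"


@dataclass
class Session:
    """A hypothetical logical session that outlives any single modality."""
    session_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    active: set = field(default_factory=set)
    events: list = field(default_factory=list)

    def enable(self, modality: Modality) -> None:
        # Enabling an already-active modality is a no-op.
        if modality not in self.active:
            self.active.add(modality)
            self.events.append(("enabled", modality.value))

    def disable(self, modality: Modality) -> None:
        if modality in self.active:
            self.active.remove(modality)
            self.events.append(("disabled", modality.value))


def start_session(initial: Modality = Modality.VOICE) -> Session:
    """Create a session that begins in a single modality (voice by default)."""
    session = Session()
    session.enable(initial)
    return session


if __name__ == "__main__":
    call = start_session()
    call.enable(Modality.VIDEO)  # escalate without creating a new session
    print(call.session_id, sorted(m.value for m in call.active))
```

Because escalation only changes the set of active modalities, anything keyed on `session_id` (transcripts, context, analytics) would carry across the switch.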

Feature 3: Radical Abstraction and “No-Code” Workflows

As the underlying technology becomes more powerful, the interface for using it will become simpler.

- What to Expect: While the low-level API will always be there for developers who need maximum control, the next gen voice platform will also offer a higher level of abstraction. This will include sophisticated, “no-code” or “low-code” visual workflow builders. A product manager or a business analyst will be able to design, build, and deploy a sophisticated AI-powered IVR by dragging and dropping components on a canvas, without writing a single line of code.
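A visual workflow builder has to store the canvas in some form; one plausible representation is a declarative graph that a small engine walks at call time. The sketch below assumes that design. The `FLOW` structure, its keys, and `run_flow` are hypothetical and exist only to illustrate the idea.

```python
# A hypothetical IVR flow, as a visual builder might serialize it.
FLOW = {
    "start": {
        "say": "Thanks for calling. Billing or support?",
        "next": {"billing": "billing", "support": "support"},
    },
    "billing": {"say": "Connecting you to billing.", "next": {}},
    "support": {"say": "Connecting you to support.", "next": {}},
}


def run_flow(flow, caller_intents, start="start"):
    """Walk the flow and return the prompts spoken, in order.

    An unrecognized intent repeats the current prompt; a node with no
    outgoing branches ends the flow.
    """
    node_id = start
    spoken = [flow[node_id]["say"]]
    for intent in caller_intents:
        branches = flow[node_id]["next"]
        if not branches:
            break  # terminal node reached
        if intent not in branches:
            spoken.append(flow[node_id]["say"])  # re-prompt the caller
            continue
        node_id = branches[intent]
        spoken.append(flow[node_id]["say"])
    return spoken


if __name__ == "__main__":
    for line in run_flow(FLOW, ["weather", "support"]):
        print(line)
```

Keeping the flow as plain data is what would let a non-developer edit it on a canvas while developers keep control of the engine underneath.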

This table provides a summary of this evolutionary path.

| Feature Area | The Voice API of Today | The Best Voice API of 2026 |
| --- | --- | --- |
| AI Integration | Provides a raw, real-time media stream to your AI. | Provides a “smart” stream with real-time metadata (sentiment, etc.) and performs AI-powered quality enhancement. |
| Modality | Primarily single-modal (voice-centric). | Natively multi-modal (seamlessly blends voice, video, and text). |
| Developer Interface | Primarily code-based (APIs and SDKs). | Offers both deep code-based control and high-level, no-code visual workflow builders. |
| Network Intelligence | Intelligently routes calls for low latency. | Proactively uses AI to optimize the network and the audio stream in real-time. |

Ready to build on a platform that is architected for these future trends? Sign up for FreJun AI


How Will This Shape the Applications We Build?

These new capabilities in the voice API for developers will unlock a new generation of applications that are more intelligent, more empathetic, and more deeply integrated into our lives.

The Rise of the Hyper-Empathetic AI Agent

With real-time sentiment analysis delivered by the API, an AI agent will be able to do more than just understand a user’s words; it will be able to understand their feelings. If the API signals that a user’s tone is becoming frustrated, the AI’s LLM can be programmed to dynamically switch its own strategy, perhaps using a more apologetic tone or proactively offering to escalate to a human agent.
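As a rough illustration of that strategy switch, the sketch below keeps a short rolling window of sentiment labels and maps the number of negative readings to a response strategy. The label names, the window size, the thresholds, and the `EmpathyPolicy` class are assumptions made for the example, not values from any platform.

```python
from collections import deque

# Labels treated as negative; assumed for illustration.
NEGATIVE_LABELS = {"angry", "frustrated"}


class EmpathyPolicy:
    """Pick a response strategy from recent sentiment readings."""

    def __init__(self, window: int = 5, soften_at: int = 2, escalate_at: int = 4):
        self.recent = deque(maxlen=window)  # oldest readings fall off automatically
        self.soften_at = soften_at
        self.escalate_at = escalate_at

    def update(self, label: str) -> str:
        """Record one sentiment label and return the strategy to use next."""
        self.recent.append(label)
        negatives = sum(1 for item in self.recent if item in NEGATIVE_LABELS)
        if negatives >= self.escalate_at:
            return "offer_human_agent"
        if negatives >= self.soften_at:
            return "apologetic_tone"
        return "default_tone"


if __name__ == "__main__":
    policy = EmpathyPolicy()
    for label in ["neutral", "angry", "angry", "angry", "angry"]:
        print(label, "->", policy.update(label))
```

The returned strategy could then be passed to the LLM as part of its instructions for the next turn. Using a window rather than the latest reading alone avoids overreacting to a single noisy score.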

The Seamless “See What I See” Support Call

The multi-modal API will revolutionize technical support. A customer can start by describing their problem on a voice call. If they are struggling to explain it, the AI (or a human agent) can use the API to instantly escalate the session to a video call, allowing the customer to use their phone’s camera to “show” the support agent the problem.

What is FreJun AI’s Vision for the Future-Ready Voice API?

At FreJun AI, we are not just building for the present; we are architecting for this future. Our vision for the next gen voice platform is built on the same core principles that will define the best voice API in 2026.

- An Intelligent Edge: Our globally distributed Teler engine is the perfect foundation for running these next-generation, real-time AI models at the edge, as close to the user as possible.

- A Commitment to Abstraction: We are relentlessly focused on abstracting away the underlying complexity. Our goal is to make the incredibly powerful capabilities of real-time communication accessible to every developer, regardless of their background in telecommunications.

- An Open and Flexible Ecosystem: We will always be a model-agnostic platform. We believe that the future of AI is too big and is moving too fast for any single company to own. Our job is to be the open, high-performance bridge that connects your application to the very best of this ever-expanding universe of innovation.
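In application code, a model-agnostic design usually comes down to depending on a small interface rather than on a specific vendor SDK. The following sketch shows that pattern with Python's `typing.Protocol`. The `ConversationModel` interface and the two stand-in models are hypothetical; they do not represent FreJun's API or any real provider.

```python
from typing import Protocol


class ConversationModel(Protocol):
    """The only contract the call-handling code depends on."""

    def reply(self, transcript: str) -> str: ...


class EchoModel:
    """Stand-in for one provider's model."""

    def reply(self, transcript: str) -> str:
        return f"You said: {transcript}"


class CannedModel:
    """Stand-in for a different provider's model."""

    def __init__(self, answer: str) -> None:
        self.answer = answer

    def reply(self, transcript: str) -> str:
        return self.answer


def handle_turn(model: ConversationModel, transcript: str) -> str:
    """Produce the agent's reply for one caller turn, whichever model is plugged in."""
    return model.reply(transcript.strip())


if __name__ == "__main__":
    for model in (EchoModel(), CannedModel("Let me check that for you.")):
        print(handle_turn(model, "  where is my order  "))
```

Swapping providers then means writing one new adapter class; `handle_turn` and the rest of the call flow stay unchanged.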


Conclusion

The voice API for developers is at a major inflection point. It is rapidly evolving from a simple tool for making and receiving calls into a sophisticated, intelligent, and multi-modal platform for building the next generation of human-to-machine communication.

The best voice API in 2026 will not be a passive participant, but an active intelligence enabler in the conversational workflow.

For developers and businesses looking to stay ahead of the curve, the key is to choose a platform that is not just building for the features of today, but is architecting for the profound and exciting possibilities of tomorrow. The future of voice is intelligent, it is multi-modal, it is simple to build, and it is coming faster than you think.

Want to have a strategic conversation about your company’s long-term voice roadmap and see how our platform is architected to support the future of AI? Schedule a demo for FreJun Teler.


Frequently Asked Questions (FAQs)

The most significant trend is the shift from a passive “connectivity tool” to an active “intelligence enabler” that performs real-time analysis at the network edge.

A multi-modal API can manage a communication session that seamlessly blends different modes, like voice, video, and text, within a single, unified experience.

A future-ready voice API is one that is built on a flexible, model-agnostic, and edge-native architecture, allowing it to easily adopt and integrate new AI and communication technologies.

The best voice API in 2026 will use a globally distributed, edge-native architecture to process audio as close to the user as possible, which is one of the most effective ways to minimize network latency.

This is a future capability where the voice platform itself can analyze the user’s tone of voice and provide a live “sentiment score” to your application via its API.

No. A key trend is “radical abstraction.” The next gen voice platform will provide these advanced AI capabilities through very simple, high-level APIs or even no-code visual workflow builders.

A model-agnostic approach means you are not locked into a single AI provider. This gives you the freedom to always use the best-in-class AI models as they are released.