In the world of outbound communication, there are “campaigns,” and then there are “events.” A campaign might be a few thousand calls spread over a week. An event is a hundred thousand calls that absolutely must be delivered in the next ten minutes. It could be an urgent fraud alert to a bank’s entire customer base, a last-minute flight cancellation notification from an airline, or a critical public safety announcement from a municipality.

In these moments, the ability of your voice infrastructure to handle a massive, instantaneous, and unpredictable load is not just a feature; it is the entire point. This is the ultimate stress test, and it is a test that traditional, hardware-based dialing systems are guaranteed to fail.

The question for any CTO, developer, or operations leader is not if they will face a high-load scenario, but when. The ability to manage these peaks with grace and reliability is a core business requirement. This is where a modern, cloud-native voice API for bulk calling is not just an option; it is the only viable solution.

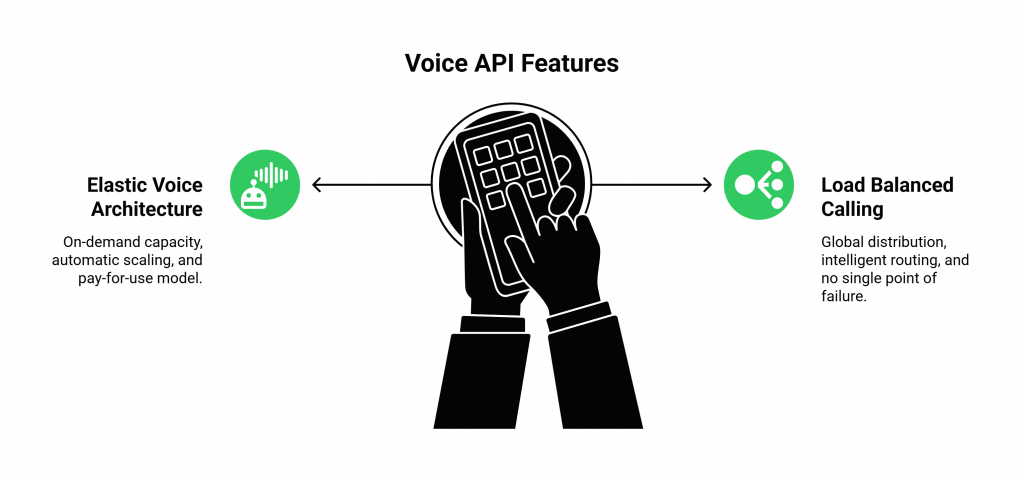

By leveraging an elastic voice architecture and the principles of load balanced calling, businesses can build communication systems that are as resilient and scalable as the cloud services they are built upon.


The Inevitable Failure of Traditional, On-Premise Dialers

To understand why a modern API is the solution, we must first diagnose the fundamental failure of the old model. For years, high-volume outbound calling was the domain of on-premise, hardware-based “auto-dialers” or “predictive dialers.” These are powerful machines, but they are products of a pre-cloud era, and they suffer from a set of fatal architectural flaws.

The “Fixed Capacity” Trap

The biggest flaw is their rigid, fixed capacity.

- A Hard Ceiling: An on-premise dialer is a physical box that is connected to a fixed number of phone lines (either PRI circuits or SIP trunks with a contracted channel count). If you have a 200-line system, you can make a maximum of 200 simultaneous calls. Period.

- The Impossibility of “Spike” Management: You cannot magically add 10,000 lines for ten minutes to handle an emergency and then turn them off. You are permanently constrained by the physical and contractual limits of the infrastructure you purchased.

- Wasteful Overprovisioning: This forces you into a hugely wasteful economic model. To be prepared for a potential high-load event, you have to buy and maintain a massive, expensive system that sits idle almost all of the time, burning cash, power, and rack space.
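To put numbers on the hard ceiling, here is a minimal arithmetic sketch using the figures from this article (100,000 calls, a ten-minute window, a 200-line dialer). The 30-second average line occupancy per call is an assumption chosen for illustration, not a measured value, and the function names are hypothetical.

```python
import math


def minutes_to_drain(total_calls: int, lines: int, avg_call_seconds: float) -> float:
    """Minutes needed to place total_calls when at most `lines` calls run at once."""
    calls_per_line_per_minute = 60.0 / avg_call_seconds
    return total_calls / (lines * calls_per_line_per_minute)


def lines_needed(total_calls: int, window_minutes: float, avg_call_seconds: float) -> int:
    """Concurrent lines required to finish total_calls inside window_minutes."""
    calls_per_line = (window_minutes * 60.0) / avg_call_seconds
    return math.ceil(total_calls / calls_per_line)


# Assumption: each call occupies a line for 30 seconds (dial, ring, message).
print(minutes_to_drain(100_000, lines=200, avg_call_seconds=30))      # 250.0
print(lines_needed(100_000, window_minutes=10, avg_call_seconds=30))  # 5000
```

Under that assumption, the 200-line system needs more than four hours to finish a job that has a ten-minute deadline, and meeting the deadline would take 25 times as many lines as the system has.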

The Burden of Complexity and Fragility

These systems are also incredibly complex and fragile.

- A Single Point of Failure: The on-premise dialer is a single, monolithic point of failure. If that hardware fails, your entire outbound calling capability goes down with it.

- Complex Maintenance: They require specialized engineers to maintain, upgrade, and troubleshoot.

- Geographic Rigidity: The system is tied to a single physical location, making it difficult to build a geographically redundant or global operation.


How Does a Modern Voice API for Bulk Calling Solve the High-Load Problem?

A modern voice API for bulk calling is architected on a completely different set of principles. It is not a box; it is a globally distributed, software-defined service. It is designed from the ground up to solve the problem of massive, unpredictable scale.

The Power of an Elastic Voice Architecture

The core innovation is the move to an elastic voice architecture.

- On-Demand, Virtually Unlimited Capacity: Instead of a fixed number of lines, the API gives you access to a massive, global pool of call capacity. A provider like FreJun AI maintains a network that is engineered to handle the combined peak traffic of all its customers. No pool is literally infinite, but from your perspective as a user of the API, the ceiling is set by the provider's shared capacity and any account-level limits it applies, not by hardware you own.

- Automatic, Instantaneous Scaling: When your application needs to make 100,000 calls, you simply make 100,000 API requests. The platform’s infrastructure automatically scales in real time to handle the load. There is no manual provisioning, no calling a sales rep, and no waiting.

- The “Pay for Use” Economic Model: This architecture enables a far more efficient economic model. You are not paying for idle hardware. You are billed based on your actual, metered usage (typically on a per-minute basis). This transforms a large capital expenditure into a lean operating expense that tracks how much you actually call.
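On the client side, "make 100,000 API requests" usually means submitting them concurrently while capping how many are in flight at once. The sketch below shows one way to do that with Python's `asyncio`. The `place_call` function is a hypothetical stand-in that only simulates latency; it is not FreJun's or any provider's actual API, and the `max_in_flight` default is an arbitrary illustrative value.

```python
import asyncio


async def place_call(number: str) -> str:
    """Hypothetical stand-in for one HTTP request to a voice API.

    A real client would send the number to the provider and return
    the call ID from the response.
    """
    await asyncio.sleep(0.001)  # simulate network latency
    return f"call-{number}"


async def dispatch(numbers: list[str], max_in_flight: int = 500) -> dict[str, str]:
    """Submit one request per number, keeping at most max_in_flight pending."""
    gate = asyncio.Semaphore(max_in_flight)
    results: dict[str, str] = {}

    async def worker(number: str) -> None:
        async with gate:  # wait here if too many requests are already pending
            try:
                results[number] = await place_call(number)
            except Exception as exc:  # record the failure, keep the batch going
                results[number] = f"failed: {exc}"

    await asyncio.gather(*(worker(n) for n in numbers))
    return results


recipients = [f"+1555{i:07d}" for i in range(2_000)]
outcome = asyncio.run(dispatch(recipients))
print(len(outcome))  # 2000
```

The semaphore keeps your own application from overwhelming itself or tripping a provider's request limits, while the platform handles the scaling on the other side of the API.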

The business world is rapidly moving towards this kind of scalable infrastructure. A recent report on enterprise cloud adoption from Flexera found that 87% of enterprises have a multi-cloud strategy, a clear indicator that businesses are embracing distributed, scalable, and on-demand services over traditional, monolithic systems.

The Resilience of Load Balanced Calling

A true, carrier-grade voice API for bulk calling is not a single server. It is a globally distributed network of interconnected nodes, or Points of Presence (PoPs). This is the foundation of load balanced calling.

- Global Distribution: A provider like FreJun AI has infrastructure in data centers all over the world.

- Intelligent Routing and Failover: When you initiate a call via the API, the platform intelligently routes it through the best available path on its global network. The system is constantly monitoring carrier performance and can automatically reroute traffic away from congested or failing network segments.

- No Single Point of Failure: This distributed architecture means there is no single point of failure. An issue with a specific carrier in one region or even an entire data center going offline will not bring down the entire system. Traffic is automatically and seamlessly rerouted, ensuring that your critical communications always get through.
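The routing and failover behavior described above can be sketched in a few lines. This is a simplified illustration, not a description of how any specific provider implements it: the `Route` fields, the 0.9 congestion threshold, and the `send` callback are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Route:
    name: str
    healthy: bool         # result of the latest health check
    success_rate: float   # recent share of calls that connected, 0.0 to 1.0
    load: float           # share of the route's capacity in use, 0.0 to 1.0


def pick_routes(routes: list[Route], max_load: float = 0.9) -> list[Route]:
    """Drop unhealthy or congested routes, then order the rest best first."""
    usable = [r for r in routes if r.healthy and r.load < max_load]
    return sorted(usable, key=lambda r: (-r.success_rate, r.load))


def place_with_failover(
    number: str,
    routes: list[Route],
    send: Callable[[Route, str], bool],
) -> Optional[str]:
    """Try each candidate route in order until one accepts the call."""
    for route in pick_routes(routes):
        if send(route, number):
            return route.name
    return None  # every usable route rejected the call


routes = [
    Route("pop-a", healthy=True, success_rate=0.98, load=0.95),   # congested
    Route("pop-b", healthy=True, success_rate=0.96, load=0.40),
    Route("pop-c", healthy=False, success_rate=0.99, load=0.10),  # offline
    Route("pop-d", healthy=True, success_rate=0.91, load=0.20),
]

# Simulate pop-b rejecting the call so the failover path is exercised.
print(place_with_failover("+15550000001", routes, lambda r, n: r.name != "pop-b"))  # pop-d
```

The caller never sees the congested or offline routes. The call simply goes out on the next healthy path.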


The following table summarizes the architectural differences that enable high-load management.

| Feature | Traditional On-Premise Dialer | Modern Voice API for Bulk Calling |
| --- | --- | --- |
| Architecture | Monolithic, hardware-based, single-site. | Distributed, software-defined, global PoPs. |
| Scalability | Fixed and rigid. Cannot handle spikes. | Elastic and on-demand. Scales to millions of calls. |
| Resilience | Single point of failure. | Highly resilient with load balanced calling and automatic failover. |
| Management | Requires specialized, on-site engineers. | Managed via a simple, powerful API from anywhere. |
| Cost Model | High CapEx for hardware, plus fixed recurring costs. | Pay-as-you-go, operational expense. |
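The cost-model row can be made concrete with a small calculation. Every figure below (hardware price, amortization period, fixed costs, per-minute rate, annual minutes) is a placeholder invented purely for illustration; none of them comes from FreJun or any other vendor, so substitute your own quotes before drawing conclusions.

```python
def on_prem_annual_cost(hardware_cost: float, amortization_years: int,
                        annual_fixed_costs: float) -> float:
    """Yearly cost of owned capacity, paid whether or not calls are made."""
    return hardware_cost / amortization_years + annual_fixed_costs


def usage_annual_cost(minutes_per_year: float, price_per_minute: float) -> float:
    """Yearly cost under metered, per-minute billing."""
    return minutes_per_year * price_per_minute


def break_even_minutes(annual_on_prem_cost: float, price_per_minute: float) -> float:
    """Annual minutes at which metered billing costs the same as owned capacity."""
    return annual_on_prem_cost / price_per_minute


# Placeholder inputs, for illustration only.
owned = on_prem_annual_cost(hardware_cost=300_000, amortization_years=5,
                            annual_fixed_costs=60_000)
metered = usage_annual_cost(minutes_per_year=2_000_000, price_per_minute=0.02)

print(f"Owned capacity:   {owned:,.0f} per year")
print(f"Metered usage:    {metered:,.0f} per year")
print(f"Break-even point: {break_even_minutes(owned, 0.02):,.0f} minutes per year")
```

The useful output is the break-even point: below that annual volume, paying per minute is cheaper than owning capacity sized for the rare peak.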


What Does the Future of High-Load Support Look Like?

The evolution of high-load communication is not just about handling bigger spikes; it is about handling them more intelligently. The trend in high-load support for 2026 and beyond is the fusion of this massive scalability with real-time AI.

Imagine an AI-powered fraud alert system for a large bank.

- The Trigger: The bank’s internal systems detect a pattern of suspicious activity that could affect 500,000 customers.

- The API Call: The system makes half a million API calls to the voice API for bulk calling in a matter of seconds.

- The Intelligent Conversation: None of these calls is a static, pre-recorded message. Each one is an interactive call with an AI voice agent. The AI can authenticate the user, inform them of the specific suspicious transaction, and ask them to verbally confirm if it was legitimate.

- The Real-Time Feedback Loop: If the customer says “No, that was not me,” the AI can immediately place a temporary hold on their account and offer to connect them to a live fraud specialist, all within the same call.
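The per-call logic in this scenario is a short decision flow. The sketch below models it as a plain function that returns the ordered actions the agent would take. The action names are hypothetical labels, and matching on the first word of the reply is a deliberate simplification: a production agent would run speech recognition and intent classification instead.

```python
def run_fraud_call(verified: bool, customer_reply: str) -> list[str]:
    """Return the ordered actions the voice agent takes during one call."""
    actions = ["authenticate_customer"]
    if not verified:
        # Never disclose transaction details to an unverified person.
        return actions + ["end_call"]

    actions.append("describe_suspicious_transaction")

    # Simplification: classify the reply by its first word only.
    words = customer_reply.lower().split()
    first = words[0].strip(".,!?") if words else ""

    if first == "no":
        actions += ["place_temporary_hold", "offer_transfer_to_fraud_specialist"]
    elif first == "yes":
        actions.append("mark_transaction_legitimate")
    else:
        actions.append("ask_again")
    return actions


print(run_fraud_call(True, "No, that was not me"))
```

Because each call runs this flow independently, half a million of them can proceed in parallel, with holds applied as soon as individual customers respond.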

This kind of intelligent, interactive, and high-speed response at a massive scale is the future of critical business communication, and it is a future that is only possible on a robust, elastic, and API-driven voice platform.


Conclusion

The ability to communicate with a large audience, quickly and reliably, is a fundamental business need. In an increasingly unpredictable world, the ability to do so at a massive, instantaneous scale is a critical strategic advantage. The old world of on-premise, hardware-based dialers, with its rigid capacity and inherent fragility, is simply not up to the task.

A modern, cloud-native voice API for bulk calling provides the only viable path forward. By leveraging an elastic voice architecture and the resilience of load balanced calling, businesses can finally build communication systems that are as powerful, scalable, and dependable as the critical messages they are designed to deliver.

Have an upcoming high-load event? Want to stress-test our platform and see our scalability in action? Schedule a demo for FreJun Teler.


Frequently Asked Questions (FAQs)

What is a voice API for bulk calling?

It is a programmable interface that allows a developer’s application to initiate and manage a large number of outbound phone calls simultaneously and automatically.

What is an elastic voice architecture?

An elastic voice architecture is a cloud-native design that allows a voice platform’s capacity to automatically and instantly scale up or down to meet demand.

How does load balanced calling improve reliability?

Load balanced calling distributes the call traffic across a global network of servers. This eliminates any single point of failure, ensuring high availability.

What is the main limitation of a traditional on-premise dialer?

The main limitation is its fixed capacity. It cannot handle a sudden, massive spike in call volume that exceeds the number of physical lines it has.

How quickly can a voice API for bulk calling scale?

A true, carrier-grade voice API for bulk calling can scale almost instantaneously, from a few calls per minute to tens of thousands of concurrent calls.

What is overprovisioning, and how does an elastic model prevent it?

Overprovisioning is the wasteful practice of buying and paying for far more capacity than you need, just to be safe. An elastic, pay-as-you-go model prevents this.

Can call quality be maintained during a high-load event?

Yes. A high-quality provider designs their network with massive redundancy. The system automatically routes calls around congestion to maintain crystal-clear audio quality.