In the new era of “AI-first” product development, the user interface is undergoing a profound transformation. Keyboards and touchscreens are no longer the only way to interact with our devices; the human voice is emerging as the most natural, intuitive, and efficient input method.

For a product manager or a developer building an AI-first app stack, the quality of this voice interaction is not just a feature; it is the foundation of the entire user experience.

A small error in a text-based UI might be a minor annoyance. A single, critical error in a voice command can render the entire application useless. This is why the pursuit of the highest possible accuracy is the defining characteristic of a modern voice recognition SDK.

For an AI-first product, voice is not an afterthought; it is the core functionality. Whether you are building a hands-free tool for surgeons in an operating room, a voice-controlled inventory system for a noisy warehouse, or a conversational AI that provides complex financial advice, the “speech-to-text” (STT) engine is the very first link in your AI value chain. If that first link is weak, the entire chain breaks.

Choosing a precision STT engine and the voice recognition SDK that delivers it is the most critical architectural decision you will make.

What Does “High Accuracy” Truly Mean in the Context of AI?

Accuracy in speech recognition is not a single, monolithic metric. It is multi-faceted and deeply contextual. The “99% accuracy” advertised for a quiet, controlled lab environment can plummet to 50% or less in the messy, unpredictable conditions of real-world use. For an AI-first product, achieving truly high-accuracy speech recognition requires a solution that can overcome three distinct challenges.

The Challenge of the Acoustic Environment (The “Noise”)

The real world is a noisy place. An AI-first product must be able to distinguish the user’s voice from a cacophony of competing sounds.

- The Problem: Background noise (like a busy street, a humming factory, or a crying baby), reverberation in a large room, and the low quality of a Bluetooth microphone can all corrupt the audio signal before it even reaches the STT engine.

- The Solution: A high-accuracy system uses advanced, AI-powered noise suppression and echo cancellation algorithms. An advanced voice recognition SDK will often perform some of this audio pre-processing on the client-side, sending a cleaner, more intelligible audio stream to the recognition engine.

The Challenge of the Speaker (The “Voice”)

The human voice is an instrument of infinite variety.

- The Problem: Accents, dialects, and different speaking styles are a massive challenge for a one-size-fits-all recognition model. A model trained on a dataset of Midwestern American English will struggle to understand a user with a thick Glaswegian accent.

- The Solution: The best precision STT engines are trained on massive, incredibly diverse datasets of global speech. Furthermore, an advanced voice recognition SDK will often provide the ability to use specialized models that are tuned for specific languages and dialects.

The Challenge of the Content (The “Words”)

This is often the most overlooked but most critical challenge for a specialized, AI-first product.

- The Problem: Every industry and every domain has its own unique vocabulary of jargon, acronyms, and proper nouns. A general-purpose STT model has no idea what “brachytherapy,” “SKU,” or “EBITDA” means. It will try to transcribe these words phonetically, resulting in nonsensical and often comical errors.

- The Solution: This is where customization becomes a non-negotiable requirement. A high-accuracy voice recognition SDK must provide a powerful and easy-to-use “speech adaptation” or “custom vocabulary” feature. This allows a developer to provide the STT engine with a list of the domain-specific words and phrases that it is likely to encounter, which dramatically improves its accuracy for that specific use case.

The impact can be substantial: a recent study on AI in healthcare found that custom-trained medical transcription models can reduce word error rates by over 40% compared to generic models.
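To make the idea of a custom vocabulary concrete, here is a minimal Python sketch. It is not how an STT engine applies speech adaptation internally (that happens during decoding, inside the engine); it is a simpler stand-in technique, fuzzy post-correction of a finished transcript against a domain word list, using the standard library's `difflib`. The function name `correct_with_lexicon`, the `DOMAIN_TERMS` list, and the `0.8` cutoff are all assumptions chosen for illustration.

```python
import difflib
from typing import Iterable

# Hypothetical domain lexicon, using the example terms from this article.
DOMAIN_TERMS = ["brachytherapy", "SKU", "EBITDA"]


def correct_with_lexicon(transcript: str, terms: Iterable[str], cutoff: float = 0.8) -> str:
    """Replace words that are spelled almost like a domain term with that term.

    This is a post-processing approximation, not engine-level adaptation.
    """
    # Map lowercase forms back to the canonical spelling (e.g. "ebitda" -> "EBITDA").
    canonical = {term.lower(): term for term in terms}
    candidates = list(canonical)
    corrected = []
    for word in transcript.split():
        # get_close_matches returns at most n candidates whose similarity >= cutoff.
        matches = difflib.get_close_matches(word.lower(), candidates, n=1, cutoff=cutoff)
        corrected.append(canonical[matches[0]] if matches else word)
    return " ".join(corrected)


print(correct_with_lexicon("schedule brackytherapy for tuesday", DOMAIN_TERMS))
print(correct_with_lexicon("our ebida grew", DOMAIN_TERMS))
print(correct_with_lexicon("check the skew count", DOMAIN_TERMS))
```

The last call is left unchanged: “skew” sounds like “SKU” but is not spelled like it, so a spelling-based pass cannot recover it. That gap is one reason engine-level custom vocabulary, which works on the audio rather than on the finished text, tends to be the better tool when it is available.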

This table highlights why a specialized, customizable approach is essential for an AI-first app stack.

| Feature | General-Purpose Voice Recognition | High-Accuracy, Enterprise-Grade Solution |
| --- | --- | --- |
| Acoustic Handling | Basic noise filtering. | Advanced, AI-powered noise suppression and echo cancellation. |
| Accent & Dialect Handling | Often struggles with non-standard accents. | Uses models trained on diverse, global datasets. |
| Domain-Specific Vocabulary | Prone to frequent errors on jargon and acronyms. | Allows for custom vocabulary and model training for high precision. |
| Accuracy Measurement | A single, generic “Word Error Rate” (WER). | Provides detailed analytics and tools to measure accuracy for your specific use case. |

Ready to build your AI-first product on a foundation of a truly accurate voice? Sign up for FreJun AI

How Do You Choose and Integrate a High Accuracy Voice Recognition SDK?

Choosing the right STT engine is a critical decision, and it is not one that should be made based on marketing claims alone. It requires a rigorous, data-driven evaluation.

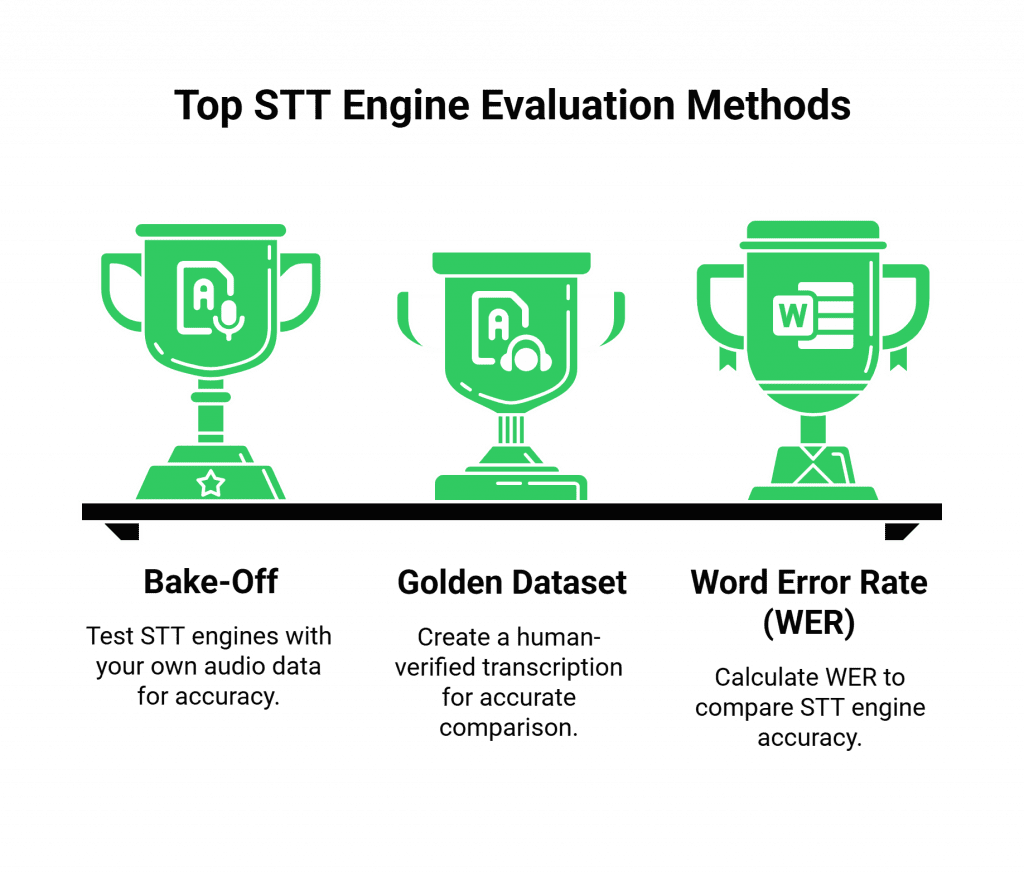

The “Bake-Off”: Test with Your Own Data

The only way to know which STT engine is the most accurate for your product is to test it with your audio.

- Gather a “Golden Dataset”: Collect a representative sample of audio that reflects your real-world use case. This should include different speakers, different accents, and different acoustic environments.

- Manually Transcribe It: Create a 100% accurate, human-verified transcription for this dataset. This is your “ground truth.”

- Run the Test: Process this audio dataset through the STT engines of the different providers you are evaluating.

- Calculate the Word Error Rate (WER): Compare the machine-generated transcripts to your human-generated ground truth to calculate the WER for each provider. The provider with the lowest WER on your specific data is your accuracy winner.
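The scoring step above can be sketched in a few lines of Python. WER is conventionally computed as (substitutions + deletions + insertions) divided by the number of words in the reference, which is a word-level edit distance. The sketch below assumes a very light text normalization (lowercasing and stripping punctuation); the provider names and transcripts are made-up placeholders, not real results.

```python
import string
from typing import Dict, Iterable, List, Sequence, Tuple


def normalize(text: str) -> List[str]:
    """Lowercase and strip surrounding punctuation so formatting is not scored as an error."""
    words = (w.strip(string.punctuation) for w in text.lower().split())
    return [w for w in words if w]


def word_errors(reference: Sequence[str], hypothesis: Sequence[str]) -> int:
    """Minimum number of word substitutions, deletions, and insertions (Levenshtein distance)."""
    # previous[j] = edit distance between the reference words seen so far and hypothesis[:j]
    previous = list(range(len(hypothesis) + 1))
    for i, ref_word in enumerate(reference, start=1):
        current = [i]
        for j, hyp_word in enumerate(hypothesis, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1]


def corpus_wer(pairs: Iterable[Tuple[str, str]]) -> float:
    """WER over a whole dataset: total errors divided by total reference words."""
    errors = 0
    reference_words = 0
    for reference_text, hypothesis_text in pairs:
        reference = normalize(reference_text)
        errors += word_errors(reference, normalize(hypothesis_text))
        reference_words += len(reference)
    if reference_words == 0:
        raise ValueError("ground truth contains no words")
    return errors / reference_words


# Human-verified ground truth for a tiny, illustrative golden dataset.
ground_truth = [
    "Schedule brachytherapy for Tuesday.",
    "Check the SKU count in aisle four.",
]

# Placeholder outputs from two hypothetical providers.
provider_outputs: Dict[str, List[str]] = {
    "provider_a": ["schedule brachytherapy for tuesday", "check the skew count in aisle four"],
    "provider_b": ["schedule break therapy for tuesday", "check the sku count in aisle"],
}

results = {
    name: corpus_wer(zip(ground_truth, transcripts))
    for name, transcripts in provider_outputs.items()
}
best = min(results, key=results.get)

for name, wer in sorted(results.items(), key=lambda item: item[1]):
    print(f"{name}: WER = {wer:.1%}")
print("lowest WER:", best)
```

Summing errors and reference words across the whole dataset, rather than averaging per-utterance WER, keeps short utterances from dominating the score. In a real evaluation it is also worth computing the same figure separately per accent or acoustic environment, since an overall average can hide a weak spot.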

The Integration: The Role of the Voice Infrastructure

Once you have chosen your precision STT engine, you need a way to connect it to a real-time voice stream. This is where a platform like FreJun AI becomes the essential “plumbing” in your AI-first app stack.

- The Secure, Low-Latency Pipe: FreJun AI provides the foundational voice infrastructure and the voice recognition SDK that handles the connection to the user. Our platform is responsible for establishing the call and providing a secure, low-latency, real-time stream of the user’s audio.

- The Model-Agnostic Bridge: We are not an STT provider. We are a model-agnostic voice platform. This is a critical advantage. It means you can take the real-time audio stream from our platform and pipe it to any STT engine you choose. This gives you the freedom to always use the absolute best-in-class, highest-accuracy STT for your needs, without being locked into a single vendor’s ecosystem.
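One way to picture the model-agnostic pattern is as a small interface that any STT engine can sit behind. The Python sketch below is illustrative only: `SttEngine`, `pipe_audio`, and the two stand-in engines are hypothetical names invented for this example and do not represent FreJun AI's SDK or any STT vendor's API. Real engines usually expose richer streaming interfaces with partial results and timestamps.

```python
from typing import Iterable, Iterator, Protocol


class SttEngine(Protocol):
    """Hypothetical minimal contract: audio bytes in, recognized text out."""

    def transcribe_chunk(self, audio: bytes) -> str: ...


class EngineA:
    """Stand-in for one vendor's adapter. Returns a fake label instead of real text."""

    def transcribe_chunk(self, audio: bytes) -> str:
        return f"A:{len(audio)}"


class EngineB:
    """Stand-in for a second vendor's adapter with the same contract."""

    def transcribe_chunk(self, audio: bytes) -> str:
        return f"B:{len(audio)}"


def pipe_audio(chunks: Iterable[bytes], engine: SttEngine) -> Iterator[str]:
    """Forward each audio chunk to whichever engine was passed in and yield its output."""
    for chunk in chunks:
        text = engine.transcribe_chunk(chunk)
        if text:  # skip empty results, e.g. silence
            yield text


# Fake audio stream: two chunks of silent bytes standing in for real-time call audio.
fake_stream = [b"\x00" * 320, b"\x00" * 160]

print(list(pipe_audio(fake_stream, EngineA())))
print(list(pipe_audio(fake_stream, EngineB())))
```

Because the application code depends only on the small `SttEngine` contract, swapping providers after a new bake-off means writing one new adapter class rather than re-architecting the voice application.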

Conclusion

In the new frontier of AI-first product development, voice is the ultimate user interface. But for this interface to be effective, it must be built on a foundation of unwavering accuracy. The pursuit of high-accuracy speech recognition is a complex, multi-faceted challenge that requires a sophisticated approach, from advanced noise cancellation to deep, domain-specific customization.

A modern, enterprise-grade voice recognition SDK provides the essential tools to conquer these challenges. By rigorously testing and selecting the precision STT engine that is right for your specific use case, and by integrating it with a flexible, low-latency voice infrastructure, you can build an AI-first product that does not just hear, but truly understands.

Want to do a deep dive into the architecture of how to stream real-time audio from our platform to your chosen high-accuracy STT engine? Schedule a demo for FreJun Teler.

Frequently Asked Questions (FAQs)

What is an AI-first app stack?

An AI-first app stack is the technology stack behind a product whose core functionality and user experience are fundamentally enabled by artificial intelligence. In the context of voice, this means an application where voice is the primary and most important method of interaction.

What is the most important factor when choosing a voice recognition SDK?

By far, the most important factor is accuracy. The ability to achieve high-accuracy speech recognition, especially for your specific industry’s vocabulary and in your users’ real-world acoustic environments, is paramount.

What is Word Error Rate (WER)?

WER is the standard industry metric for measuring the accuracy of a speech recognition system. It counts the errors the machine makes (substitutions, deletions, and insertions) relative to a perfect, human-generated transcript, divided by the number of words in that transcript. A lower WER is better.

How can I improve accuracy for my industry’s jargon?

The most effective way is to use a precision STT engine that supports “custom vocabulary” or “speech adaptation.” This feature allows you to provide the AI with a list of your specific jargon, product names, and acronyms, which dramatically improves its accuracy on those terms.

What does it mean for a voice platform to be model-agnostic?

Being model-agnostic means the platform is not tied to a single STT provider. This is critical for an enterprise because it gives you the freedom to choose the highest-accuracy STT engine for your specific needs and to switch providers in the future as the technology evolves, without having to re-architect your entire voice application.

What role does FreJun AI play?

FreJun AI provides the foundational voice infrastructure and the voice recognition SDK. We are the experts in handling the real-time, low-latency audio streaming from a live phone call or an in-app client.

Can STT engines understand every accent?

While the best large-scale models are trained on very diverse data, they can still struggle with particularly strong or less common regional accents.