In the world of voice AI, there are two fundamentally different ways of listening. The first is the familiar “push-to-talk” model: you press a button, speak a command, and release. It is a discrete, transactional interaction. But there is a second, far more powerful, and technically complex way of listening: continuous, real-time recognition.

This is the “always-on” model, where the AI is not just waiting for a command but is constantly processing a live, uninterrupted stream of audio. This capability is the key to unlocking a new generation of sophisticated voice applications, from live meeting transcription to real-time agent assist and ambient AI. The core technology that enables this is a specialized voice recognition SDK.

Building a system that can handle continuous STT streaming is a profound architectural challenge. It is not just about having a fast Speech-to-Text (STT) engine; it is about creating an incredibly resilient and efficient data pipeline that can handle a massive, unending flow of audio data without ever failing.

For developers, choosing a voice recognition SDK that is designed for this specific, high-throughput task is the most critical decision in building these advanced, “always-on” voice experiences. This guide will explore the unique challenges of continuous recognition and the architectural principles required to build stable voice pipelines.

Table of contents

- What is the Difference Between “Command-and-Control” and “Continuous Recognition”?

- Why is Continuous, Real-Time Recognition Such a Hard Problem?

- How Does a Modern Voice Recognition SDK Provide the Solution?

- What are the Best Practices for Building with a Voice Recognition SDK?

- Conclusion

- Frequently Asked Questions (FAQs)

What is the Difference Between “Command-and-Control” and “Continuous Recognition”?

To appreciate the complexity of continuous recognition, we must first distinguish it from its simpler cousin, “command-and-control” or “endpointing” speech recognition.

The “Command-and-Control” Model

This is the model used by most simple voice bots and virtual assistants.

- The Workflow: The system waits for a user to speak. It detects a period of silence at the end of their utterance (an “endpoint”). It then takes that finite chunk of audio, sends it to the STT engine for transcription, and waits for the result.

- The Use Case: This is perfect for transactional, turn-by-turn conversations, like an AI agent that asks a question and waits for an answer.

- The Technical Implication: The audio is processed in discrete, manageable chunks.
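The endpointing workflow above can be sketched in a few lines. This is a minimal illustration, not any particular product's detector: the energy threshold, the number of trailing quiet frames, and the function names are all assumptions chosen for the example.

```python
from typing import Iterable, List, Sequence


def rms(frame: Sequence[int]) -> float:
    """Root-mean-square energy of one frame of PCM samples."""
    if not frame:
        return 0.0
    return (sum(s * s for s in frame) / len(frame)) ** 0.5


def split_utterances(
    frames: Iterable[Sequence[int]],
    silence_threshold: float = 500.0,  # assumed RMS level separating speech from silence
    trailing_silence_frames: int = 3,  # assumed quiet-frame count that marks an endpoint
) -> List[List[Sequence[int]]]:
    """Group frames into utterances, closing each one after a run of silence."""
    utterances: List[List[Sequence[int]]] = []
    current: List[Sequence[int]] = []
    quiet_run = 0
    for frame in frames:
        if rms(frame) >= silence_threshold:
            current.append(frame)
            quiet_run = 0
        elif current:
            # Quiet frames are not kept; they only count toward the endpoint.
            quiet_run += 1
            if quiet_run >= trailing_silence_frames:
                utterances.append(current)  # endpoint reached: this chunk goes to STT
                current = []
                quiet_run = 0
    if current:
        utterances.append(current)  # flush speech still open when the audio ends
    return utterances
```

Each returned utterance is a finite chunk that can be sent to the STT engine as a single request, which is exactly what makes this model simple to operate.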

The “Continuous Recognition” Model

This is a fundamentally different paradigm.

- The Workflow: The system does not wait for silence. The moment the audio stream begins, it is continuously piped to the STT engine. The STT engine processes this stream in real time and sends back a continuous, evolving stream of text and events. This requires a persistent, “always-on” connection and uninterrupted audio capture.

- The Use Case: This is essential for applications that need to process long-form, uninterrupted speech, such as:

  - Live Transcription: Transcribing a meeting, a lecture, or a contact center call as it happens.

  - Real-Time Agent Assist: Listening to a live conversation between a human agent and a customer to provide the agent with real-time suggestions and information.

  - Ambient AI: An AI in a doctor’s office that listens to the entire patient consultation to automatically generate clinical notes.

- The Technical Implication: You are dealing with an unbounded, potentially infinite stream of data that must be processed with minimal latency and perfect reliability.
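One practical consequence of an unbounded stream is that the client can never hold "the whole recording" in memory. A common pattern is to re-slice incoming audio into small fixed-size frames and forward each one immediately. The sketch below assumes 16 kHz, 16-bit mono PCM, where 20 ms of audio is 640 bytes; the function name and frame size are illustrative choices.

```python
from typing import Iterable, Iterator


def frame_stream(chunks: Iterable[bytes], frame_bytes: int = 640) -> Iterator[bytes]:
    """Re-slice arbitrarily sized audio chunks into fixed-size frames.

    640 bytes is 20 ms of 16 kHz, 16-bit mono PCM (an assumed format).
    Frames are yielded as soon as they are complete, so memory use stays
    bounded no matter how long the source runs.
    """
    buffer = bytearray()
    for chunk in chunks:
        buffer.extend(chunk)
        while len(buffer) >= frame_bytes:
            yield bytes(buffer[:frame_bytes])
            del buffer[:frame_bytes]
    if buffer:
        yield bytes(buffer)  # final partial frame, emitted only if the source ends
```

Because `frame_stream` is a generator, the caller can write each frame to a network connection inside a plain `for` loop without ever waiting for the audio to finish.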


Why is Continuous, Real-Time Recognition Such a Hard Problem?

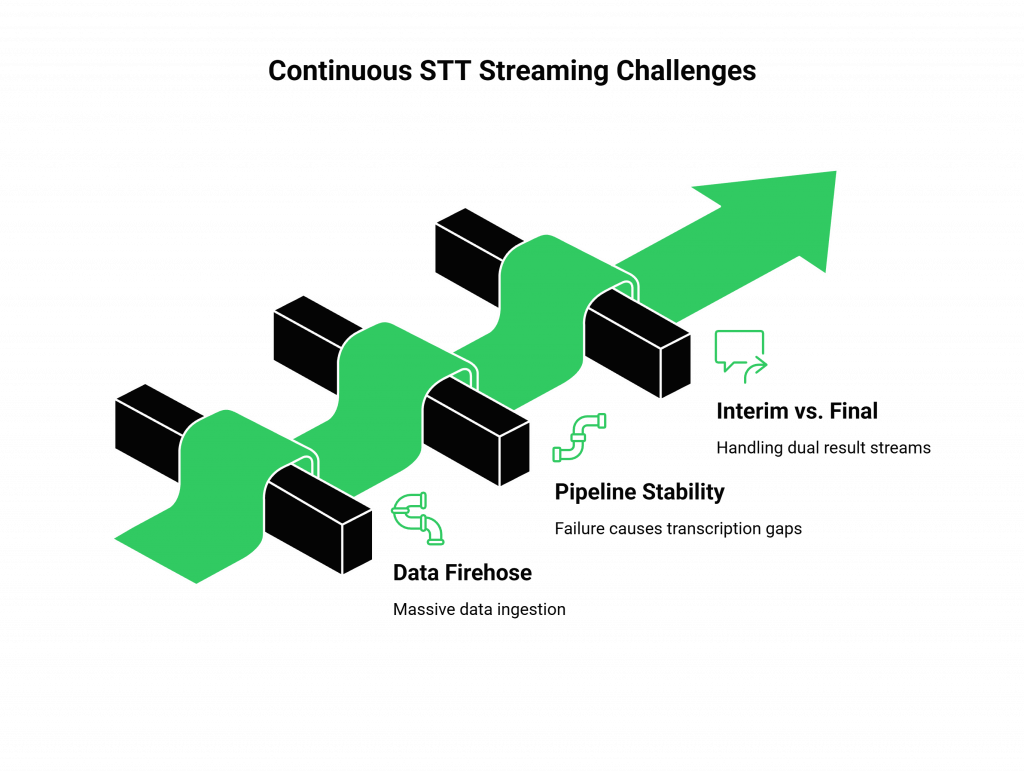

Building a system for continuous STT streaming is one of the most demanding challenges in real-time software engineering. It pushes every part of your technology stack to its limit, from client-side audio capture, through the network, to the STT engine itself.

The Challenge of the “Firehose” of Data

A continuous audio stream is a veritable “firehose” of data. A single-channel stream of uncompressed 16 kHz, 16-bit PCM audio runs at 256 kbit/s, or about 32 KB every second, for as long as the session lasts.

For a contact center that wants to transcribe 1,000 simultaneous calls, that adds up to hundreds of megabits per second of sustained ingestion, and it can reach the gigabit range with high-fidelity or multi-channel audio. Your infrastructure must absorb this load continuously, because every dropped packet becomes a gap in the transcript.
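The size of the firehose depends heavily on the audio format, so it is worth running the arithmetic before sizing infrastructure. The three formats below are assumptions picked to show the range (narrowband telephony, wideband PCM, and high-fidelity stereo); the figures cover raw audio payload only and ignore protocol overhead.

```python
def stream_bitrate_bps(sample_rate_hz: int, bits_per_sample: int, channels: int = 1) -> int:
    """Raw bitrate of one uncompressed audio stream, in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


def aggregate_mbps(streams: int, per_stream_bps: int) -> float:
    """Total payload bandwidth for many concurrent streams, in Mbit/s."""
    return streams * per_stream_bps / 1_000_000


# Assumed example formats, not recommendations.
FORMATS = {
    "narrowband telephony (8 kHz, 8-bit)": stream_bitrate_bps(8_000, 8),           # 64,000 bit/s
    "wideband PCM (16 kHz, 16-bit)": stream_bitrate_bps(16_000, 16),               # 256,000 bit/s
    "high-fidelity stereo (48 kHz, 24-bit)": stream_bitrate_bps(48_000, 24, 2),    # 2,304,000 bit/s
}

# Aggregate load for 1,000 simultaneous calls in each format.
LOAD_FOR_1000_CALLS = {name: aggregate_mbps(1_000, bps) for name, bps in FORMATS.items()}
```

For 1,000 calls this works out to 64, 256, and 2,304 Mbit/s respectively, which is why the choice of capture format is an infrastructure decision and not just an audio-quality one.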

The Need for Absolute Pipeline Stability

In a transactional model, if a single STT request fails, you can simply try again. In a continuous streaming model, a failure is far more damaging. If the persistent connection between your application and the STT engine breaks, every second of audio spoken during the outage is missing from your transcription unless something buffered it.

For a compliance-driven use case like recording a financial trading call, a one-second gap is not an option. This requires the creation of incredibly stable voice pipelines.
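One way to avoid gaps is to keep recently sent frames until the far end confirms receipt, then resend anything unconfirmed after a reconnect. The sketch below assumes a protocol in which the server acknowledges frames by sequence number; that protocol, the class name, and the buffer size are hypothetical and not taken from any specific SDK.

```python
from collections import deque
from typing import Deque, List, Tuple


class ReplayBuffer:
    """Holds recently sent frames until the server acknowledges them."""

    def __init__(self, max_frames: int = 500) -> None:
        # Bounded on purpose: if the outage outlasts the buffer, the oldest
        # frames are discarded and a gap becomes unavoidable.
        self._frames: Deque[Tuple[int, bytes]] = deque(maxlen=max_frames)
        self._next_seq = 0

    def push(self, frame: bytes) -> int:
        """Record a frame as sent and return its sequence number."""
        seq = self._next_seq
        self._frames.append((seq, frame))
        self._next_seq += 1
        return seq

    def ack(self, seq: int) -> None:
        """Drop every frame up to and including the acknowledged sequence number."""
        while self._frames and self._frames[0][0] <= seq:
            self._frames.popleft()

    def pending(self) -> List[Tuple[int, bytes]]:
        """Frames to resend, in order, after a reconnect."""
        return list(self._frames)
```

The buffer size is the key design choice: it sets the longest outage the pipeline can survive without losing audio, traded against client memory.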

The “Interim vs. Final” Results Problem

A continuous STT engine does not just give you one final transcription. It typically provides a real-time stream of two types of results:

- Interim Results: These are the fast, “best-guess” transcriptions that the engine produces as the person is still speaking. They are subject to change.

- Final Results: These are the more accurate, finalized transcriptions that the engine produces after it has had a moment to process the context of a completed phrase or sentence.

Your application’s logic must be sophisticated enough to handle this dual stream of data, perhaps by displaying the interim results in a light gray text that is then replaced by the final, bolded text.
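The replace-on-final behavior described above can be modeled with a small assembler that keeps committed text separate from the current guess. This is a sketch under assumptions: the `on_result(text, is_final)` callback shape is hypothetical, and square brackets stand in for the gray interim styling.

```python
from typing import List


class TranscriptAssembler:
    """Keeps finalized segments and the latest interim guess separately."""

    def __init__(self) -> None:
        self.final_segments: List[str] = []
        self.interim: str = ""

    def on_result(self, text: str, is_final: bool) -> None:
        if is_final:
            self.final_segments.append(text)  # commit the phrase
            self.interim = ""                 # the guess it replaces is discarded
        else:
            self.interim = text               # each interim overwrites the previous one

    def render(self) -> str:
        """Finalized text followed by the current interim guess in brackets."""
        parts = list(self.final_segments)
        if self.interim:
            parts.append(f"[{self.interim}]")
        return " ".join(parts)
```

Only `final_segments` should ever be persisted or passed to downstream logic; interim text exists purely to make the display feel responsive.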

This table summarizes the architectural shift required for continuous recognition.

| Feature | Command-and-Control Model | Continuous Recognition Model |
| --- | --- | --- |
| Audio Handling | Processes discrete, finite chunks of audio. | Processes an unbounded, continuous stream of audio. |
| Connection Type | Short-lived, stateless HTTP requests. | A persistent, stateful WebSocket or gRPC connection. |
| Data Flow | One request, one response. | A continuous, bi-directional stream of data and events. |
| Error Handling | Simple retry logic is sufficient. | Requires complex logic for connection recovery and data resynchronization. |
| Primary Challenge | The accuracy of a single transcription. | The stability and reliability of the entire data pipeline. |


How Does a Modern Voice Recognition SDK Provide the Solution?

A modern voice recognition SDK is the essential toolkit that abstracts away the immense complexity of building these stable voice pipelines. It is not just a simple wrapper around an STT API; it is a sophisticated client- and server-side library that is specifically designed to handle the rigors of continuous STT streaming.

A high-quality voice recognition SDK from a provider like FreJun AI provides several critical components:

- A Resilient Streaming Client: The client-side SDK handles the persistent audio capture from the microphone and the establishment of a robust, persistent connection to the voice platform. It has built-in logic to automatically handle network blips and to gracefully reconnect if the connection is temporarily lost.

- A High-Performance Media Gateway: The provider’s backend infrastructure (our Teler engine) acts as a high-performance media gateway. It can receive thousands of simultaneous audio streams and intelligently route them to the appropriate STT engines.

- A Unified Event Model: The SDK presents the complex stream of interim and final results from the STT engine as a simple, easy-to-use set of events that your application can subscribe to, making the developer experience much simpler.
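To make the idea of a unified event model concrete, here is a generic sketch of how raw engine messages might be mapped to named events that application code subscribes to. It is not the FreJun AI SDK's API: the class, the event names `interim` and `final`, and the `is_final` message field are all assumptions made for illustration.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, object]], None]


class RecognitionEvents:
    """A tiny publish/subscribe layer over raw recognition messages."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def on(self, event: str, handler: Handler) -> None:
        """Register a handler for a named event."""
        self._handlers[event].append(handler)

    def emit(self, event: str, payload: Dict[str, object]) -> None:
        """Call every handler registered for the event, in registration order."""
        for handler in self._handlers.get(event, []):
            handler(payload)

    def dispatch_raw(self, message: Dict[str, object]) -> None:
        """Map a raw engine message (assumed shape) to a named event."""
        event = "final" if message.get("is_final") else "interim"
        self.emit(event, message)
```

Application code then subscribes only to what it needs, for example `events.on("final", save_segment)`, and never parses engine-specific message formats itself.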

Ready to build the next generation of “always-on” voice applications? Sign up for FreJun AI

What are the Best Practices for Building with a Voice Recognition SDK?

Even with a powerful SDK, developers need to follow several best practices to make a continuous recognition application truly production-ready.

Design for Graceful Reconnection

Your application’s logic must be built with the assumption that the network connection will fail at some point. The SDK will handle the low-level reconnection, but your application needs to be able to handle the state. For example, your UI should be able to clearly indicate to the user if the transcription has been temporarily paused due to a network issue.
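On the application side, handling the state can be as simple as tracking when the stream paused and resumed. The sketch below assumes the SDK surfaces some form of disconnect and reconnect notification; the method names `on_disconnected` and `on_reconnected` and the banner text are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class TranscriptionStatus:
    """Tracks whether transcription is paused and records each outage."""

    paused_since: Optional[float] = None
    gaps: List[Tuple[float, float]] = field(default_factory=list)

    def on_disconnected(self, now: float) -> None:
        # Repeated disconnect notices during one outage keep the first timestamp.
        if self.paused_since is None:
            self.paused_since = now

    def on_reconnected(self, now: float) -> None:
        if self.paused_since is not None:
            self.gaps.append((self.paused_since, now))  # start and end of the outage
            self.paused_since = None

    def banner(self) -> str:
        """Text for a UI status indicator."""
        if self.paused_since is not None:
            return "Transcription paused: reconnecting..."
        return "Transcribing"
```

Recording `gaps` as well as the live status lets the application mark the affected spans in the saved transcript, so a reader later knows where audio may be missing.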

Choose the Right STT Model for Your Use Case

The accuracy of your real-time transcription is highly dependent on the quality of the STT model. A model-agnostic platform like FreJun AI gives you the freedom to choose the best model for your specific needs.

- For a medical transcription app, you would choose a model that has been specifically trained on medical terminology.

- For a contact center application with diverse callers, you would choose a model that is robust to a wide range of accents and dialects. This matters in practice: a recent study on AI bias found that some speech recognition systems have error rates that are nearly twice as high for certain demographic groups. Choosing an inclusive model is essential.
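Comparing candidate models is easier with a number. Word error rate (WER), the word-level edit distance between a reference transcript and the model's output divided by the reference length, is the standard metric. The sketch below is a plain implementation with simple whitespace tokenization and lowercasing, which is an assumption; production evaluations usually apply more careful text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, computed one row at a time.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)
```

Running this over your own recordings, grouped by accent, domain, or noise level, shows whether a model performs evenly across the callers you actually serve.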

Manage Your Costs

Continuous STT streaming is a resource-intensive and potentially expensive process. With usage-based pricing, you are typically billed for every second that the stream is active, whether or not anyone is speaking. Your application must have clear logic for starting and stopping the stream. For example, in a meeting transcription app, the stream should only be active when the meeting is actually in session.
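A small meter that wraps the start and stop calls makes the cost of an always-on stream visible during development. The per-minute price below is a made-up figure for illustration, and the class is a hypothetical helper, not part of any SDK; actual billing rules vary by provider.

```python
from typing import Optional


class StreamMeter:
    """Accumulates the seconds a stream was active and estimates the cost."""

    def __init__(self, price_per_minute: float) -> None:
        self.price_per_minute = price_per_minute  # hypothetical rate
        self.billed_seconds = 0.0
        self._started_at: Optional[float] = None

    def start(self, now: float) -> None:
        if self._started_at is None:  # ignore a start while already running
            self._started_at = now

    def stop(self, now: float) -> None:
        if self._started_at is not None:
            self.billed_seconds += now - self._started_at
            self._started_at = None

    def cost(self) -> float:
        """Estimated cost of all completed streaming intervals."""
        return self.billed_seconds / 60 * self.price_per_minute
```

Calling `start` when the meeting begins and `stop` when it ends (or when the room has been idle for a while) keeps the billed time aligned with the time that actually produced useful transcription.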


Conclusion

The ability to perform continuous, real-time speech recognition is one of the most powerful and transformative capabilities in the modern AI landscape. It is the technology that is enabling a new class of “always-on” applications that can listen to, understand, and assist us in the background of our daily lives.

But building these systems is a significant technical challenge. It requires a move away from the simple, transactional model of the past and an embrace of a more complex, streaming-first architecture. A modern voice recognition SDK is the essential tool that makes this possible.

By providing a resilient, high-performance, and developer-friendly abstraction layer, it handles the immense complexity of building stable voice pipelines, allowing developers to focus on what they do best: creating the intelligent and innovative applications of the future.

Want to do a technical deep dive into our continuous streaming architecture and see a live demo of our SDK in action? Schedule a demo for FreJun Teler.


Frequently Asked Questions (FAQs)

What is the difference between a standard and a continuous voice recognition SDK?

A standard voice recognition SDK handles short, command-based audio after speech ends, while a continuous SDK processes live, unending audio streams in real time.

What are some real-world examples of continuous recognition?

Real-world examples include live transcription services for meetings (like Otter.ai), real-time captioning for broadcast media, and agent-assist platforms that listen to contact center calls to provide real-time guidance to the human agent.

What is a voice pipeline?

A voice pipeline is the end-to-end audio path from microphone to STT engine. A stable pipeline keeps real-time streaming uninterrupted along that entire path.

What is the difference between interim and final results?

In a continuous STT streaming system, the STT engine provides “interim” results, which are its fast, best-guess transcriptions while the user is still speaking. Once the user pauses, it provides a “final” result, which is a more accurate and context-aware transcription of that phrase.

How do you handle persistent audio capture in a noisy environment?

Persistent audio capture in a noisy environment is a challenge. The solution is a combination of using a high-quality microphone with noise cancellation and choosing an STT model that has been specifically trained to be robust to background noise.

Why is a model-agnostic platform important?

It is important because the performance of different STT models can vary significantly based on the use case (e.g., medical vs. financial terminology, noisy vs. quiet environment). A model-agnostic platform like FreJun AI gives you the freedom to choose the best-performing STT engine for your specific needs.

How does FreJun AI help with building stable voice pipelines?

The FreJun AI voice recognition SDK is designed specifically for this challenge. It provides a resilient client that handles the complexities of persistent audio capture and network reconnection, and it connects to our high-performance, globally distributed Teler engine, which acts as a scalable media gateway, ensuring the entire pipeline is stable and reliable.