In the early days of the LLM revolution, building a voice bot involved a relatively straightforward choice: you picked a single, powerful Large Language Model (like GPT-4) and built your entire application around it. This “one model to rule them all” approach was a fantastic starting point, but as the AI landscape matures, a far more sophisticated and powerful strategy is emerging.

This is the era of the Multi-LLM architecture. Instead of being locked into a single model, savvy developers are now building intelligent LLM routing engines that dynamically choose the best model for the task at hand, in real time, in the middle of a conversation.

This is not just about chasing the latest, most powerful model. It is a strategic approach to building cost-efficient AI pipelines that are faster, more resilient, and more intelligent. The future of voice AI is not a single, monolithic brain, but a flexible, multi-brained system that can leverage the unique strengths of a diverse array of models.

This guide will explore why smart inference selection is becoming a non-negotiable requirement for any serious, production-grade voice bot.

Why is the “Single-Model” Approach Showing Its Limits?

The “one model to rule them all” approach was a necessary first step, but it is inherently inefficient and inflexible. Relying on a single, large-scale LLM (like GPT-4 or Claude 3 Opus) for every turn of a conversation is like using a sledgehammer to crack a nut.

The Crippling Cost of “Always-On” Power

The most advanced LLMs are computational marvels, but they are also expensive to run. Commercial LLM APIs typically bill per token, so every call to a top-tier model adds to your bill, no matter how trivial the request.

- The Problem: Many turns in a typical conversation are actually very simple. A user might say “yes,” “no,” or ask a basic question that does not require the deep reasoning capabilities of a flagship model. Using your most expensive model to process these simple inputs is a massive financial drain.

- The Inefficiency: It is the equivalent of having your most senior, most expensive neurosurgeon on call 24/7 to answer basic questions about headaches.
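A quick back-of-the-envelope calculation makes the drain concrete. The sketch below is illustrative only: the per-turn prices, the traffic volume, and the share of simple turns are hypothetical placeholders, not real provider pricing.

```python
# Hypothetical per-turn costs in USD; substitute your providers' real pricing.
FLAGSHIP_COST_PER_TURN = 0.010
SMALL_COST_PER_TURN = 0.0005


def monthly_cost(turns: int, simple_share: float, route_simple_to_small: bool) -> float:
    """Estimate monthly spend for a given number of conversational turns.

    simple_share is the fraction of turns (0.0 to 1.0) that are simple
    enough to be handled by the small model.
    """
    simple_turns = turns * simple_share
    complex_turns = turns - simple_turns
    # Simple turns are only cheaper when they are actually routed to the small model.
    simple_price = SMALL_COST_PER_TURN if route_simple_to_small else FLAGSHIP_COST_PER_TURN
    return simple_turns * simple_price + complex_turns * FLAGSHIP_COST_PER_TURN


# Assumed workload: one million turns per month, 70% of them simple.
single_model = monthly_cost(1_000_000, simple_share=0.7, route_simple_to_small=False)
routed = monthly_cost(1_000_000, simple_share=0.7, route_simple_to_small=True)

print(f"single model: ${single_model:,.2f}")
print(f"with routing: ${routed:,.2f}")
```

With these made-up numbers, the routed pipeline costs roughly a third of the single-model one. The real ratio depends entirely on your own traffic mix and pricing.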

The Inescapable Speed-vs-Intelligence Trade-Off

In the world of LLMs, there is almost always a direct trade-off between the model’s intelligence (its reasoning ability) and its speed (its “time to first token” or inference latency).

- The Problem: The most powerful models are often the slowest. While you might need that deep reasoning for a complex, multi-step problem, using that slow model for a simple, quick-turnaround question introduces unnecessary latency into the conversation. For a voice bot, this added latency is a user experience killer.

- The Inflexibility: A single-model architecture forces you to make a single, universal compromise between speed and intelligence for your entire application.

What is a Multi-LLM Routing Engine?

A Multi-LLM routing engine, sometimes called a “model router” or a smart inference selection system, is a layer of intelligence that sits in front of your various LLM providers. Its job is to act as an intelligent traffic cop.

Before a user’s prompt is sent to an LLM, it is first sent to the router. The router’s job is to analyze the prompt and, based on a set of predefined rules or its own AI model, decide which LLM is the best fit for that specific request.

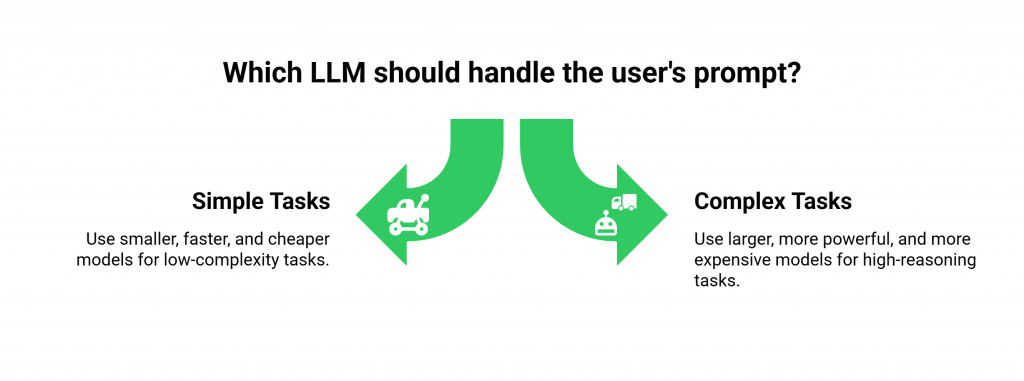

The core logic of the router is to send:

- Simple, low-complexity tasks to smaller, faster, and cheaper models.

- Complex, high-reasoning tasks to larger, more powerful, and more expensive models.

This creates hybrid LLM workflows that are optimized for both cost and performance in real time.
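As a rough illustration of that core logic, the router can be thought of as a thin dispatch layer in front of the model clients. In the sketch below, everything is an assumption made for illustration: `small_model` and `large_model` are stubs standing in for real provider API calls, and the word-count heuristic in `estimate_complexity` is deliberately naive.

```python
from typing import Callable, Dict


# Stub model clients; in a real system these would wrap provider API calls.
def small_model(prompt: str) -> str:
    return f"[small-fast-model] {prompt}"


def large_model(prompt: str) -> str:
    return f"[large-reasoning-model] {prompt}"


# Map each complexity tier to the client that should handle it.
MODELS: Dict[str, Callable[[str], str]] = {
    "simple": small_model,
    "complex": large_model,
}


def estimate_complexity(prompt: str) -> str:
    """Toy heuristic: treat short prompts as simple. The threshold is arbitrary."""
    return "simple" if len(prompt.split()) <= 6 else "complex"


def route(prompt: str) -> str:
    """Pick a tier for the prompt, then forward it to that tier's model."""
    tier = estimate_complexity(prompt)
    return MODELS[tier](prompt)


print(route("yes"))
print(route("My router keeps dropping the connection after the firmware update, what should I check?"))
```

Keeping the tier decision separate from the dispatch table means the heuristic can later be swapped for hand-written rules or a trained classifier without touching the model clients.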

How Do You Build and Implement a Model Routing System?

Building voice bots with a multi-LLM architecture involves adding a new, strategic layer to your application’s “brain” (your AgentKit).

The “Triage” Model: Rule-Based Routing

This is the simplest and most common approach to building model switching systems. It involves creating a set of rules based on the content or the context of the user’s prompt.

- Intent-Based Routing: You can pre-classify the types of intents your bot will handle, then route simple yes/no or FAQ queries to fast, low-cost models and complex troubleshooting to powerful models like Claude 3 Opus.

- Keyword-Based Routing: The router can look for specific keywords in the user’s prompt. A prompt containing the word “summarize” might be sent to a model known for its strong summarization skills, while a prompt containing “write code” could be sent to a model optimized for coding.

- State-Based Routing: The router can also consider the current state of the conversation. If the bot has just asked a simple clarifying question, it can be programmed to expect a simple answer and route the response to a faster model.
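The three rule types above can be combined in a single function. The following is a minimal sketch, not a reference implementation: the `Turn` structure, the intent labels, and the model names (`fast-model`, `powerful-model`, and so on) are hypothetical placeholders, and the intent label is assumed to come from some upstream classifier.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical intent labels that are considered cheap to answer.
SIMPLE_INTENTS = {"confirmation", "faq"}

# Hypothetical keyword rules mapping a phrase to a specialized model.
KEYWORD_ROUTES = {
    "summarize": "summarization-model",
    "write code": "coding-model",
}


@dataclass
class Turn:
    prompt: str
    intent: Optional[str] = None          # label from an upstream intent classifier, if any
    awaiting_simple_answer: bool = False  # True right after the bot asked a clarifying question


def choose_model(turn: Turn) -> str:
    # State-based rule: a simple answer is expected, so use the fast model.
    if turn.awaiting_simple_answer:
        return "fast-model"

    # Keyword-based rule: look for phrases that hint at a specialized task.
    text = turn.prompt.lower()
    for keyword, model in KEYWORD_ROUTES.items():
        if keyword in text:
            return model

    # Intent-based rule: known simple intents go to the fast model.
    if turn.intent in SIMPLE_INTENTS:
        return "fast-model"

    # Anything unrecognized falls back to the most capable model.
    return "powerful-model"


print(choose_model(Turn("yes", awaiting_simple_answer=True)))
print(choose_model(Turn("Please summarize our call")))
print(choose_model(Turn("My device reboots randomly", intent="troubleshooting")))
```

The order of the rules is a design choice. Here the conversation state wins over keywords, and anything unmatched defaults to the powerful model, which trades some cost for safety on unfamiliar prompts.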

This table provides an example of a simple, rule-based routing strategy.

| User Intent / Prompt | Best-Fit Model Characteristics | Example Model | Justification |
| --- | --- | --- | --- |
| Simple Confirmation (“Yes”) | Fast, very cheap. | Groq LLaMA3-8b | The task requires near-instant response, not deep reasoning. |
| Basic FAQ (“What are your hours?”) | Fast, cheap, good instruction following. | GPT-3.5-Turbo / Claude 3 Sonnet | The answer is straightforward and can be pulled from a knowledge base. |
| Complex Troubleshooting | High reasoning, multi-step logic. | GPT-4o / Claude 3 Opus | The task requires deep understanding and problem-solving skills. |
| Summarize a Conversation | Long context window, strong summarization. | Gemini 1.5 Pro | The model is specifically optimized for this type of task. |
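A strategy like this is often easiest to maintain as plain data rather than as nested conditionals. The sketch below encodes the table as a lookup. The intent keys are hypothetical, the model strings are readable labels taken from the table rather than exact provider model IDs, and the choice of fallback model is an assumption.

```python
# Intent label -> model label, mirroring the routing table.
# These strings are illustrative labels, not exact provider model identifiers.
ROUTING_TABLE = {
    "simple_confirmation": "llama3-8b",
    "basic_faq": "gpt-3.5-turbo",
    "complex_troubleshooting": "gpt-4o",
    "summarize_conversation": "gemini-1.5-pro",
}

# Assumption: unknown intents fall back to a high-reasoning model.
DEFAULT_MODEL = "gpt-4o"


def model_for_intent(intent: str) -> str:
    """Return the configured model for an intent, or the default if none is set."""
    return ROUTING_TABLE.get(intent, DEFAULT_MODEL)


print(model_for_intent("basic_faq"))
print(model_for_intent("billing_dispute"))  # not in the table, so the default is used
```

Because the mapping is data, it can be moved into a config file and adjusted as pricing or model quality changes, without redeploying the routing code.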

The “AI-for-the-AI” Model: AI-Based Routing

A more advanced approach is to use a smaller, specialized AI model as the router itself.

- How it Works: The router is a fine-tuned classification model. It is trained on a dataset of prompts, and its only job is to look at a new prompt and classify it (e.g., “simple,” “complex,” “creative”).

- The Advantage: This can be more flexible and accurate than a rigid set of hand-written rules.

- The Trade-Off: It requires the additional overhead of training and maintaining the router model. A report on enterprise AI adoption found that 87% of AI projects never make it into production, often because of this kind of operational complexity, which makes the simpler rule-based approach a better starting point for most teams.
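To give a feel for what a classification-based router does, here is a toy stand-in written in plain Python. It is a small bag-of-words naive Bayes classifier, not a fine-tuned language model, and the training prompts, labels, and model names are all invented for illustration. A production router would be trained on far more data, typically with a proper ML library.

```python
import math
from collections import Counter, defaultdict

# Tiny, made-up training set of (prompt, label) pairs.
TRAINING_DATA = [
    ("yes", "simple"),
    ("no thanks", "simple"),
    ("what are your hours", "simple"),
    ("my internet drops every evening after the update", "complex"),
    ("the app crashes when i upload a large file", "complex"),
    ("write a poem about our product", "creative"),
    ("draft a friendly welcome message", "creative"),
]

# Hypothetical mapping from predicted label to a model tier.
LABEL_TO_MODEL = {
    "simple": "fast-model",
    "complex": "powerful-model",
    "creative": "creative-model",
}


class PromptClassifier:
    """Multinomial naive Bayes over whitespace tokens with add-one smoothing."""

    def __init__(self) -> None:
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        self.label_counts = Counter()            # label -> number of examples
        self.vocab = set()

    def fit(self, examples):
        for text, label in examples:
            words = text.lower().split()
            self.word_counts[label].update(words)
            self.label_counts[label] += 1
            self.vocab.update(words)
        return self

    def predict(self, text: str) -> str:
        words = text.lower().split()
        total_examples = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, count in self.label_counts.items():
            # Log prior plus smoothed log likelihood of each word.
            score = math.log(count / total_examples)
            denom = sum(self.word_counts[label].values()) + len(self.vocab)
            for word in words:
                score += math.log((self.word_counts[label][word] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


classifier = PromptClassifier().fit(TRAINING_DATA)


def route(prompt: str) -> str:
    """Classify the prompt, then look up the model for that class."""
    return LABEL_TO_MODEL[classifier.predict(prompt)]


print(route("yes"))
print(route("the app crashes after the update"))
```

Even in this toy form, the operational cost is visible: the training data has to be collected, labeled, and refreshed as user behavior changes, which is exactly the maintenance burden a rule-based router avoids.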

Ready to build a more cost-effective and performant AI pipeline? Sign up for FreJun AI

What Are the Strategic Benefits of a Multi-LLM Architecture?

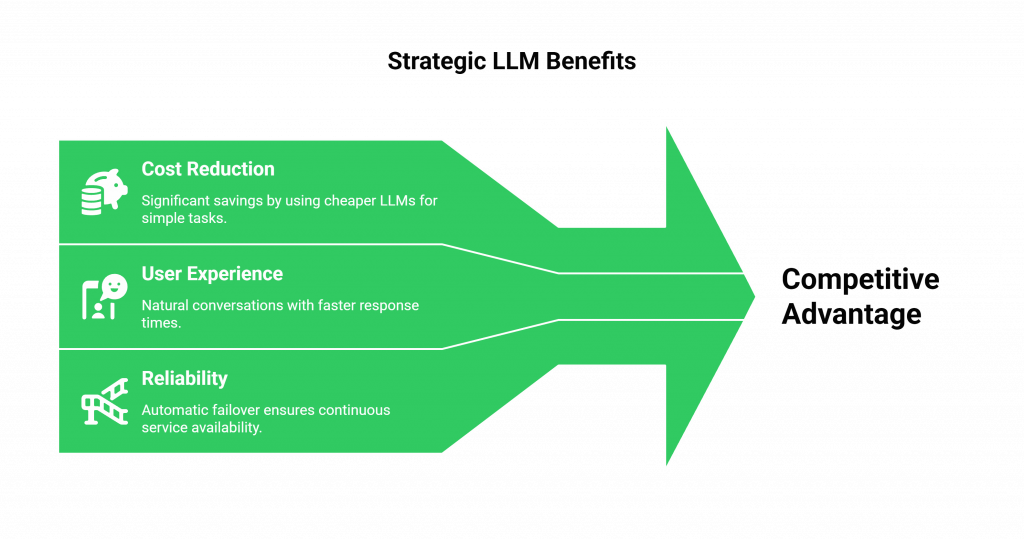

Adopting hybrid LLM workflows is more than just a clever technical trick; it is a powerful business strategy that provides a significant competitive advantage.

Massive Cost Reduction

This is the most immediate and tangible benefit. By routing the majority of your simple, high-volume conversational turns to cheaper models, you can dramatically reduce your overall AI spend. For a voice bot that handles millions of interactions, this can translate into hundreds of thousands of dollars in savings, creating a far more cost-efficient AI pipeline.

A Superior, Lower-Latency User Experience

By using faster models for the quick, back-and-forth parts of a conversation, you can significantly reduce the average response time of your voice bot. This lower latency makes the conversation feel more natural, more responsive, and less robotic, which is a huge driver of user satisfaction.

Increased Reliability and Resilience

Relying on a single LLM provider is a single point of failure. What happens if your chosen provider has a major API outage? With a multi-LLM architecture, you can build in automatic failover.

If a call to your primary model (e.g., GPT-4o) fails, your routing engine can automatically retry the request with a secondary model from a different provider (e.g., Claude 3 Opus), making your entire system far more resilient.
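The failover idea can be sketched as a loop over an ordered list of providers. In this illustrative example, `primary_model` and `secondary_model` are stubs (the primary simulates an outage), and `AllProvidersFailed` is a hypothetical exception name. Real code would catch the specific error types raised by each provider's SDK and usually add timeouts.

```python
from typing import Callable, List, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has failed."""


def call_with_failover(prompt: str, providers: Sequence[Callable[[str], str]]) -> str:
    """Try each provider in order and return the first successful response."""
    errors: List[str] = []
    for provider in providers:
        try:
            return provider(prompt)
        except Exception as exc:  # in production, catch the SDK's specific error types
            name = getattr(provider, "__name__", "provider")
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub clients standing in for two different providers.
def primary_model(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def secondary_model(prompt: str) -> str:
    return f"[secondary] {prompt}"


reply = call_with_failover("Where is my order?", [primary_model, secondary_model])
print(reply)
```

For a voice bot, it is worth bounding the total time spent on retries, since a caller waiting in silence through several failed attempts may be worse off than one who hears a quick fallback message.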

What is the Role of the Voice Infrastructure?

To implement these sophisticated model switching systems, your underlying voice infrastructure must be incredibly flexible. This is where a model-agnostic platform like FreJun AI is essential. Our platform is designed to be the “voice” for any AI “brain” you choose to build. We do not care if your AgentKit is using one LLM or five.

Our job is to provide the high-performance, low-latency connection between the phone call and your application, giving you the complete freedom to build the most advanced and cost-efficient AI pipeline imaginable.

Conclusion

The first chapter of building voice bots was about proving that an AI could have a conversation. The next, more mature chapter is about making that conversation as efficient, performant, and intelligent as possible. The “single model” approach is a relic of the early days. The future belongs to a more sophisticated, multi-brained architecture.

By building intelligent LLM routing engines that can dynamically choose the right tool for the right job, developers can create voice bots that are not only smarter but also faster, more resilient, and dramatically more cost-effective.

This strategy of smart inference selection is no longer a niche optimization; it is becoming the new standard for professional, production-grade voice AI.

Want to do a technical deep dive into how our model-agnostic platform can support your multi-LLM strategy? Schedule a demo for FreJun Teler.

Frequently Asked Questions (FAQs)

An LLM routing engine is a piece of software that acts as an intelligent “traffic cop.” It analyzes an incoming user prompt and, based on a set of rules, decides which Large Language Model (e.g., GPT-4, Claude 3, Llama 3) is the best fit to handle that specific request.

The primary benefit of multi-LLM routing is a massive reduction in operational costs. By routing simple, high-volume requests to cheaper and faster LLMs, you can create a much more cost-efficient AI pipeline and significantly reduce your AI spend.

Multi-LLM routing improves the user experience by reducing latency. For the simple, back-and-forth parts of a conversation, the router can use a very fast model, which makes the voice bot’s responses feel more instantaneous and natural.

Hybrid LLM workflows are conversational designs that use a combination of different LLMs to complete a task. For example, a fast model might be used for the initial greeting and data gathering, while a more powerful model is used for the final, complex problem-solving step.

The simplest approach to building model switching systems is to use a rule-based router. This involves creating a set of “if-then” rules that look for certain keywords or intents in the user’s prompt to decide which model to call.

Smart inference selection is another term for Multi-LLM routing. It is the process of intelligently selecting the most appropriate AI model to run an “inference” (to process a prompt and generate a response) based on the specific characteristics of the prompt.

This strategy does not depend on self-hosted or custom models. It works with API-based, commercially available LLMs: your routing engine simply decides which provider’s API endpoint to call for each specific request.