In 2025, developers working on conversational AI know that voice is no longer just an optional feature. It defines the user experience and shapes how people interact with technology. Among the leading platforms, ElevenLabs.io and Deepgram.com frequently come up in discussions. Each brings something unique to the table. ElevenLabs sets the standard for lifelike and emotionally engaging synthetic voices, while Deepgram leads in ultra-fast and accurate transcription.

Rather than competing, the two are best seen as complementary. ElevenLabs makes machines sound human, while Deepgram ensures that machines can reliably understand humans. Together, they form two critical pieces of a modern conversational stack. However, both depend on something less visible: a strong, reliable voice transport layer. That is where FreJun comes in, providing the transport that makes these APIs production-ready.

Table of contents

- The Two Sides of Conversational AI

- The Real Bottleneck: Beyond APIs

- ElevenLabs.io: The Standard for Voice Generation

- Deepgram.com: The Leader in Speech Recognition

- ElevenLabs.io vs Deepgram.com: A Direct Comparison

- Why the Voice Transport Layer Matters

- DIY Stack vs FreJun

- Building the Ultimate Voice Agent in 2025

- Final Thoughts

- Frequently Asked Questions (FAQs)

The Two Sides of Conversational AI

When deciding which tool to integrate, developers often frame the question as ElevenLabs.io vs Deepgram.com. That comparison misses the bigger picture: the two platforms address different needs.

ElevenLabs is the voice of your application. It takes text and transforms it into rich, natural audio that can carry emotion, tone, and subtle personality. Deepgram, on the other hand, is the ear. It listens in real time, converts human speech into text, and passes it to the logic layer of your AI.

This means the real decision for developers is not about choosing one over the other, but about how to orchestrate them together effectively. And to do that, you must also consider the underlying infrastructure that connects users to your AI in real-world environments.

The Real Bottleneck: Beyond APIs

Most developers assume that once they have a good Text-to-Speech service like ElevenLabs and an accurate Speech Recognition service like Deepgram, their application will just work. In practice, the most common failures happen in the layer that connects everything.

Think of this as the nervous system of your AI. It is the real-time audio transport between the user and your models. Without a reliable and low-latency transport layer, the conversation breaks down, no matter how advanced your ASR or TTS models are.

Common problems include:

- Latency build-up: Even with Deepgram transcribing in under 300ms and ElevenLabs generating audio in about 75ms, every extra hop through networks and servers adds delay, and together these hops quickly create noticeable pauses that ruin natural conversation.

- Degraded audio quality: Real-world phone lines are messy. Background noise, jitter, and packet loss can reduce transcription accuracy and make voices sound distorted.

- Infrastructure overhead: Developers spend countless hours dealing with SIP trunks, call routing, and redundancy instead of focusing on the intelligence of their AI.

This is why a dedicated voice transport layer such as FreJun is not a luxury but a requirement.
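A back-of-the-envelope budget shows how the latency build-up adds up over one conversational turn. This is a sketch under stated assumptions: only the Deepgram (under 300ms) and ElevenLabs (about 75ms) figures come from the text above; the network hops, the LLM time, and the 800 ms target are illustrative placeholders, not measurements.

```python
# Hypothetical per-turn latency budget for a phone-based voice agent.
# Only the ASR (~300 ms) and TTS (~75 ms) figures come from the article;
# every other number, including the target, is an illustrative assumption.
ASSUMED_STAGES_MS = {
    "caller -> carrier network": 40,        # assumption
    "carrier -> your server": 30,           # assumption
    "Deepgram transcription (ASR)": 300,    # article: "under 300ms"
    "LLM response (first tokens)": 350,     # assumption
    "ElevenLabs synthesis (TTS)": 75,       # article: "about 75ms"
    "server -> carrier -> caller": 70,      # assumption
}


def total_latency_ms(stages: dict[str, int]) -> int:
    """In a strictly sequential pipeline, per-stage delays simply add up."""
    return sum(stages.values())


def report(stages: dict[str, int], target_ms: int = 800) -> str:
    """Summarize the total delay against a (hypothetical) per-turn target."""
    total = total_latency_ms(stages)
    status = "within" if total <= target_ms else "over"
    return f"{total} ms per turn ({status} the {target_ms} ms target)"


if __name__ == "__main__":
    for name, ms in ASSUMED_STAGES_MS.items():
        print(f"{name:30} {ms:4d} ms")
    print(report(ASSUMED_STAGES_MS))  # 865 ms per turn (over the 800 ms target)
```

Even though each model is fast on its own, the hops around them dominate the total. Summing stages in sequence is the pessimistic case; streaming transcription and synthesis let stages overlap, which only helps if the transport layer itself adds little delay.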

ElevenLabs.io: The Standard for Voice Generation

ElevenLabs has quickly become the platform of choice for developers who care deeply about voice quality. Its strength lies in making synthetic voices sound nearly indistinguishable from human speech.

Key strengths of ElevenLabs:

- Voices that carry emotional nuance and realism across many languages.

- Tools for developers to fine-tune pacing, tone, and delivery for branding or character voices.

- Low-latency models like Flash that can generate audio in real time.

- APIs and SDKs designed for creative use cases such as gaming, audiobooks, and AI-powered assistants.

Best use cases for ElevenLabs:

- Narrating audiobooks and media projects.

- Adding branded voices to conversational AI assistants.

- Creating immersive character dialogue in gaming.

- Providing natural-sounding multilingual dubbing.
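For a sense of what integrating ElevenLabs looks like, here is a minimal sketch that builds a text-to-speech request with only the Python standard library. The endpoint path, the `xi-api-key` header, and the `voice_settings` fields reflect ElevenLabs' public API reference as we understand it; the `eleven_flash_v2_5` model ID and the `ulaw_8000` output format (8 kHz μ-law, the usual phone-line format) are assumptions to verify against the current docs, and `YOUR_VOICE_ID` is a placeholder. The request is built but not sent, so the example runs offline.

```python
import json
import urllib.parse
import urllib.request

# ElevenLabs text-to-speech endpoint; {voice_id} is filled in per request.
ELEVENLABS_TTS_URL = "https://api.elevenlabs.io/v1/text-to-speech/{voice_id}"


def build_tts_request(
    api_key: str,
    voice_id: str,
    text: str,
    model_id: str = "eleven_flash_v2_5",  # assumption: a low-latency Flash model ID
    output_format: str = "ulaw_8000",     # assumption: 8 kHz mu-law for phone calls
) -> urllib.request.Request:
    """Build, but do not send, an ElevenLabs text-to-speech request."""
    query = urllib.parse.urlencode({"output_format": output_format})
    url = f"{ELEVENLABS_TTS_URL.format(voice_id=voice_id)}?{query}"
    body = {
        "text": text,
        "model_id": model_id,
        # Delivery controls; these values are placeholders to tune per voice.
        "voice_settings": {"stability": 0.5, "similarity_boost": 0.75},
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_tts_request("YOUR_API_KEY", "YOUR_VOICE_ID", "Thanks for calling!")
    print(req.get_method(), req.full_url)
    # Sending it requires a valid key and network access:
    # with urllib.request.urlopen(req) as resp:
    #     audio_bytes = resp.read()
```

Requesting audio in the phone line's own format avoids a resampling step between ElevenLabs and the call.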

Deepgram.com: The Leader in Speech Recognition

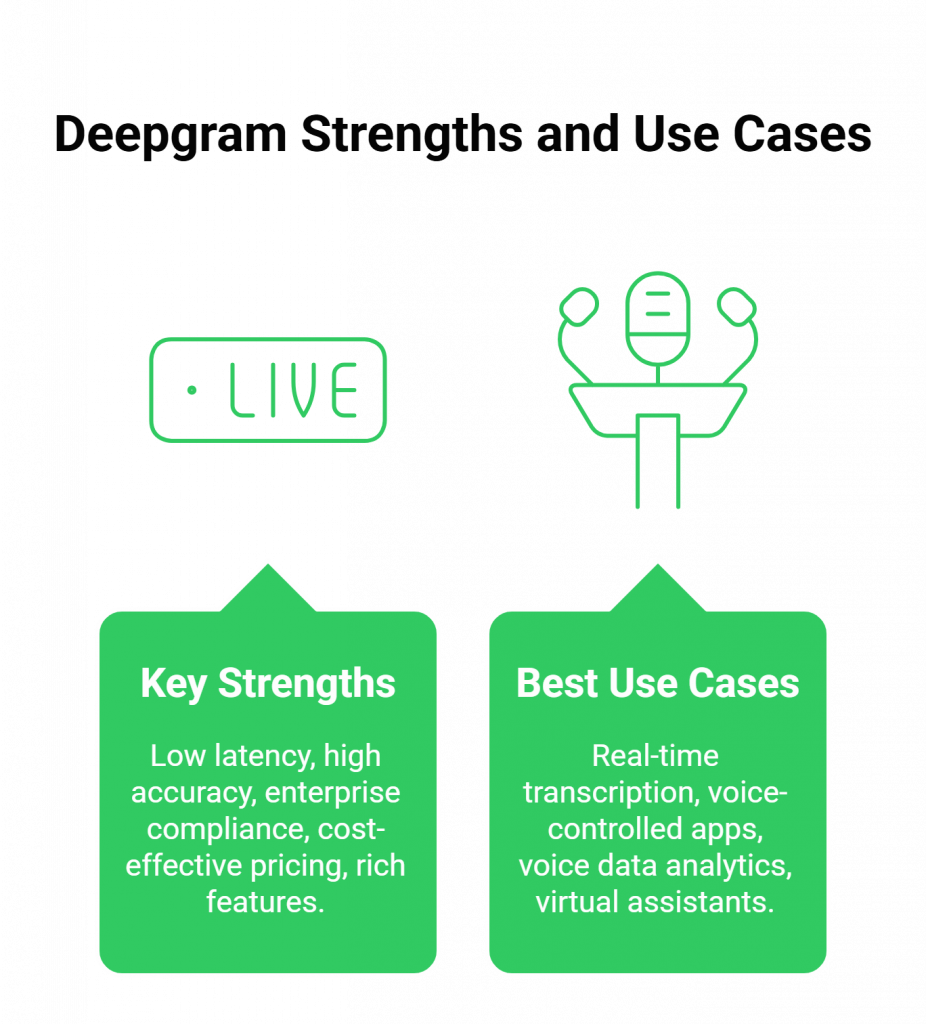

Deepgram has established itself as a go-to solution for Automatic Speech Recognition (ASR). Its main focus is understanding human speech at scale and with minimal delay.

Key strengths of Deepgram:

- Sub-300ms latency for real-time transcription, ideal for live interactions.

- High accuracy even in noisy conditions, making it suitable for call centers and business environments.

- Enterprise-ready compliance with HIPAA and other standards.

- Cost-effective pricing, with some models significantly cheaper than competitors.

- Rich features such as speaker separation, sentiment analysis, and word-level timestamps.

Best use cases for Deepgram:

- Real-time transcription in call centers and meetings.

- Voice-controlled apps where accuracy is critical.

- Analytics from voice data at scale.

- Virtual assistants that must understand diverse accents and noisy environments.
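To show the request shape and how features like speaker separation and word-level timestamps surface, here is a minimal sketch against Deepgram's pre-recorded `/v1/listen` endpoint using only the standard library. The endpoint, the `Token` authorization header, and the `smart_format` and `diarize` query parameters follow Deepgram's public documentation as we understand it; the `nova-2` model name is an assumption (check which models are current), and `SAMPLE_RESPONSE` is a hand-written, trimmed illustration, not real API output. Live calls would use Deepgram's streaming endpoint instead.

```python
import json
import urllib.parse
import urllib.request

DEEPGRAM_LISTEN_URL = "https://api.deepgram.com/v1/listen"


def build_listen_request(api_key: str, wav_bytes: bytes) -> urllib.request.Request:
    """Build, but do not send, a pre-recorded transcription request for WAV audio."""
    params = {
        "model": "nova-2",       # assumption: choose a current model from Deepgram's docs
        "smart_format": "true",  # punctuation and number formatting
        "diarize": "true",       # speaker separation
    }
    url = f"{DEEPGRAM_LISTEN_URL}?{urllib.parse.urlencode(params)}"
    return urllib.request.Request(
        url,
        data=wav_bytes,
        headers={"Authorization": f"Token {api_key}", "Content-Type": "audio/wav"},
        method="POST",
    )


def extract_transcript(response: dict) -> tuple[str, list[tuple[str, float, float]]]:
    """Return the top transcript and (word, start_s, end_s) tuples from a response."""
    alt = response["results"]["channels"][0]["alternatives"][0]
    words = [(w["word"], w["start"], w["end"]) for w in alt.get("words", [])]
    return alt["transcript"], words


# Hand-written, heavily trimmed response used for illustration only.
SAMPLE_RESPONSE = {
    "results": {
        "channels": [
            {
                "alternatives": [
                    {
                        "transcript": "Reset my password.",
                        "words": [
                            {"word": "reset", "start": 0.1, "end": 0.4},
                            {"word": "my", "start": 0.4, "end": 0.55},
                            {"word": "password", "start": 0.55, "end": 1.1},
                        ],
                    }
                ]
            }
        ]
    }
}

if __name__ == "__main__":
    req = build_listen_request("YOUR_API_KEY", b"")  # real use: the WAV file's bytes
    print(req.get_method(), req.full_url)
    # Sending it requires a valid key and network access:
    # with urllib.request.urlopen(req) as resp:
    #     response = json.load(resp)
    text, words = extract_transcript(SAMPLE_RESPONSE)
    print(text, words)
```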

ElevenLabs.io vs Deepgram.com: A Direct Comparison

When placed side by side, the distinction becomes clearer.

- Core Functionality: ElevenLabs is focused on Text-to-Speech. Deepgram is focused on Speech-to-Text. They serve different, complementary roles.

- Performance: Both are optimized for real time. ElevenLabs excels in low-latency audio synthesis, while Deepgram shines in transcription speed.

- Developer Experience: Each offers well-documented APIs and SDKs designed with developers in mind.

- Cost-effectiveness: Deepgram’s ASR is especially budget-friendly, while ElevenLabs offers flexible subscription models for TTS-heavy applications.

This means the ElevenLabs.io vs Deepgram.com question is not about competition but about how best to combine them in your stack.

Why the Voice Transport Layer Matters

Imagine having the best ears and the best mouth but no reliable nervous system. That is what happens if you use ElevenLabs and Deepgram without a strong transport layer.

FreJun solves this problem by acting as the dedicated backbone of your stack. Its developer-first APIs capture live audio from phone calls, send it to Deepgram for transcription, route it to your LLM for reasoning, and finally forward the response to ElevenLabs for synthesis. The processed audio is then instantly delivered back to the user over the call.
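What "capture live audio from phone calls" means at the byte level can be sketched as follows, assuming narrowband G.711 μ-law telephony audio (8,000 samples per second, one byte per sample) packetized in 20 ms frames. These are common telephony defaults, not a documented FreJun wire format, and the constant and function names are illustrative.

```python
from typing import Iterator

# Narrowband telephony (G.711 mu-law): 8,000 samples/s, 1 byte per sample.
SAMPLE_RATE_HZ = 8000
BYTES_PER_SAMPLE = 1
# 20 ms is a common packetization interval; your carrier or transport may differ.
FRAME_MS = 20


def frame_size_bytes(sample_rate: int = SAMPLE_RATE_HZ,
                     bytes_per_sample: int = BYTES_PER_SAMPLE,
                     frame_ms: int = FRAME_MS) -> int:
    """Bytes in one frame: samples per second x bytes per sample x frame duration."""
    return sample_rate * bytes_per_sample * frame_ms // 1000


def iter_frames(audio: bytes, frame_bytes: int) -> Iterator[bytes]:
    """Split a buffer into fixed-size frames; the last one may be shorter."""
    for start in range(0, len(audio), frame_bytes):
        yield audio[start:start + frame_bytes]


if __name__ == "__main__":
    size = frame_size_bytes()
    one_second = bytes(SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)  # placeholder buffer, 1 s long
    frames = list(iter_frames(one_second, size))
    print(size, len(frames))  # 160 50
```

Each 160-byte frame carries only 20 ms of speech, so a transport layer that delays, reorders, or drops frames shows up directly as lag or gaps in what Deepgram hears and what the caller hears back.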

With FreJun, developers can focus on building intelligence and user experience instead of telephony infrastructure.

DIY Stack vs FreJun

| Feature / Aspect | DIY Stack (Telephony + Deepgram + ElevenLabs) | FreJun AI Transport Layer |
|---|---|---|
| Infrastructure | Complex and fragile integrations | Unified, developer-first API |
| Latency | Delay compounds across services | Optimized for real-time audio |
| Scalability | Requires building redundant systems | Global infrastructure with enterprise uptime |
| Developer Focus | Time wasted on telephony issues | Time spent on AI logic and features |
| Support | Fragmented across vendors | Dedicated expert support |

Building the Ultimate Voice Agent in 2025

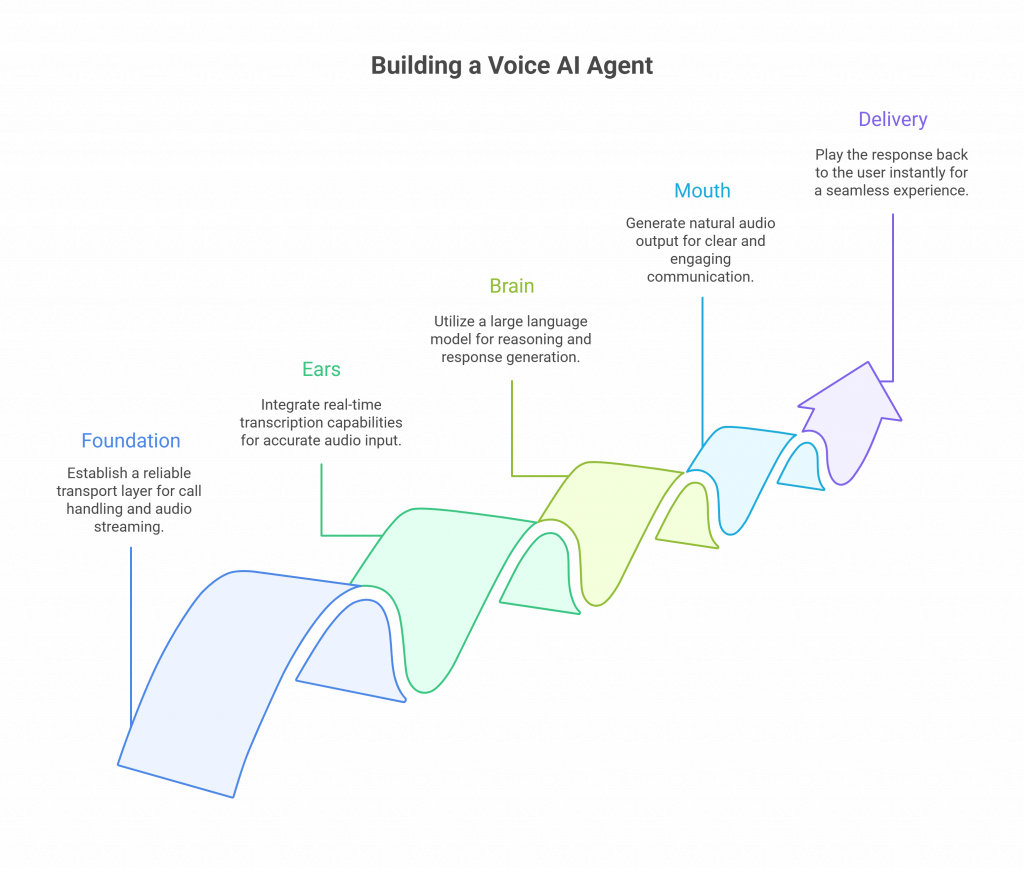

A modern, production-grade voice AI agent should follow this layered approach:

- Foundation (Transport Layer): Use FreJun for all call handling and low-latency audio streaming.

- Ears (ASR Layer): Integrate Deepgram for real-time transcription.

- Brain (Logic Layer): Use your chosen LLM to reason and generate responses.

- Mouth (TTS Layer): Generate natural audio with ElevenLabs.

- Delivery: Stream the response back to the user through FreJun.

This modular stack ensures reliability, scalability, and the best possible experience for end users.
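The layered flow can be sketched as a small orchestrator. This is a minimal sketch under stated assumptions: the `VoiceAgent` class and its four callables are hypothetical names, not FreJun, Deepgram, or ElevenLabs APIs; in a real agent each callable would wrap the corresponding client, and the fakes in the usage block stand in for them. It also records how long each stage takes, which makes the latency build-up measurable.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical layer signatures; none of these are vendor APIs.
Transcribe = Callable[[bytes], str]   # Ears: caller audio -> text (e.g. wraps Deepgram)
Think = Callable[[str], str]          # Brain: text -> reply text (your LLM)
Synthesize = Callable[[str], bytes]   # Mouth: reply text -> audio (e.g. wraps ElevenLabs)
Deliver = Callable[[bytes], None]     # Delivery: audio -> caller (e.g. the call stream)


@dataclass
class VoiceAgent:
    transcribe: Transcribe
    think: Think
    synthesize: Synthesize
    deliver: Deliver
    timings_ms: dict[str, float] = field(default_factory=dict)

    def _timed(self, stage: str, fn: Callable[[Any], Any], arg: Any) -> Any:
        """Run one stage and record its wall-clock duration in milliseconds."""
        start = time.perf_counter()
        result = fn(arg)
        self.timings_ms[stage] = (time.perf_counter() - start) * 1000
        return result

    def handle_turn(self, caller_audio: bytes) -> str:
        """One conversational turn: hear, think, speak, deliver."""
        text = self._timed("asr", self.transcribe, caller_audio)
        reply = self._timed("llm", self.think, text)
        audio = self._timed("tts", self.synthesize, reply)
        self._timed("deliver", self.deliver, audio)
        return reply


if __name__ == "__main__":
    sent: list[bytes] = []
    agent = VoiceAgent(
        transcribe=lambda audio: "what time do you open",             # fake ASR
        think=lambda text: f"You asked {text!r}. We open at nine.",   # fake LLM
        synthesize=lambda reply: reply.encode("utf-8"),               # fake TTS audio
        deliver=sent.append,                                          # fake transport
    )
    print(agent.handle_turn(b"\xff" * 160))
    print({stage: round(ms, 3) for stage, ms in agent.timings_ms.items()})
```

The stages run strictly one after another for clarity. A production agent would typically stream partial transcripts into the LLM and start synthesis before the full reply is ready, so the stages overlap and per-turn latency shrinks.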

Final Thoughts

The debate around ElevenLabs.io vs Deepgram.com should not be seen as a choice between competitors. They are two halves of a complete conversational AI system: ElevenLabs provides highly natural synthetic voices, while Deepgram delivers fast, accurate transcription. Together, they let machines both hear and speak in ways that feel seamless to humans.

The real decision developers must make in 2025 is whether to build their own fragile voice infrastructure or to rely on a purpose-built transport layer like FreJun. By focusing on the intelligence and creativity of your AI while letting FreJun handle the voice plumbing, you can deliver applications that feel smooth, natural, and production-ready.

If your goal is to create AI that people enjoy speaking with, then combining ElevenLabs, Deepgram, and FreJun is the winning stack.

Frequently Asked Questions (FAQs)

Are ElevenLabs and Deepgram competitors?

No. They specialize in different areas: Deepgram handles transcription (speech-to-text), while ElevenLabs handles voice generation (text-to-speech).

Can I use ElevenLabs and Deepgram together?

Yes. In fact, they complement each other perfectly: Deepgram transcribes what users say, and ElevenLabs voices the AI’s response.

Does FreJun provide its own speech recognition or voice generation?

No. FreJun focuses exclusively on the transport layer, which keeps it provider-agnostic and compatible with best-in-class providers like ElevenLabs and Deepgram.

Does combining several specialized providers cost more than one all-in-one platform?

Not necessarily. Specialized providers like Deepgram and ElevenLabs deliver much higher quality in their domains, and FreJun reduces hidden infrastructure costs by providing a ready-made transport layer.

The takeaway is that these two platforms are not substitutes but partners. Use Deepgram for recognition, ElevenLabs for generation, and FreJun to glue them together reliably.