Customer expectations for support have shifted toward instant, natural, and personalized conversations. Traditional call centres and closed AI systems often fall short: they are too costly, too rigid, or too complex to adapt. Open-source breakthroughs like InternLM now give developers the control to build highly customized, intelligent agents.

But intelligence alone is not enough; customers expect seamless, real-time voice experiences. This is where FreJun complements InternLM, providing the enterprise-grade voice infrastructure that turns conversational intelligence into production-ready customer interactions.

Table of contents

- The Next Wave in Customer Support

- The Hidden Obstacle: Where Brilliant Voice AI Projects Fail

- The Modern Architecture: Separating the AI Brain from the Voice Infrastructure

- Anatomy of an Intelligent Voice Bot Using InternLM

- A Practical Guide to Building Your Voice Bot Using InternLM

- The Infrastructure Decision: DIY Telephony vs. FreJun’s Managed Platform

- Real-World Impact: Customer Support Use Cases

- Final Thoughts: Build Intelligence, Not Infrastructure

- Frequently Asked Questions (FAQ)

The Next Wave in Customer Support

For years, businesses seeking to automate customer support were locked into proprietary, black-box AI solutions. These systems offered a glimpse of the future but often came with rigid constraints, high costs, and limited customization. Today, the landscape is fundamentally changing with the rise of powerful, open-source large language models. A prime example is InternLM, a sophisticated LLM that puts unprecedented control and flexibility back into the hands of developers.

Building a voice bot using InternLM allows your business to create a highly tailored, intelligent customer support agent. You can fine-tune it for your specific industry, deploy it on your own infrastructure for maximum data security, and scale it without the punitive licensing fees of closed-source alternatives. This is more than just another chatbot; it’s a strategic asset.

However, creating a truly effective voice bot requires more than just a powerful AI model. The real challenge, and the key to success, lies in how you connect that intelligence to your customers in real time.


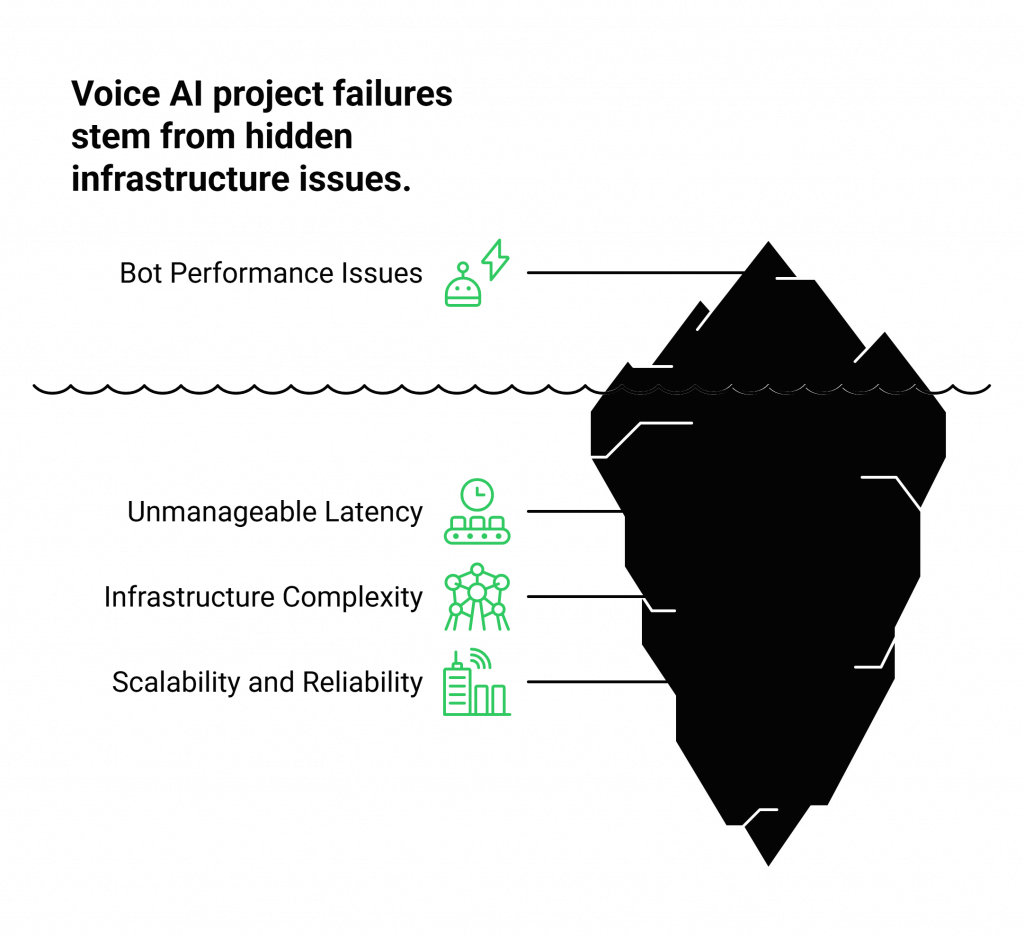

The Hidden Obstacle: Where Brilliant Voice AI Projects Fail

Imagine your development team has spent months building a sophisticated voice bot using InternLM. They’ve fine-tuned the model with your company’s data, perfected the conversational flows, and integrated it with your internal knowledge base. In a controlled test environment, it performs flawlessly, answering queries with remarkable accuracy and nuance.

Then, you connect it to a live phone number. The first real customer call comes in, and the experience falls apart. There are awkward, multi-second pauses between the customer speaking and the bot responding. The audio is choppy. The conversation feels disjointed and unnatural, leading to customer frustration and abandoned calls.

This is the hidden obstacle where countless voice AI projects fail. The problem isn’t the AI; it’s the plumbing. The real-time voice infrastructure, meaning the complex network layer responsible for capturing, streaming, and playing back audio over the telephone network, is a massive engineering challenge. Teams that attempt to build this from scratch face:

- Unmanageable Latency: The delay introduced by capturing audio, sending it to your Automatic Speech Recognition (ASR) service, processing it with InternLM, generating a response, converting it to speech (TTS), and playing it back can easily kill a conversation.

- Infrastructure Complexity: Managing telephony protocols, carrier integrations, and real-time media streaming requires deep, specialized expertise that is rare and expensive. It’s a completely different discipline from AI development.

- Scalability and Reliability Issues: A system built for a demo cannot handle thousands of concurrent calls. Ensuring enterprise-grade uptime, security, and geographic redundancy is a full-time, resource-intensive operation.
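The latency chain described in the first bullet can be made concrete with a simple budget. The sketch below sums per-stage delays for one conversational turn and flags the slowest stage. Every number in it is an illustrative placeholder rather than a measurement, and the stage names and the 1000 ms budget are assumptions chosen only for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: int  # illustrative placeholder, not a measured value


# Hypothetical delays for one turn; replace with figures from your own traces.
PIPELINE = [
    Stage("audio capture and transport", 100),
    Stage("ASR transcription", 300),
    Stage("InternLM inference", 600),
    Stage("TTS synthesis", 250),
    Stage("playback transport", 100),
]


def total_latency_ms(stages):
    """Sum the sequential delays the caller hears as silence."""
    return sum(stage.latency_ms for stage in stages)


def slowest_stage(stages):
    """Return the stage that contributes the most delay."""
    return max(stages, key=lambda stage: stage.latency_ms)


def over_budget(stages, budget_ms):
    """True when the whole turn takes longer than the target budget."""
    return total_latency_ms(stages) > budget_ms


if __name__ == "__main__":
    print("total:", total_latency_ms(PIPELINE), "ms")
    print("slowest:", slowest_stage(PIPELINE).name)
    print("over a 1000 ms budget:", over_budget(PIPELINE, 1000))
```

Because the stages run one after another, shaving time from any single stage helps, but the largest contributor is usually the first place to look.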

This is precisely the problem FreJun was built to solve. We handle the complex voice infrastructure so your team can focus on its core mission: building a world-class voice bot using InternLM.

The Modern Architecture: Separating the AI Brain from the Voice Infrastructure

To build a successful, production-grade voice bot, you need to adopt a modern, decoupled architecture. Think of it as having two distinct but seamlessly connected parts: the AI Brain and the Voice Nervous System.

- The AI Brain (Your Application): This is the core intelligence that you build, own, and control. It consists of the key services that enable conversation:

  - ASR: Transcribes the caller’s spoken words into text.

  - InternLM Core: Processes the text to understand intent, manage the dialogue, and generate a text-based response.

  - TTS: Converts the AI’s text response back into natural-sounding audio.

- The Voice Nervous System (FreJun’s Platform): This is the high-speed, reliable transport layer that connects your AI Brain to the customer. FreJun manages the entire real-time communication pipeline, providing a robust API and developer-first SDKs to handle:

  - Call Connectivity: Managing inbound and outbound calls on the global telephone network.

  - Real-Time Audio Streaming: Capturing the caller’s voice and delivering it to your ASR with minimal delay.

  - Low-Latency Playback: Taking the synthesized audio from your TTS and playing it back to the caller instantly.

This separation of concerns allows you to leverage the full power of an open-source model like InternLM without getting bogged down in the complexities of telecommunications.
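One way to picture this boundary in code is to define the AI Brain against small interfaces, so that it never touches telephony directly. The sketch below is a minimal illustration under that assumption: `SpeechToText`, `DialogueModel`, `TextToSpeech`, and `AIBrain` are hypothetical names, and the three stand-in classes replace real ASR, InternLM, and TTS services so the example runs on its own. It does not show FreJun's actual SDK.

```python
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class DialogueModel(Protocol):
    def reply(self, text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


class AIBrain:
    """Application-side logic: ASR -> LLM -> TTS for one caller turn."""

    def __init__(self, asr: SpeechToText, llm: DialogueModel, tts: TextToSpeech):
        self.asr = asr
        self.llm = llm
        self.tts = tts

    def handle_turn(self, caller_audio: bytes) -> bytes:
        text = self.asr.transcribe(caller_audio)
        answer = self.llm.reply(text)
        return self.tts.synthesize(answer)


# Stand-ins so the sketch runs without any external service.
class EchoASR:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")  # pretend the audio is already text


class CannedLLM:
    def reply(self, text: str) -> str:
        return f"You said: {text}"


class BytesTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")  # pretend the bytes are audio


if __name__ == "__main__":
    brain = AIBrain(EchoASR(), CannedLLM(), BytesTTS())
    print(brain.handle_turn(b"hello"))
```

With this shape, swapping one ASR or TTS provider for another only means supplying a different object that satisfies the same interface; the voice transport layer simply hands audio bytes in and takes audio bytes out.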


Anatomy of an Intelligent Voice Bot Using InternLM

An effective voice bot is an ecosystem of technologies working in perfect harmony. Here are the four essential components that bring your automated agent to life.

- Automatic Speech Recognition (ASR): This is the bot’s “ears.” It listens to the customer’s speech on the call and converts it into machine-readable text. Services like Whisper or Google Speech-to-Text are common choices. FreJun streams the call audio directly to your ASR service, ensuring a clean input for transcription.

- InternLM Core (NLP): This is the bot’s “brain.” The transcribed text is fed to your fine-tuned InternLM model. It analyzes the text to understand the customer’s intent, pulls context from the conversation, and generates a precise, relevant response.

- Text-to-Speech (TTS): This is the bot’s “voice.” Once InternLM generates a text response, a TTS engine like Microsoft Azure TTS or Amazon Polly converts it into a natural, human-like voice. You then pipe this audio back to FreJun’s API to be played to the customer.

- Integration Layer: This is the bot’s “hands.” To provide truly helpful answers, your bot needs access to your business data. This layer uses APIs to connect your application to your CRM (like Zendesk or Freshdesk), databases, and other backend systems to perform actions like checking an order status or updating customer records.
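The "pulls context from the conversation" part of the InternLM Core can be sketched as a small rolling history that is flattened into the model's input on each turn. This is a minimal illustration: `ConversationContext` is a hypothetical helper, the `role: text` prompt layout is an assumption made for readability, and a real deployment would use the chat template that ships with the chosen InternLM checkpoint.

```python
from collections import deque


class ConversationContext:
    """Keeps only the most recent turns so the prompt stays short."""

    def __init__(self, max_turns: int = 6):
        # A bounded deque silently drops the oldest turn when full.
        self._turns = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        self._turns.append((role, text))

    def as_prompt(self, system_prompt: str) -> str:
        """Flatten the system prompt and recent turns into one string."""
        lines = [f"system: {system_prompt}"]
        lines += [f"{role}: {text}" for role, text in self._turns]
        return "\n".join(lines)


if __name__ == "__main__":
    ctx = ConversationContext(max_turns=2)
    ctx.add("user", "Hi")
    ctx.add("assistant", "Hello")
    ctx.add("user", "Where is my order?")
    print(ctx.as_prompt("Be brief."))
```

Capping the history keeps inference time predictable on long calls, at the cost of the model forgetting the earliest turns.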

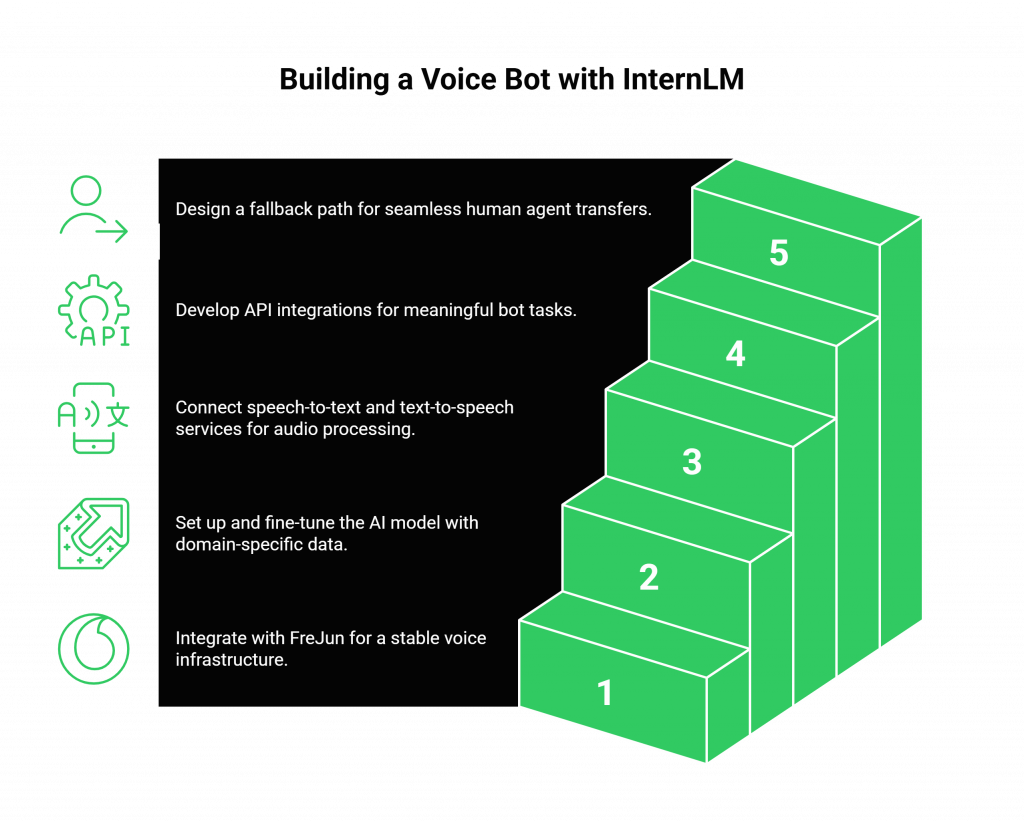

A Practical Guide to Building Your Voice Bot Using InternLM

This step-by-step guide provides a strategic framework for developing and deploying your voice bot, starting with the most critical component for real-world success.

Step 1: Establish a Rock-Solid Voice Foundation

Before you configure your AI model, solve the infrastructure problem. Instead of attempting to build a telephony stack yourself, integrate with FreJun. This foundational step immediately provides your project with:

- A live phone number ready for real-time, low-latency audio streaming.

- Robust server-side SDKs to manage call logic from your backend application.

- An enterprise-grade platform built for scalability and reliability.

By starting here, you create a stable environment where you can develop and test your AI with confidence.

Step 2: Set Up and Configure InternLM

With your voice infrastructure in place, you can now focus on the AI. Install and configure the InternLM model in your preferred environment, whether it’s a private server for data security or a cloud instance. Fine-tune the model with your domain-specific datasets, such as FAQs, past customer support transcripts, and product documentation, to improve its accuracy and relevance.
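Preparing fine-tuning data usually starts with converting existing FAQs into a consistent record format. The sketch below turns question and answer pairs into JSON Lines with a `messages` list of role and content entries. That schema is an assumption based on a common chat-data convention; check the format your fine-tuning toolkit expects before using it. `to_chat_record` and `to_jsonl` are hypothetical helper names.

```python
import json


def to_chat_record(question: str, answer: str, system_prompt: str) -> dict:
    """Build one training example in an assumed chat-style schema."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question.strip()},
            {"role": "assistant", "content": answer.strip()},
        ]
    }


def to_jsonl(pairs, system_prompt: str) -> str:
    """Serialize (question, answer) pairs, skipping incomplete ones."""
    lines = []
    for question, answer in pairs:
        if not question.strip() or not answer.strip():
            continue  # drop pairs with a missing question or answer
        record = to_chat_record(question, answer, system_prompt)
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)


if __name__ == "__main__":
    faq = [
        ("How do I reset my password? ", "Use the reset link we email you."),
        ("", "An answer with no question is skipped."),
    ]
    print(to_jsonl(faq, "You are a helpful support agent."))
```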

Step 3: Integrate Your ASR and TTS Services

Connect your chosen ASR and TTS providers to your core application. Your code will be responsible for orchestrating the flow: receiving the raw audio stream from FreJun, sending it to your ASR, passing the resulting text to InternLM, sending InternLM’s text response to your TTS, and streaming the synthesized audio back to FreJun for playback.
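The orchestration flow described above can be sketched as an asynchronous loop. In this minimal illustration, two `asyncio.Queue` objects stand in for whatever inbound and outbound audio streams the voice platform exposes, and `transcribe`, `generate_reply`, and `synthesize` are hypothetical stand-ins for your ASR, InternLM, and TTS calls. None of these names come from FreJun's SDK, and the sketch assumes each queue item is one complete caller utterance.

```python
import asyncio

END_OF_CALL = None  # sentinel placed on the inbound queue when the caller hangs up


async def transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")  # stand-in for a real ASR request


async def generate_reply(text: str) -> str:
    return f"Echo: {text}"  # stand-in for an InternLM inference call


async def synthesize(text: str) -> bytes:
    return text.encode("utf-8")  # stand-in for a real TTS request


async def run_call(inbound: asyncio.Queue, outbound: asyncio.Queue) -> None:
    """Process utterances in order until the end-of-call sentinel arrives."""
    while True:
        utterance = await inbound.get()
        if utterance is END_OF_CALL:
            break
        text = await transcribe(utterance)
        reply = await generate_reply(text)
        await outbound.put(await synthesize(reply))


async def demo() -> list:
    inbound, outbound = asyncio.Queue(), asyncio.Queue()
    for item in (b"hi", b"order status", END_OF_CALL):
        inbound.put_nowait(item)
    await run_call(inbound, outbound)
    return [outbound.get_nowait() for _ in range(outbound.qsize())]


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Keeping each stage behind its own coroutine makes it straightforward to time the stages individually or to replace one with a streaming variant later.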

Step 4: Build the Business Logic and Integration Layer

Develop the API integrations that allow your InternLM voice bot to perform meaningful tasks. Connect it to your CRM, helpdesk software, and internal databases. This is what transforms your bot from a simple question-answering machine into a functional tool that can resolve customer issues.
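A common shape for this layer is a registry that maps a detected intent to a handler function. The sketch below assumes the model's output has already been reduced to an intent name and a dictionary of slots. The `ORDERS` dictionary stands in for a real CRM or order database, and every name here is hypothetical.

```python
from typing import Callable, Dict, Optional

# Stand-in for a CRM or order database lookup.
ORDERS = {"A100": "shipped", "A200": "processing"}


def check_order_status(slots: dict) -> str:
    order_id = slots.get("order_id", "")
    if not order_id:
        return "Which order number should I look up?"
    status = ORDERS.get(order_id)
    if status is None:
        return f"I could not find order {order_id}."
    return f"Order {order_id} is currently {status}."


def reset_password(slots: dict) -> str:
    # A real handler would trigger a secure workflow through an API here.
    return "I have sent a password reset link to your registered email address."


HANDLERS: Dict[str, Callable[[dict], str]] = {
    "order_status": check_order_status,
    "password_reset": reset_password,
}


def dispatch(intent: str, slots: dict) -> Optional[str]:
    """Run the handler for an intent; None means no handler is registered."""
    handler = HANDLERS.get(intent)
    if handler is None:
        return None
    return handler(slots)


if __name__ == "__main__":
    print(dispatch("order_status", {"order_id": "A100"}))
    print(dispatch("billing_dispute", {}))
```

Returning `None` for an unknown intent gives the caller of `dispatch` a clear signal to fall back to a general answer or a human agent.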

Step 5: Implement Human Handoff and Test Rigorously

No AI is perfect. Design a graceful fallback path for when the bot encounters a query it cannot handle. The bot should be able to seamlessly transfer the call, along with the full conversation context, to a human agent. Once this is in place, begin rigorous testing with pilot calls to identify and fix any issues in the conversational flow before a full-scale launch.
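The fallback path can be sketched as a small decision function plus a payload that carries the conversation context to the human agent. This is a minimal illustration under stated assumptions: the confidence score is presumed to come from your own intent detection, the thresholds and trigger words are arbitrary example values, and `CallState`, `should_hand_off`, and `handoff_payload` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class CallState:
    transcript: list = field(default_factory=list)  # (speaker, text) tuples
    failed_turns: int = 0  # consecutive low-confidence turns


def should_hand_off(state: CallState, confidence: float, user_text: str,
                    min_confidence: float = 0.5, max_failed_turns: int = 2) -> bool:
    """Escalate on an explicit request or on repeated low-confidence turns."""
    lowered = user_text.lower()
    if any(word in lowered for word in ("agent", "human", "representative")):
        return True
    if confidence < min_confidence:
        state.failed_turns += 1
    else:
        state.failed_turns = 0  # a confident turn resets the streak
    return state.failed_turns >= max_failed_turns


def handoff_payload(state: CallState, reason: str) -> dict:
    """Bundle the context a human agent needs to continue the call."""
    return {"reason": reason, "transcript": list(state.transcript)}


if __name__ == "__main__":
    state = CallState(transcript=[("caller", "My invoice is wrong")])
    print(should_hand_off(state, 0.2, "it is still wrong"))
    print(should_hand_off(state, 0.3, "this is not helping"))
    print(handoff_payload(state, "repeated low confidence"))
```

Pilot calls are a good source of values for the two thresholds, since the right balance between persistence and early escalation depends on the queries your customers actually raise.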


The Infrastructure Decision: DIY Telephony vs. FreJun’s Managed Platform

The path you choose for your voice infrastructure will have a profound impact on your project’s timeline, budget, and ultimate success.

| Feature | Building Your Own Voice Infrastructure | Building on FreJun’s Voice Platform |
| --- | --- | --- |
| Development Focus | 60% on telephony, 40% on AI. Your team becomes distracted. | 100% on building and refining your voice bot using InternLM. |
| Time to Market | 6-12 months of complex, specialized engineering. | 1-2 weeks. Integrate a robust API and launch quickly. |
| Latency | High risk. Requires constant, deep optimization to be conversational. | Ultra-low latency by design, engineered for real-time voice AI. |
| Reliability & Uptime | Entirely your responsibility to build and maintain a resilient system. | 99.95% uptime on a geographically distributed, high-availability network. |
| Scalability | A major engineering hurdle that requires significant ongoing investment. | Effortlessly scales to handle thousands of concurrent calls. |
| Expertise Required | Deep knowledge of VoIP, SIP, media servers, and carrier networks. | Your existing backend development skills are all you need. |
| Support | You are on your own when critical infrastructure fails. | Dedicated integration support from our team of experts. |


Real-World Impact: Customer Support Use Cases

Once deployed, your intelligent voice agent can automate a wide range of customer interactions, delivering immediate value to your business. For example:

- Customer Inquiry: “I need to reset my account password.”

- ASR Transcription: The bot’s ASR transcribes the speech into text.

- InternLM Processing: InternLM processes the text and identifies the intent as ‘password reset’.

- Action & Response: The bot triggers a secure workflow via an API and replies through TTS: “I can help with that. For your security, I’ve just sent a password reset link to your registered email address. Please check your inbox.”

This entire interaction is handled instantly and automatically, freeing up human agents to focus on more complex, high-value tasks.

Final Thoughts: Build Intelligence, Not Infrastructure

The emergence of open-source LLMs like InternLM has democratized access to powerful AI, enabling businesses of all sizes to build custom communication solutions. The strategic advantage is no longer just about having AI; it’s about how quickly and effectively you can deploy it to solve real-world business problems.

Wasting valuable engineering resources on the monumental task of building and maintaining a global telecommunications infrastructure is a strategic error. It diverts focus, delays your time-to-market, and introduces unnecessary risk.

By partnering with FreJun, you abstract away that complexity. You leverage a battle-tested, enterprise-grade platform to handle the voice layer, allowing your team to pour all its energy into what truly differentiates your business: creating a smarter, more helpful, and more efficient customer experience with your voice bot.

Get Started with FreJun AI Today!


Frequently Asked Questions (FAQ)

Does FreJun provide the InternLM model or any other LLM?

No. FreJun is a voice infrastructure provider. Our platform is model-agnostic, meaning you can connect any LLM you choose, including InternLM. We provide the real-time streaming API to make your AI audible and interactive over a phone call.

Can I host InternLM on my own infrastructure?

Yes. This is a primary advantage of using an open-source model. You can host InternLM in your own private cloud or on-premise environment and use FreJun as the secure transport layer to connect it to the telephone network.

How long does it take to build a production-ready voice bot with FreJun?

By handling the complex infrastructure, FreJun dramatically accelerates your timeline. Teams can typically move from concept to a production-ready voice bot in a matter of weeks, rather than the many months it would take to build the voice layer from scratch.

Can the voice bot support multiple languages?

Yes. Multilingual support is a key feature. Your ability to support different languages will depend on your chosen ASR and TTS services, as well as the languages supported by the InternLM model you are using. FreJun’s platform can transport the audio for any language.